Mirror of https://github.com/sadoyan/aralez.git, synced 2026-04-30 14:58:38 +08:00

Compare commits

25 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | c78245e695 | | |
| | 66b1a1c399 | | |
| | bba6dd8514 | | |
| | 79485ac69d | | |
| | 61c5625016 | | |
| | 57bdc71acd | | |
| | 9e09b829a6 | | |
| | d3602fa578 | | |
| | e304482667 | | |
| | f8118f9596 | | |
| | f654312466 | | |
| | b44f7069a0 | | |
| | a44979ec82 | | |
| | ece4fa20af | | |
| | 2ad3a059ab | | |
| | 6f012cee69 | | |
| | 51c88c8f7c | | |
| | f91bc41103 | | |
| | 21e1276ff5 | | |
| | 8463cdabbc | | |
| | d0e4b52ce6 | | |
| | b552d24497 | | |
| | 2e33d692bb | | |
| | e586967830 | | |
| | 8d4e434d6a | | |

3

.gitignore

vendored


@@ -5,9 +5,12 @@
*.dll
*.exe
*.sh
/docs/
/docs
/target/
*.iml
.idea/
.etc/
*.ipr
*.iws
/out/

210

Cargo.lock

generated


@@ -128,10 +128,11 @@ dependencies = [
"log",
"mimalloc",
"notify",
"openssl",
"once_cell",
"pingora",
"pingora-core",
"pingora-http",
"pingora-limits",
"pingora-proxy",
"prometheus 0.14.0",
"rand 0.9.1",

@@ -142,6 +143,7 @@ dependencies = [
"sha2",
"tokio",
"tonic",
"tower-http",
"urlencoding",
"x509-parser",
]

@@ -601,9 +603,9 @@ dependencies = [

[[package]]
name = "crypto-common"
version = "0.2.0-rc.2"
version = "0.2.0-rc.3"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "170d71b5b14dec99db7739f6fc7d6ec2db80b78c3acb77db48392ccc3d8a9ea0"
checksum = "8a23fa214dea9efd4dacee5a5614646b30216ae0f05d4bb51bafb50e9da1c5be"
dependencies = [
"hybrid-array",
]

@@ -684,12 +686,12 @@ dependencies = [

[[package]]
name = "digest"
version = "0.11.0-pre.10"
version = "0.11.0-rc.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "6c478574b20020306f98d61c8ca3322d762e1ff08117422ac6106438605ea516"
checksum = "460dd7f37e4950526b54a5a6b1f41b6c8e763c58eb9a8fc8fc05ba5c2f44ca7b"
dependencies = [
"block-buffer 0.11.0-rc.4",
"crypto-common 0.2.0-rc.2",
"crypto-common 0.2.0-rc.3",
]

[[package]]

@@ -1079,6 +1081,12 @@ dependencies = [
"pin-project-lite",
]

[[package]]
name = "http-range-header"
version = "0.4.2"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "9171a2ea8a68358193d15dd5d70c1c10a2afc3e7e4c5bc92bc9f025cebd7359c"

[[package]]
name = "httparse"
version = "1.9.5"

@@ -1169,21 +1177,28 @@ dependencies = [

[[package]]
name = "hyper-util"
version = "0.1.10"
version = "0.1.14"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "df2dcfbe0677734ab2f3ffa7fa7bfd4706bfdc1ef393f2ee30184aed67e631b4"
checksum = "dc2fdfdbff08affe55bb779f33b053aa1fe5dd5b54c257343c17edfa55711bdb"
dependencies = [
"base64",
"bytes",
"futures-channel",
"futures-core",
"futures-util",
"http",
"http-body",
"hyper",
"ipnet",
"libc",
"percent-encoding",
"pin-project-lite",
"socket2",
"system-configuration",
"tokio",
"tower-service",
"tracing",
"windows-registry",
]

[[package]]

@@ -1371,6 +1386,16 @@ version = "2.11.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "469fb0b9cefa57e3ef31275ee7cacb78f2fdca44e4765491884a2b119d4eb130"

[[package]]
name = "iri-string"
version = "0.7.8"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "dbc5ebe9c3a1a7a5127f920a418f7585e9e758e911d0466ed004f393b0e380b2"
dependencies = [
"memchr",
"serde",
]

[[package]]
name = "is_terminal_polyfill"
version = "1.70.1"

@@ -1469,15 +1494,15 @@ checksum = "bbd2bcb4c963f2ddae06a2efc7e9f3591312473c50c6685e1f298068316e66fe"

[[package]]
name = "libc"
version = "0.2.169"
version = "0.2.174"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "b5aba8db14291edd000dfcc4d620c7ebfb122c613afb886ca8803fa4e128a20a"
checksum = "1171693293099992e19cddea4e8b849964e9846f4acee11b3948bcc337be8776"

[[package]]
name = "libmimalloc-sys"
version = "0.1.42"
version = "0.1.43"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "ec9d6fac27761dabcd4ee73571cdb06b7022dc99089acbe5435691edffaac0f4"
checksum = "bf88cd67e9de251c1781dbe2f641a1a3ad66eaae831b8a2c38fbdc5ddae16d4d"
dependencies = [
"cc",
"libc",

@@ -1588,9 +1613,9 @@ dependencies = [

[[package]]
name = "mimalloc"
version = "0.1.46"
version = "0.1.47"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "995942f432bbb4822a7e9c3faa87a695185b0d09273ba85f097b54f4e458f2af"
checksum = "b1791cbe101e95af5764f06f20f6760521f7158f69dbf9d6baf941ee1bf6bc40"
dependencies = [
"libmimalloc-sys",
]

@@ -1601,6 +1626,16 @@ version = "0.3.17"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "6877bb514081ee2a7ff5ef9de3281f14a4dd4bceac4c09388074a6b5df8a139a"

[[package]]
name = "mime_guess"
version = "2.0.5"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "f7c44f8e672c00fe5308fa235f821cb4198414e1c77935c1ab6948d3fd78550e"
dependencies = [
"mime",
"unicase",
]

[[package]]
name = "minimal-lexical"
version = "0.2.1"

@@ -2052,6 +2087,15 @@ dependencies = [
"crc32fast",
]

[[package]]
name = "pingora-limits"
version = "0.5.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "a719a8cb5558ca06bd6076c97b8905d500ea556da89e132ba53d4272844f95b9"
dependencies = [
"ahash",
]

[[package]]
name = "pingora-load-balancing"
version = "0.5.0"

@@ -2403,15 +2447,14 @@ checksum = "2b15c43186be67a4fd63bee50d0303afffcef381492ebe2c5d87f324e1b8815c"

[[package]]
name = "reqwest"
version = "0.12.15"
version = "0.12.20"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "d19c46a6fdd48bc4dab94b6103fccc55d34c67cc0ad04653aad4ea2a07cd7bbb"
checksum = "eabf4c97d9130e2bf606614eb937e86edac8292eaa6f422f995d7e8de1eb1813"
dependencies = [
"base64",
"bytes",
"encoding_rs",
"futures-core",
"futures-util",
"h2",
"http",
"http-body",

@@ -2420,29 +2463,26 @@ dependencies = [
"hyper-rustls",
"hyper-tls",
"hyper-util",
"ipnet",
"js-sys",
"log",
"mime",
"native-tls",
"once_cell",
"percent-encoding",
"pin-project-lite",
"rustls-pemfile",
"rustls-pki-types",
"serde",
"serde_json",
"serde_urlencoded",
"sync_wrapper",
"system-configuration",
"tokio",
"tokio-native-tls",
"tower",
"tower-http",
"tower-service",
"url",
"wasm-bindgen",
"wasm-bindgen-futures",
"web-sys",
"windows-registry",
]

[[package]]

@@ -2709,13 +2749,13 @@ dependencies = [

[[package]]
name = "sha2"
version = "0.11.0-pre.5"
version = "0.11.0-rc.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "19b4241d1a56954dce82cecda5c8e9c794eef6f53abe5e5216bac0a0ea71ffa7"
checksum = "aa1d2e6b3cc4e43a8258a9a3b17aa5dfd2cc5186c7024bba8a64aa65b2c71a59"
dependencies = [
"cfg-if",
"cpufeatures",
"digest 0.11.0-pre.10",
"digest 0.11.0-rc.0",
]

[[package]]

@@ -2762,9 +2802,9 @@ checksum = "3c5e1a9a646d36c3599cd173a41282daf47c44583ad367b8e6837255952e5c67"

[[package]]
name = "socket2"
version = "0.5.8"
version = "0.5.10"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "c970269d99b64e60ec3bd6ad27270092a5394c4e309314b18ae3fe575695fbe8"
checksum = "e22376abed350d73dd1cd119b57ffccad95b4e585a7cda43e286245ce23c0678"
dependencies = [
"libc",
"windows-sys 0.52.0",

@@ -3004,9 +3044,9 @@ dependencies = [

[[package]]
name = "tokio"
version = "1.45.0"
version = "1.45.1"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "2513ca694ef9ede0fb23fe71a4ee4107cb102b9dc1930f6d0fd77aae068ae165"
checksum = "75ef51a33ef1da925cea3e4eb122833cb377c61439ca401b770f54902b806779"
dependencies = [
"backtrace",
"bytes",

@@ -3101,9 +3141,9 @@ dependencies = [

[[package]]
name = "tonic"
version = "0.13.0"
version = "0.13.1"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "85839f0b32fd242bb3209262371d07feda6d780d16ee9d2bc88581b89da1549b"
checksum = "7e581ba15a835f4d9ea06c55ab1bd4dce26fc53752c69a04aac00703bfb49ba9"
dependencies = [
"async-trait",
"axum",

@@ -3147,6 +3187,34 @@ dependencies = [
"tracing",
]

[[package]]
name = "tower-http"
version = "0.6.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "adc82fd73de2a9722ac5da747f12383d2bfdb93591ee6c58486e0097890f05f2"
dependencies = [
"bitflags 2.8.0",
"bytes",
"futures-core",
"futures-util",
"http",
"http-body",
"http-body-util",
"http-range-header",
"httpdate",
"iri-string",
"mime",
"mime_guess",
"percent-encoding",
"pin-project-lite",
"tokio",
"tokio-util",
"tower",
"tower-layer",
"tower-service",
"tracing",
]

[[package]]
name = "tower-layer"
version = "0.3.3"

@@ -3447,29 +3515,29 @@ checksum = "76840935b766e1b0a05c0066835fb9ec80071d4c09a16f6bd5f7e655e3c14c38"

[[package]]
name = "windows-registry"
version = "0.4.0"
version = "0.5.2"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "4286ad90ddb45071efd1a66dfa43eb02dd0dfbae1545ad6cc3c51cf34d7e8ba3"
checksum = "b3bab093bdd303a1240bb99b8aba8ea8a69ee19d34c9e2ef9594e708a4878820"
dependencies = [
"windows-link",
"windows-result",
"windows-strings",
"windows-targets 0.53.0",
]

[[package]]
name = "windows-result"
version = "0.3.2"
version = "0.3.4"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "c64fd11a4fd95df68efcfee5f44a294fe71b8bc6a91993e2791938abcc712252"
checksum = "56f42bd332cc6c8eac5af113fc0c1fd6a8fd2aa08a0119358686e5160d0586c6"
dependencies = [
"windows-link",
]

[[package]]
name = "windows-strings"
version = "0.3.1"
version = "0.4.2"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "87fa48cc5d406560701792be122a10132491cff9d0aeb23583cc2dcafc847319"
checksum = "56e6c93f3a0c3b36176cb1327a4958a0353d5d166c2a35cb268ace15e91d3b57"
dependencies = [
"windows-link",
]

@@ -3525,29 +3593,13 @@ dependencies = [
"windows_aarch64_gnullvm 0.52.6",
"windows_aarch64_msvc 0.52.6",
"windows_i686_gnu 0.52.6",
"windows_i686_gnullvm 0.52.6",
"windows_i686_gnullvm",
"windows_i686_msvc 0.52.6",
"windows_x86_64_gnu 0.52.6",
"windows_x86_64_gnullvm 0.52.6",
"windows_x86_64_msvc 0.52.6",
]

[[package]]
name = "windows-targets"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "b1e4c7e8ceaaf9cb7d7507c974735728ab453b67ef8f18febdd7c11fe59dca8b"
dependencies = [
"windows_aarch64_gnullvm 0.53.0",
"windows_aarch64_msvc 0.53.0",
"windows_i686_gnu 0.53.0",
"windows_i686_gnullvm 0.53.0",
"windows_i686_msvc 0.53.0",
"windows_x86_64_gnu 0.53.0",
"windows_x86_64_gnullvm 0.53.0",
"windows_x86_64_msvc 0.53.0",
]

[[package]]
name = "windows_aarch64_gnullvm"
version = "0.48.5"

@@ -3560,12 +3612,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "32a4622180e7a0ec044bb555404c800bc9fd9ec262ec147edd5989ccd0c02cd3"

[[package]]
name = "windows_aarch64_gnullvm"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "86b8d5f90ddd19cb4a147a5fa63ca848db3df085e25fee3cc10b39b6eebae764"

[[package]]
name = "windows_aarch64_msvc"
version = "0.48.5"

@@ -3578,12 +3624,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "09ec2a7bb152e2252b53fa7803150007879548bc709c039df7627cabbd05d469"

[[package]]
name = "windows_aarch64_msvc"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "c7651a1f62a11b8cbd5e0d42526e55f2c99886c77e007179efff86c2b137e66c"

[[package]]
name = "windows_i686_gnu"
version = "0.48.5"

@@ -3596,24 +3636,12 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "8e9b5ad5ab802e97eb8e295ac6720e509ee4c243f69d781394014ebfe8bbfa0b"

[[package]]
name = "windows_i686_gnu"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "c1dc67659d35f387f5f6c479dc4e28f1d4bb90ddd1a5d3da2e5d97b42d6272c3"

[[package]]
name = "windows_i686_gnullvm"
version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "0eee52d38c090b3caa76c563b86c3a4bd71ef1a819287c19d586d7334ae8ed66"

[[package]]
name = "windows_i686_gnullvm"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "9ce6ccbdedbf6d6354471319e781c0dfef054c81fbc7cf83f338a4296c0cae11"

[[package]]
name = "windows_i686_msvc"
version = "0.48.5"

@@ -3626,12 +3654,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "240948bc05c5e7c6dabba28bf89d89ffce3e303022809e73deaefe4f6ec56c66"

[[package]]
name = "windows_i686_msvc"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "581fee95406bb13382d2f65cd4a908ca7b1e4c2f1917f143ba16efe98a589b5d"

[[package]]
name = "windows_x86_64_gnu"
version = "0.48.5"

@@ -3644,12 +3666,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "147a5c80aabfbf0c7d901cb5895d1de30ef2907eb21fbbab29ca94c5b08b1a78"

[[package]]
name = "windows_x86_64_gnu"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "2e55b5ac9ea33f2fc1716d1742db15574fd6fc8dadc51caab1c16a3d3b4190ba"

[[package]]
name = "windows_x86_64_gnullvm"
version = "0.48.5"

@@ -3662,12 +3678,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "24d5b23dc417412679681396f2b49f3de8c1473deb516bd34410872eff51ed0d"

[[package]]
name = "windows_x86_64_gnullvm"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "0a6e035dd0599267ce1ee132e51c27dd29437f63325753051e71dd9e42406c57"

[[package]]
name = "windows_x86_64_msvc"
version = "0.48.5"

@@ -3680,12 +3690,6 @@ version = "0.52.6"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "589f6da84c646204747d1270a2a5661ea66ed1cced2631d546fdfb155959f9ec"

[[package]]
name = "windows_x86_64_msvc"
version = "0.53.0"
source = "registry+https://github.com/rust-lang/crates.io-index"
checksum = "271414315aff87387382ec3d271b52d7ae78726f5d44ac98b4f4030c91880486"

[[package]]
name = "wit-bindgen-rt"
version = "0.33.0"

19

Cargo.toml


@@ -11,7 +11,7 @@ panic = "abort"
strip = true

[dependencies]
tokio = { version = "1.45.0", features = ["full"] }
tokio = { version = "1.45.1", features = ["full"] }
#pingora = { version = "0.5.0", features = ["lb", "rustls"] } # openssl, rustls, boringssl
pingora = { version = "0.5.0", features = ["lb", "openssl"] } # openssl, rustls, boringssl
serde = { version = "1.0.219", features = ["derive"] }

@@ -19,6 +19,8 @@ dashmap = "7.0.0-rc2"
pingora-core = "0.5.0"
pingora-proxy = "0.5.0"
pingora-http = "0.5.0"
pingora-limits = "0.5.0"
#pingora-pool = "0.5.0"
async-trait = "0.1.88"
env_logger = "0.11.8"
log = "0.4.27"

@@ -26,7 +28,7 @@ futures = "0.3.31"
notify = "8.0.0"
axum = { version = "0.8.4" }
axum-server = { version = "0.7.2", features = ["tls-openssl"] }
reqwest = { version = "0.12.15", features = ["json", "native-tls-alpn"] }
reqwest = { version = "0.12.20", features = ["json", "native-tls-alpn"] }
#reqwest = { version = "0.12.15", features = ["json", "rustls-tls"] }
#reqwest = { version = "0.12.15", default-features = false, features = ["rustls-tls", "json"] }

@@ -34,18 +36,21 @@ serde_yaml = "0.9.34-deprecated"
rand = "0.9.0"
base64 = "0.22.1"
jsonwebtoken = "9.3.1"
tonic = "0.13.0"
sha2 = { version = "0.11.0-pre.5", default-features = false }
tonic = "0.13.1"
sha2 = { version = "0.11.0-rc.0", default-features = false }
base16ct = { version = "0.2.0", features = ["alloc"] }
urlencoding = "2.1.3"
arc-swap = "1.7.1"
#rustls = { version = "0.23.27", features = ["ring"] }
mimalloc = { version = "0.1.46", default-features = false }
mimalloc = { version = "0.1.47", default-features = false }
prometheus = "0.14.0"
lazy_static = "1.5.0"
openssl = "0.10.72"
#openssl = "0.10.73"
x509-parser = "0.17.0"
rustls-pemfile = "2.2.0"
#hickory-client = { version = "0.25.2" }
tower-http = { version = "0.6.6", features = ["fs"] }
once_cell = "1.20.2"
#moka = { version = "0.12.10", features = ["sync"] }

#openssl = "0.10.72"

120

METRICS.md


@@ -1,120 +0,0 @@

|

||||

# 📈 Aralez Prometheus Metrics Reference

|

||||

|

||||

This document outlines Prometheus metrics for the [Aralez](https://github.com/sadoyan/aralez) reverse proxy.

|

||||

These metrics can be used for monitoring, alerting and performance analysis.

|

||||

|

||||

Exposed to `http://config_address/metrics`

|

||||

|

||||

By default `http://127.0.0.1:3000/metrics`

|

||||

|

||||

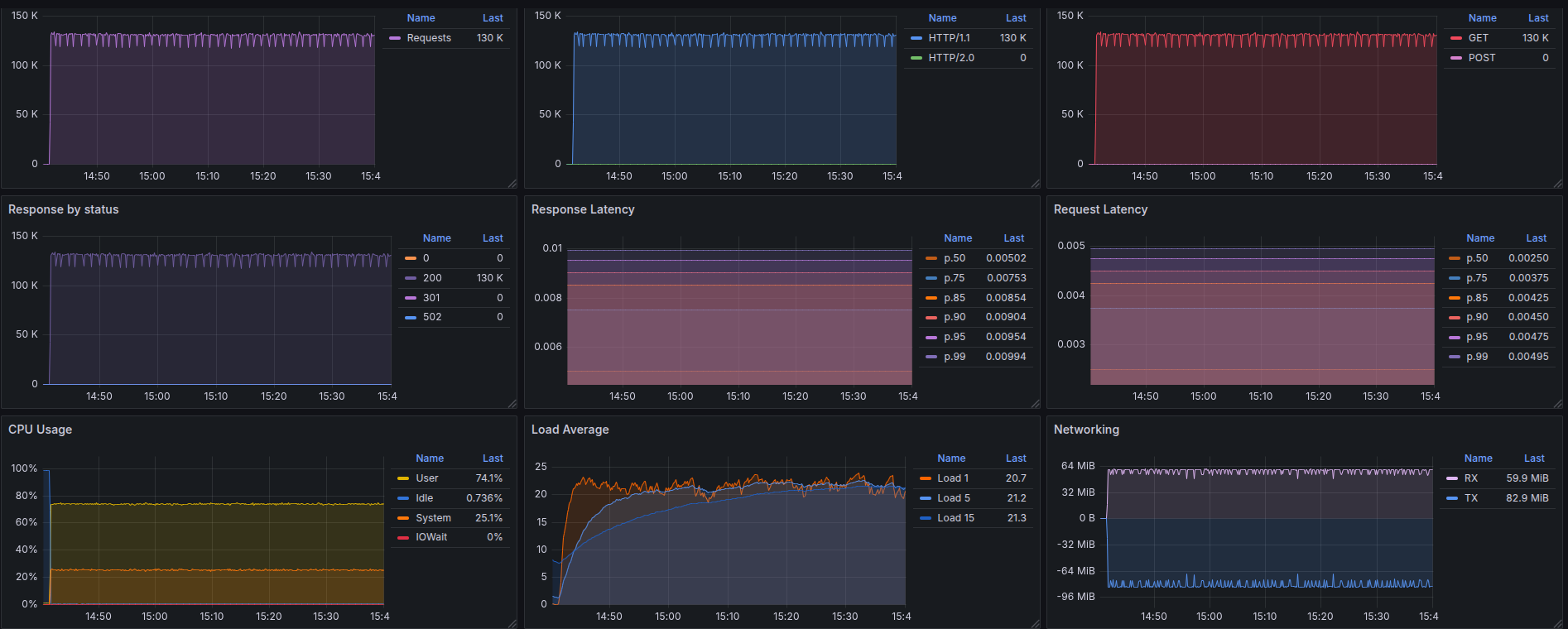

# 📊 Example Grafana dashboard during stress test :

|

||||

|

||||

|

||||

|

||||

---

|

||||

|

||||

## 🛠️ Prometheus Metrics

|

||||

|

||||

### 1. `aralez_requests_total`

|

||||

|

||||

- **Type**: `Counter`

|

||||

- **Purpose**: Total amount requests served by Aralez.

|

||||

|

||||

**PromQL example:**

|

||||

|

||||

```promql

|

||||

rate(aralez_requests_total[5m])

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### 2. `aralez_errors_total`

|

||||

|

||||

- **Type**: `Counter`

|

||||

- **Purpose**: Count of requests that resulted in an error.

|

||||

|

||||

**PromQL example:**

|

||||

|

||||

```promql

|

||||

rate(aralez_errors_total[5m])

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### 3. `aralez_responses_total{status="200"}`

|

||||

|

||||

- **Type**: `CounterVec`

|

||||

- **Purpose**: Count of responses by HTTP status code.

|

||||

|

||||

**PromQL example:**

|

||||

|

||||

```promql

|

||||

rate(aralez_responses_total{status=~"5.."}[5m]) > 0

|

||||

```

|

||||

|

||||

> Useful for alerting on 5xx errors.
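
The 5xx expression above could be wired into a Prometheus alerting rule. The following is only a sketch: the group name, alert name, `for` duration, label, and annotation text are illustrative assumptions and are not part of Aralez or of the removed METRICS.md; only the `expr` is taken from the text.

```yaml
# Hypothetical Prometheus rule file. Everything except `expr` is an assumption.
groups:
  - name: aralez                     # assumed group name
    rules:
      - alert: Aralez5xxResponses    # assumed alert name
        # Expression copied from the PromQL example in METRICS.md.
        expr: rate(aralez_responses_total{status=~"5.."}[5m]) > 0
        for: 5m                      # assumed: fire only if sustained for 5 minutes
        labels:
          severity: warning          # assumed label
        annotations:
          summary: "Aralez is returning 5xx responses"
```

Because `aralez_responses_total` is labeled by `status`, the rule as written would yield one alert per matching status code.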


---

### 4. `aralez_response_latency_seconds`

- **Type**: `Histogram`
- **Purpose**: Tracks the latency of responses in seconds.

**Example bucket output:**

```prometheus
aralez_response_latency_seconds_bucket{le="0.01"} 15
aralez_response_latency_seconds_bucket{le="0.1"} 120
aralez_response_latency_seconds_bucket{le="0.25"} 245
aralez_response_latency_seconds_bucket{le="0.5"} 500
...
aralez_response_latency_seconds_count 1023
aralez_response_latency_seconds_sum 42.6
```

| Metric | Meaning |
|---|---|
| `bucket{le="0.1"} 120` | 120 requests were ≤ 100ms |
| `bucket{le="0.25"} 245` | 245 requests were ≤ 250ms |
| `count` | Total number of observations (i.e., total responses measured) |
| `sum` | Total time of all responses, in seconds |

### 🔍 How to interpret:

- `le` means “less than or equal to”.
- `count` is total amount of observations.
- `sum` is the total time (in seconds) of all responses.

**PromQL examples:**

🔹 **95th percentile latency**

```promql
histogram_quantile(0.95, rate(aralez_response_latency_seconds_bucket[5m]))

```

🔹 **Average latency**

```promql
rate(aralez_response_latency_seconds_sum[5m]) / rate(aralez_response_latency_seconds_count[5m])
```

---

## ✅ Notes

- Metrics are registered after the first served request.

---
✅ Summary of key metrics

| Metric Name | Type | What it Tells You |
|---|---|---|
| `aralez_requests_total` | Counter | Total requests served |
| `aralez_errors_total` | Counter | Number of failed requests |
| `aralez_responses_total{status="200"}` | CounterVec | Response status breakdown |
| `aralez_response_latency_seconds` | Histogram | How fast responses are |

📘 *Last updated: May 2025*

141

README.md


@@ -1,19 +1,29 @@

# Aralez (Արալեզ), Reverse proxy and service mesh built on top of Cloudflare's Pingora
---

**What Aralez means?**
<ins>Aralez = Արալեզ. Named after the legendary Armenian guardian spirit, winged dog-like creature, that descend upon fallen heroes to lick their wounds and resurrect them.</ins>.
# Aralez (Արալեզ),

### **Reverse proxy and service mesh built on top of Cloudflare's Pingora**

What Aralez means ?
**Aralez = Արալեզ** <ins>.Named after the legendary Armenian guardian spirit, winged dog-like creature, that descend upon fallen heroes to lick their wounds and resurrect them.</ins>.

Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers world-class performance, security and scalability — right out of the box.

[](https://www.buymeacoffee.com/sadoyan)

---

## 🔧 Key Features

- **Dynamic Config Reloads** — Upstreams can be updated live via API, no restart required.
- **TLS Termination** — Built-in OpenSSL support.
- **Automatic load of certificates** — Automatically reads and loads certificates from a folder, without a restart.
- **Upstreams TLS detection** — Aralez will automatically detect if upstreams uses secure connection.
- **Built in rate limiter** — Limit requests to server, by setting up upper limit for requests per seconds, per virtualhost.
- **Global rate limiter** — Set rate limit for all virtualhosts.
- **Per path rate limiter** — Set rate limit for specific paths. Path limits will override global limits.
- **Authentication** — Supports Basic Auth, API tokens, and JWT verification.
  - **Basic Auth**
  - **API Key** via `x-api-key` header

@@ -24,6 +34,7 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
- Failover with health checks
- Sticky sessions via cookies
- **Unified Port** — Serve HTTP and WebSocket traffic over the same connection.
- **Built in file server** — Build in minimalistic file server for serving static files, should be added as upstreams for public access.
- **Memory Safe** — Created purely on Rust.
- **High Performance** — Built with [Pingora](https://github.com/cloudflare/pingora) and tokio for async I/O.

@@ -61,28 +72,32 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers

### 🔧 `main.yaml`

| Key | Example Value | Description |
|---|---|---|
| **threads** | 12 | Number of running daemon threads. Optional, defaults to 1 |
| **user** | aralez | Optional, Username for running aralez after dropping root privileges, requires to launch as root |
| **group** | aralez | Optional,Group for running aralez after dropping root privileges, requires to launch as root |
| **daemon** | false | Run in background (boolean) |
| **upstream_keepalive_pool_size** | 500 | Pool size for upstream keepalive connections |
| **pid_file** | /tmp/aralez.pid | Path to PID file |
| **error_log** | /tmp/aralez_err.log | Path to error log file |
| **upgrade_sock** | /tmp/aralez.sock | Path to live upgrade socket file |
| **config_address** | 0.0.0.0:3000 | HTTP API address for pushing upstreams.yaml from remote location |
| **config_tls_address** | 0.0.0.0:3001 | HTTPS API address for pushing upstreams.yaml from remote location |
| **config_tls_certificate** | etc/server.crt | Certificate file path for API. Mandatory if proxy_address_tls is set, else optional |
| **config_tls_key_file** | etc/key.pem | Private Key file path. Mandatory if proxy_address_tls is set, else optional |
| **proxy_address_http** | 0.0.0.0:6193 | Aralez HTTP bind address |
| **proxy_address_tls** | 0.0.0.0:6194 | Aralez HTTPS bind address (Optional) |
| **proxy_certificates** | etc/certs/ | The directory containing certificate and key files. In a format {NAME}.crt, {NAME}.key. |
| **upstreams_conf** | etc/upstreams.yaml | The location of upstreams file |
| **log_level** | info | Log level , possible values : info, warn, error, debug, trace, off |
| **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |
| **hc_interval** | 2 | Interval for health checks in seconds |
| **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |

| Key | Example Value | Description |
|---|---|---|
| **threads** | 12 | Number of running daemon threads. Optional, defaults to 1 |
| **user** | aralez | Optional, Username for running aralez after dropping root privileges, requires to launch as root |
| **group** | aralez | Optional,Group for running aralez after dropping root privileges, requires to launch as root |
| **daemon** | false | Run in background (boolean) |
| **upstream_keepalive_pool_size** | 500 | Pool size for upstream keepalive connections |
| **pid_file** | /tmp/aralez.pid | Path to PID file |
| **error_log** | /tmp/aralez_err.log | Path to error log file |
| **upgrade_sock** | /tmp/aralez.sock | Path to live upgrade socket file |
| **config_address** | 0.0.0.0:3000 | HTTP API address for pushing upstreams.yaml from remote location |
| **config_tls_address** | 0.0.0.0:3001 | HTTPS API address for pushing upstreams.yaml from remote location |
| **config_tls_certificate** | etc/server.crt | Certificate file path for API. Mandatory if proxy_address_tls is set, else optional |
| **proxy_tls_grade** | (high, medium, unsafe) | Grade of TLS ciphers, for easy configuration. High matches Qualys SSL Labs A+ (defaults to medium) |
| **config_tls_key_file** | etc/key.pem | Private Key file path. Mandatory if proxy_address_tls is set, else optional |
| **proxy_address_http** | 0.0.0.0:6193 | Aralez HTTP bind address |
| **proxy_address_tls** | 0.0.0.0:6194 | Aralez HTTPS bind address (Optional) |
| **proxy_certificates** | etc/certs/ | The directory containing certificate and key files. In a format {NAME}.crt, {NAME}.key. |
| **upstreams_conf** | etc/upstreams.yaml | The location of upstreams file |
| **log_level** | info | Log level , possible values : info, warn, error, debug, trace, off |
| **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |
| **hc_interval** | 2 | Interval for health checks in seconds |
| **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |
| **file_server_folder** | /some/local/folder | Optional, local folder to serve |
| **file_server_address** | 127.0.0.1:3002 | Optional, Local address for file server. Can set as upstream for public access |
| **config_api_enabled** | true | Boolean to enable/disable remote config push capability |

|

||||

|

||||

### 🌐 `upstreams.yaml`

|

||||

|

||||

@@ -103,12 +118,41 @@ Make the binary executable `chmod 755 ./aralez-VERSION` and run.

|

||||

|

||||

File names:

|

||||

|

||||

| File Name                 | Description                                                   |
|---------------------------|---------------------------------------------------------------|
| `aralez-x86_64-musl.gz`   | Static Linux x86_64 binary, without any system dependency     |
| `aralez-x86_64-glibc.gz`  | Dynamic Linux x86_64 binary, with minimal system dependencies |
| `aralez-aarch64-musl.gz`  | Static Linux ARM64 binary, without any system dependency      |
| `aralez-aarch64-glibc.gz` | Dynamic Linux ARM64 binary, with minimal system dependencies  |

|

||||

| File Name                 | Description                                                              |
|---------------------------|--------------------------------------------------------------------------|
| `aralez-x86_64-musl.gz`   | Static Linux x86_64 binary, without any system dependency                |
| `aralez-x86_64-glibc.gz`  | Dynamic Linux x86_64 binary, with minimal system dependencies            |
| `aralez-aarch64-musl.gz`  | Static Linux ARM64 binary, without any system dependency                 |
| `aralez-aarch64-glibc.gz` | Dynamic Linux ARM64 binary, with minimal system dependencies             |
| `sadoyan/aralez`          | Docker image on Debian 13 slim (https://hub.docker.com/r/sadoyan/aralez) |

|

||||

|

||||

**Via docker**

|

||||

|

||||

```shell
docker run -d \
  -v /local/path/to/config:/etc/aralez:ro \
  -p 80:80 \
  -p 443:443 \
  sadoyan/aralez
```

|

||||

|

||||

## 💡 Note

|

||||

|

||||

In general, **glibc** builds run faster but have a few basic system dependencies, for example:

```
linux-vdso.so.1 (0x00007ffeea33b000)
libgcc_s.so.1 => /lib/x86_64-linux-gnu/libgcc_s.so.1 (0x00007f09e7377000)
libm.so.6 => /lib/x86_64-linux-gnu/libm.so.6 (0x00007f09e6320000)
libc.so.6 => /lib/x86_64-linux-gnu/libc.so.6 (0x00007f09e613f000)
/lib64/ld-linux-x86-64.so.2 (0x00007f09e73b1000)
```

These are common to all Linux systems, so the binary should work on almost any Linux system.

**musl** builds are 100% portable, statically compiled binaries with zero system dependencies.
In general, musl builds are slightly slower.
The most intensive tests show 107k-110k requests per second on **glibc** binaries versus 97k-100k on **musl** ones.

|

||||

|

||||

## 🔌 Running the Proxy

|

||||

|

||||

@@ -142,6 +186,7 @@ A sample `upstreams.yaml` entry:

|

||||

provider: "file"

|

||||

sticky_sessions: false

|

||||

to_https: false

|

||||

rate_limit: 10

|

||||

headers:

|

||||

- "Access-Control-Allow-Origin:*"

|

||||

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

||||

@@ -152,6 +197,7 @@ authorization:

|

||||

myhost.mydomain.com:

|

||||

paths:

|

||||

"/":

|

||||

rate_limit: 20

|

||||

to_https: false

|

||||

headers:

|

||||

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

||||

@@ -166,19 +212,29 @@ myhost.mydomain.com:

|

||||

servers:

|

||||

- "127.0.0.4:8443"

|

||||

- "127.0.0.5:8443"

|

||||

"/.well-known/acme-challenge":

|

||||

healthcheck: false

|

||||

servers:

|

||||

- "127.0.0.1:8001"

|

||||

```

|

||||

|

||||

**This means:**

|

||||

|

||||

- Sticky sessions are disabled globally. This setting applies to all upstreams.
- HTTP to HTTPS redirect is disabled globally, but can be overridden by the `to_https` setting per upstream. If enabled, all requests will be 301 redirected to HTTPS.
- Requests to each hosted domain are limited to 10 requests per second per virtualhost.
- Request limits are calculated per requester IP plus requested virtualhost.
- If the requester exceeds the limit, it receives a `429 Too Many Requests` error.
- The rate limit is optional. The rate limiter is disabled if the parameter is removed from the config entirely.
- Requests to `myhost.mydomain.com/` will be limited to 20 requests per second.
- Requests to `myhost.mydomain.com/` will be proxied to `127.0.0.1` and `127.0.0.2`.
- Plain HTTP to `myhost.mydomain.com/foo` will get a 301 redirect to the configured TLS port of Aralez.
- Requests to `myhost.mydomain.com/foo` will be proxied to `127.0.0.4` and `127.0.0.5`.
- Requests to `myhost.mydomain.com/.well-known/acme-challenge` will be proxied to `127.0.0.1:8001`, but health checks are disabled.
- SSL/TLS for upstreams is detected automatically; no config parameter is needed.
- Assuming `127.0.0.5:8443` is SSL protected, the inner traffic will use TLS.
- Self-signed certificates are silently accepted.
- Global headers (CORS in this case) will be injected into all upstreams.
- Additional headers will be injected into the request for `myhost.mydomain.com`.
- You can choose any path; deeply nested paths are supported, and the best match is chosen.

|

||||

- All requests to servers will require JWT token authentication (You can comment out the authorization to disable it),
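The rate-limit bullets above (a limit counted per requester IP plus requested virtualhost, answered with `429 Too Many Requests` when exceeded) can be illustrated with a minimal sketch. This is not Aralez's implementation: the `RateLimiter` type, its `check` method, and the fixed one-second window are assumptions made for illustration, using only the standard library.

```rust
use std::collections::HashMap;
use std::net::IpAddr;
use std::time::{Duration, Instant};

/// Hypothetical fixed-window counter keyed by (client IP, virtual host).
struct RateLimiter {
    limit: u32,
    window: Duration,
    counters: HashMap<(IpAddr, String), (Instant, u32)>,
}

impl RateLimiter {
    /// `limit` is the number of requests allowed per one-second window.
    fn new(limit: u32) -> Self {
        Self {
            limit,
            window: Duration::from_secs(1),
            counters: HashMap::new(),
        }
    }

    /// Returns the status code a proxy could answer with: 200 or 429.
    fn check(&mut self, ip: IpAddr, host: &str, now: Instant) -> u16 {
        let entry = self
            .counters
            .entry((ip, host.to_string()))
            .or_insert((now, 0));
        // Start a fresh window once the previous one has elapsed.
        if now.duration_since(entry.0) >= self.window {
            *entry = (now, 0);
        }
        entry.1 += 1;
        if entry.1 > self.limit {
            429
        } else {
            200
        }
    }
}
```

With `RateLimiter::new(2)`, the third call to `check` for the same IP and host inside one second yields 429, while a different IP or a different host still gets 200, because each (IP, host) pair has its own counter.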

|
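The README says that nested paths are supported and "the best match" is chosen, without defining "best". One plausible reading is longest-prefix matching on path segments; the sketch below assumes that reading, and the function names `best_match` and `is_prefix` are hypothetical.

```rust
/// Pick the longest configured path that is a segment-wise prefix of the request path.
/// Assumption: "best match" means the longest matching prefix.
fn best_match<'a>(configured: &[&'a str], request_path: &str) -> Option<&'a str> {
    configured
        .iter()
        .copied()
        .filter(|p| is_prefix(p, request_path))
        .max_by_key(|p| p.len())
}

/// A configured path matches when it equals the request path
/// or is followed by a '/' in the request path.
fn is_prefix(configured: &str, request_path: &str) -> bool {
    if configured == "/" {
        return request_path.starts_with('/');
    }
    match request_path.strip_prefix(configured) {
        Some(rest) => rest.is_empty() || rest.starts_with('/'),
        None => false,
    }
}
```

Under this assumption, a request for `/foo/bar` resolves to the `/foo` entry, while `/foobar` falls back to `/` because `/foo` is not a whole-segment prefix of it.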

||||

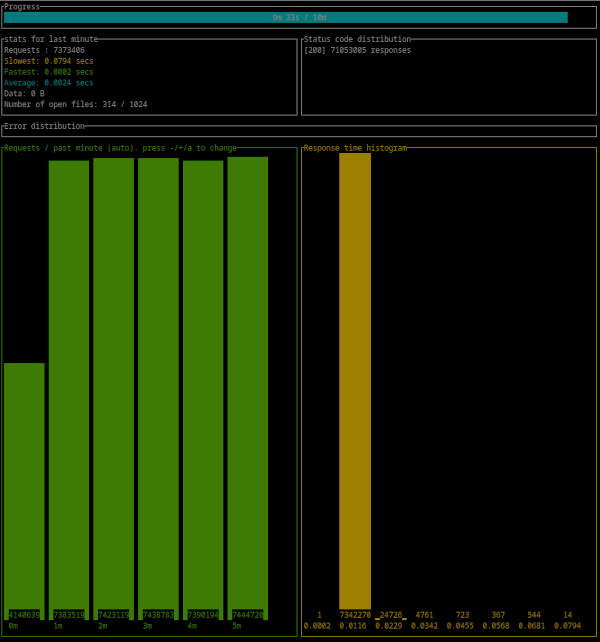

@@ -442,4 +498,21 @@ Error distribution:

|

||||

[228] aborted due to deadline

|

||||

```

|

||||

|

||||

|

||||

|

||||

|

||||

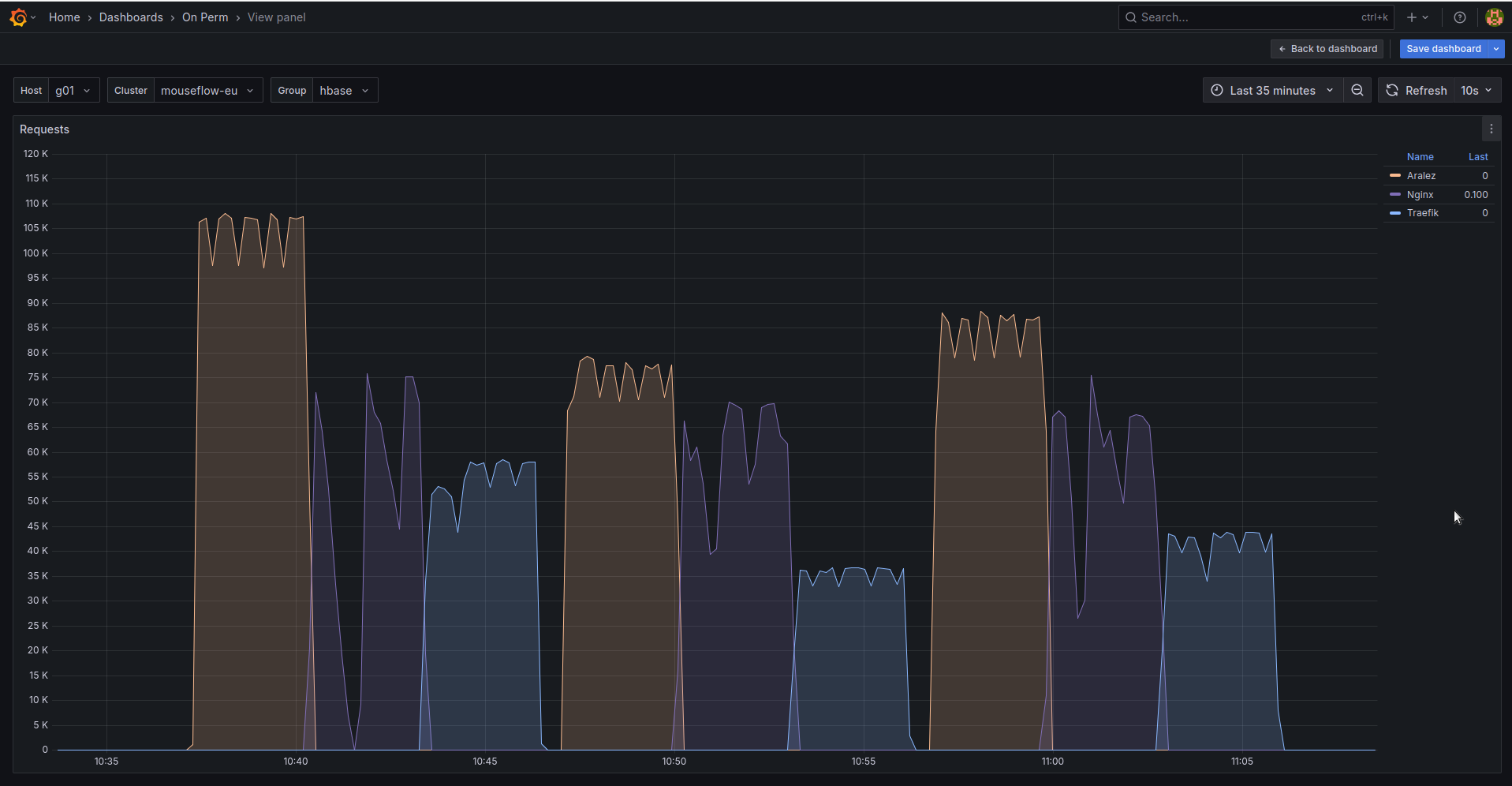

## 🚀 Aralez, Nginx, Traefik performance benchmark

|

||||

|

||||

This benchmark was run on 4 servers, each with an Intel(R) Xeon(R) E-2174G CPU @ 3.80GHz and 64 GB RAM.

1. Server running Aralez, Traefik, and Nginx on different ports, tuned as much as I could.
2. 3x upstream servers running Nginx, replying with dummy JSON hardcoded in the config file for maximum performance.

All servers are connected to the same switch with 1GB ports in a datacenter, not a home lab. The results:

The results show requests per second performed by the load balancer. You can see 3 batches with 800 concurrent users.

1. Requests via http1.1 to a plain text endpoint.
2. Requests via http2 to an SSL endpoint.
3. Mixed workload with plain http1.1 and http2 SSL.

|

||||

|

||||

|

||||

BIN

assets/bench.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 160 KiB |

@@ -1,5 +1,5 @@

|

||||

# Main configuration file , applied on startup

|

||||

threads: 12 # Nubber of daemon threads default setting

|

||||

# Main configuration file, applied on startup

|

||||

threads: 12 # Number of daemon threads default setting

|

||||

#user: pastor # Username for running aralez after dropping root privileges, requires program to start as root

|

||||

#group: pastor # Group for running aralez after dropping root privileges, requires program to start as root

|

||||

daemon: false # Run in background

|

||||

@@ -7,15 +7,19 @@ upstream_keepalive_pool_size: 500 # Pool size for upstream keepalive connections

|

||||

pid_file: /tmp/aralez.pid # Path to PID file

|

||||

error_log: /tmp/aralez_err.log # Path to error log

|

||||

upgrade_sock: /tmp/aralez.sock # Path to socket file

|

||||

config_api_enabled: true # Boolean to enable/disable remote config push capability.

|

||||

config_address: 0.0.0.0:3000 # HTTP API address for pushing upstreams.yaml from remote location

|

||||

config_tls_address: 0.0.0.0:3001 # HTTP TLS API address for pushing upstreams.yaml from remote location

|

||||

config_tls_certificate: etc/server.crt # Mandatory if config_tls_address is set

|

||||

config_tls_key_file: etc/key.pem # Mandatory if config_tls_address is set

|

||||

config_tls_certificate: /etc/server.crt # Mandatory if config_tls_address is set

|

||||

config_tls_key_file: /etc/key.pem # Mandatory if config_tls_address is set

|

||||

proxy_address_http: 0.0.0.0:6193 # Proxy HTTP bind address

|

||||

proxy_address_tls: 0.0.0.0:6194 # Optional, Proxy TLS bind address

|

||||

proxy_certificates: etc/yoyo # Mandatory if proxy_address_tls set, should contain certificate and key files strictly in a format {NAME}.crt, {NAME}.key.

|

||||

upstreams_conf: etc/upstreams.yaml # the location of upstreams file

|

||||

proxy_certificates: /etc/certs # Mandatory if proxy_address_tls set, should contain a certificate and key files strictly in a format {NAME}.crt, {NAME}.key.

|

||||

proxy_tls_grade: a+ # Grade of TLS suite for proxy (a+, a, b, c, unsafe), matching grades of Qualys SSL Labs

|

||||

upstreams_conf: /etc/upstreams.yaml # the location of upstreams file

|

||||

file_server_folder: /opt/storage # Optional, local folder to serve

|

||||

file_server_address: 127.0.0.1:3002 # Optional, Local address for file server. Can set as upstream for public access.

|

||||

log_level: info # info, warn, error, debug, trace, off

|

||||

hc_method: HEAD # Healthcheck method (HEAD, GET, POST are supported) UPPERCASE

|

||||

hc_interval: 2 #Interval for health checks in seconds

|

||||

master_key: 910517d9-f9a1-48de-8826-dbadacbd84af-cb6f830e-ab16-47ec-9d8f-0090de732774 # Master key for working with API server and JWT Secret

|

||||

master_key: 910517d9-f9a1-48de-8826-dbadacbd84af-cb6f830e-ab16-47ec-9d8f-0090de732774 # Master key for working with API server and JWT Secret

|

||||

@@ -1,7 +1,8 @@

|

||||

# The file under watch and hot reload, changes are applied immediately, no need to restart or reload.

|

||||

provider: "file" # consul

|

||||

provider: "file" # consul, kubernetes

|

||||

sticky_sessions: false

|

||||

to_ssl: false

|

||||

#rate_limit: 100

|

||||

headers:

|

||||

- "Access-Control-Allow-Origin:*"

|

||||

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

||||

@@ -16,20 +17,32 @@ authorization:

|

||||

# creds: "5ecbf799-1343-4e94-a9b5-e278af5cd313-56b45249-1839-4008-a450-a60dc76d2bae"

|

||||

consul: # If the provider is consul. Otherwise, ignored.

|

||||

servers:

|

||||

- "http://master1:8500"

|

||||

- "http://192.168.22.1:8500"

|

||||

- "http://master1.foo.local:8500"

|

||||

- "http://consul1:8500"

|

||||

- "http://consul2:8500"

|

||||

- "http://consul3:8500"

|

||||

services: # proxy: The hostname to access the proxy server, real : The real service name in Consul database.

|

||||

- proxy: "proxy-frontend-dev-frontend-srv"

|

||||

real: "frontend-dev-frontend-srv"

|

||||

token: "8e2db809-845b-45e1-8b47-2c8356a09da0-a4370955-18c2-4d6e-a8f8-ffcc0b47be81" # Consul server access token, If Consul auth is enabled

|

||||

kubernetes:

|

||||

servers:

|

||||

- "172.16.0.11:5443" # KUBERNETES_SERVICE_HOST : KUBERNETES_SERVICE_PORT_HTTPS

|

||||

services:

|

||||

- proxy: "vt-api-service-v2"

|

||||

real: "vt-api-service-v2"

|

||||

- proxy: "vt-search-service"

|

||||

real: "vt-search-service"

|

||||

- proxy: "vt-websocket-service"

|

||||

real: "vt-websocket-service"

|

||||

tokenpath: "/tmp/token.txt" # /var/run/secrets/kubernetes.io/serviceaccount/token

|

||||

upstreams:

|

||||

myip.mydomain.com:

|

||||

paths:

|

||||

rate_limit: 10 # Per path rate limit have higher priority than global rate limit. If not set, the global rate limit will be used

|

||||

"/":

|

||||

to_https: false

|

||||

headers:

|

||||

- "X-Proxy-From:Gazan"

|

||||

- "X-Proxy-From:Aralez"

|

||||

servers: # List of upstreams HOST:PORT

|

||||

- "127.0.0.1:8000"

|

||||

- "127.0.0.2:8000"

|

||||

@@ -39,7 +52,7 @@ upstreams:

|

||||

to_https: true

|

||||

headers:

|

||||

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

||||

- "X-Proxy-From:Gazan"

|

||||

- "X-Proxy-From:Aralez"

|

||||

servers:

|

||||

- "127.0.0.1:8000"

|

||||

- "127.0.0.2:8000"

|

||||

@@ -52,9 +65,11 @@ upstreams:

|

||||

headers:

|

||||

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

||||

servers:

|

||||

- "192.168.1.1:8000"

|

||||

- "192.168.1.10:8000"

|

||||

- "127.0.0.1:8000"

|

||||

- "127.0.0.2:8000"

|

||||

- "127.0.0.3:8000"

|

||||

- "127.0.0.4:8000"

|

||||

- "127.0.0.4:8000"

|

||||

"/.well-known/acme-challenge":

|

||||

healthcheck: false

|

||||

servers:

|

||||

- "127.0.0.1:8001"

|

||||

@@ -1,9 +1,11 @@

|

||||

pub mod auth;

|

||||

pub mod consul;

|

||||

pub mod discovery;

|

||||

pub mod dnsclient;

|

||||

mod filewatch;

|

||||

pub mod healthcheck;

|

||||

pub mod jwt;

|

||||

pub mod kuber;

|

||||

pub mod metrics;

|

||||

pub mod parceyaml;

|

||||

pub mod structs;

|

||||

|

||||

@@ -1,6 +1,5 @@

|

||||

use crate::utils::parceyaml::load_configuration;

|

||||

use crate::utils::structs::{Configuration, ServiceMapping, UpstreamsDashMap};

|

||||

use crate::utils::tools::{clone_dashmap_into, compare_dashmaps};

|

||||

use crate::utils::structs::{Configuration, InnerMap, ServiceMapping, UpstreamsDashMap};

|

||||

use crate::utils::tools::{clone_dashmap_into, compare_dashmaps, print_upstreams};

|

||||

use dashmap::DashMap;

|

||||

use futures::channel::mpsc::Sender;

|

||||

use futures::SinkExt;

|

||||

@@ -11,6 +10,7 @@ use reqwest::header::{HeaderMap, HeaderValue};

|

||||

use serde::Deserialize;

|

||||

use std::collections::HashMap;

|

||||

use std::sync::atomic::AtomicUsize;

|

||||

use std::sync::Arc;

|

||||

use std::time::Duration;

|

||||

|

||||

#[derive(Debug, Deserialize)]

|

||||

@@ -27,59 +27,53 @@ struct TaggedAddress {

|

||||

port: u16,

|

||||

}

|

||||

|

||||

pub async fn start(fp: String, mut toreturn: Sender<Configuration>) {

|

||||

let config = load_configuration(fp.as_str(), "filepath");

|

||||

pub async fn start(mut toreturn: Sender<Configuration>, config: Arc<Configuration>) {

|

||||

let headers = DashMap::new();

|

||||

match config {

|

||||

Some(config) => {

|

||||

if config.typecfg.to_string() != "consul" {

|

||||

info!("Not running Consul discovery, requested type is: {}", config.typecfg);

|

||||

return;

|

||||

}

|

||||

info!("Consul Discovery is enabled : {}", config.typecfg);

|

||||

let consul = config.consul.clone();

|

||||

let prev_upstreams = UpstreamsDashMap::new();

|

||||

match consul {

|

||||

Some(consul) => {

|

||||

let servers = consul.servers.unwrap();

|

||||

info!("Consul Servers => {:?}", servers);

|

||||

let end = servers.len() - 1;

|

||||

|

||||

info!("Consul Discovery is enabled : {}", config.typecfg);

|

||||

let consul = config.consul.clone();

|

||||

let prev_upstreams = UpstreamsDashMap::new();

|

||||

match consul {

|

||||

Some(consul) => {

|

||||

let servers = consul.servers.unwrap();

|

||||

info!("Consul Servers => {:?}", servers);

|

||||

let end = servers.len();

|

||||

|

||||

loop {

|

||||

let num = rand::rng().random_range(1..end);

|

||||

headers.clear();

|

||||

for (k, v) in config.headers.clone() {

|

||||

headers.insert(k.to_string(), v);

|

||||

}

|

||||

let consul_data = servers.get(num).unwrap().to_string();

|

||||

let upstreams = consul_request(consul_data, consul.services.clone(), consul.token.clone());

|

||||

match upstreams.await {

|

||||

Some(upstreams) => {

|

||||

if !compare_dashmaps(&upstreams, &prev_upstreams) {

|

||||

let mut tosend: Configuration = Configuration {

|

||||

upstreams: Default::default(),

|

||||

headers: Default::default(),

|

||||

consul: None,

|

||||

typecfg: "".to_string(),

|

||||

extraparams: config.extraparams.clone(),

|

||||

};

|

||||

|

||||

clone_dashmap_into(&upstreams, &prev_upstreams);

|

||||

clone_dashmap_into(&upstreams, &tosend.upstreams);

|

||||

tosend.headers = headers.clone();

|

||||

tosend.extraparams.authentication = config.extraparams.authentication.clone();

|

||||

tosend.typecfg = config.typecfg.clone();

|

||||

tosend.consul = config.consul.clone();

|

||||

toreturn.send(tosend).await.unwrap();

|

||||

}

|

||||

}

|

||||

None => {}

|

||||

}

|

||||

sleep(Duration::from_secs(5)).await;

|

||||

}

|

||||

loop {

|

||||

let mut num = 0;

|

||||

if end > 0 {

|

||||

num = rand::rng().random_range(0..end);

|

||||

}

|

||||

None => {}

|

||||

headers.clear();

|

||||

for (k, v) in config.headers.clone() {

|

||||

headers.insert(k.to_string(), v);

|

||||

}

|

||||

let consul_data = servers.get(num).unwrap().to_string();

|

||||

let upstreams = consul_request(consul_data, consul.services.clone(), consul.token.clone());

|

||||

match upstreams.await {

|

||||

Some(upstreams) => {

|

||||

if !compare_dashmaps(&upstreams, &prev_upstreams) {

|

||||

let mut tosend: Configuration = Configuration {

|

||||

upstreams: Default::default(),

|

||||

headers: Default::default(),

|

||||

consul: None,

|

||||

kubernetes: None,

|

||||

typecfg: "".to_string(),

|

||||

extraparams: config.extraparams.clone(),

|

||||

};

|

||||

|

||||

clone_dashmap_into(&upstreams, &prev_upstreams);

|

||||

clone_dashmap_into(&upstreams, &tosend.upstreams);

|

||||

tosend.headers = headers.clone();

|

||||

tosend.extraparams.authentication = config.extraparams.authentication.clone();

|

||||

tosend.typecfg = config.typecfg.clone();

|

||||

tosend.consul = config.consul.clone();

|

||||

print_upstreams(&tosend.upstreams);

|

||||

toreturn.send(tosend).await.unwrap();

|

||||

}

|

||||

}

|

||||

None => {}

|

||||

}

|

||||

sleep(Duration::from_secs(5)).await;

|

||||

}

|

||||

}

|

||||

None => {}
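Review note on the server-index change in this hunk: the old code drew from `1..end` with `end = servers.len() - 1`, and the new code draws from `0..end` with `end = servers.len()`, guarded by `end > 0`. The sketch below only enumerates which indices each version can produce. The helper names are hypothetical, and it leaves out the `rand` call itself, which, as far as I know, panics when given an empty range.

```rust
use std::ops::Range;

/// Old selection: `end = len - 1`, indices drawn from `1..end`.
/// `saturating_sub` is used here only so the sketch does not underflow for `len == 0`.
fn old_candidates(len: usize) -> Range<usize> {
    1..len.saturating_sub(1)
}

/// New selection: `end = len`, indices drawn from `0..end`.
fn new_candidates(len: usize) -> Range<usize> {
    0..len
}
```

With three configured servers the old range contains only index 1, so the first and last servers were never queried. With one or two servers the old range is empty. The new range covers every index.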

|

||||

@@ -109,7 +103,7 @@ async fn consul_request(url: String, whitelist: Option<Vec<ServiceMapping>>, tok

|

||||

Some(upstreams)

|

||||

}

|

||||

|

||||

async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<String, (Vec<(String, u16, bool, bool, bool)>, AtomicUsize)>> {

|

||||

async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<String, (Vec<InnerMap>, AtomicUsize)>> {

|

||||

let client = reqwest::Client::new();

|

||||

let mut headers = HeaderMap::new();

|

||||

if let Some(token) = token {

|

||||

@@ -118,7 +112,7 @@ async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<Strin

|

||||

let to = Duration::from_secs(1);

|

||||

let u = client.get(url).timeout(to).send();

|

||||

let mut values = Vec::new();

|

||||

let upstreams: DashMap<String, (Vec<(String, u16, bool, bool, bool)>, AtomicUsize)> = DashMap::new();

|

||||

let upstreams: DashMap<String, (Vec<InnerMap>, AtomicUsize)> = DashMap::new();

|

||||

match u.await {

|

||||

Ok(r) => {

|

||||

let jason = r.json::<Vec<Service>>().await;

|

||||

@@ -127,7 +121,15 @@ async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<Strin

|

||||

for service in whitelist {

|

||||

let addr = service.tagged_addresses.get("lan_ipv4").unwrap().address.clone();

|

||||

let prt = service.tagged_addresses.get("lan_ipv4").unwrap().port.clone();

|

||||

let to_add = (addr, prt, false, false, false);

|

||||

let to_add = InnerMap {

|

||||

address: addr,

|

||||

port: prt,

|

||||

is_ssl: false,

|

||||

is_http2: false,

|

||||

to_https: false,

|

||||

rate_limit: None,

|

||||

healthcheck: None,

|

||||

};

|

||||

values.push(to_add);

|

||||

}

|

||||

}
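Review note on the tuple-to-struct change above: replacing `(String, u16, bool, bool, bool)` with `InnerMap` names each field at the construction site. The sketch below mirrors the field names shown in the diff. The types of `rate_limit` and `healthcheck` are not visible in this hunk, so `Option<isize>` and `Option<bool>` are assumptions, as is the helper `from_consul`.

```rust
/// Stand-in for `crate::utils::structs::InnerMap`; field names follow the diff,
/// the two `Option` payload types are assumed.
#[derive(Debug, Clone, PartialEq)]
struct InnerMap {
    address: String,
    port: u16,
    is_ssl: bool,
    is_http2: bool,
    to_https: bool,
    rate_limit: Option<isize>, // assumed type
    healthcheck: Option<bool>, // assumed type
}

/// Hypothetical helper: build an entry with the defaults used for Consul-discovered services.
fn from_consul(address: &str, port: u16) -> InnerMap {
    InnerMap {
        address: address.to_string(),
        port,
        is_ssl: false,
        is_http2: false,
        to_https: false,
        rate_limit: None,
        healthcheck: None,
    }
}
```

Compared with the positional tuple, a reader no longer has to remember which of the three booleans is which, and new fields such as `rate_limit` can be added without changing every type signature that mentions the tuple.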

|

||||

|

||||

@@ -1,28 +1,20 @@

|

||||

use crate::utils::consul;

|

||||

use crate::utils::filewatch;

|

||||

use crate::utils::structs::Configuration;

|

||||

use crate::utils::{consul, kuber};

|

||||

use crate::web::webserver;

|

||||

use async_trait::async_trait;

|

||||

use futures::channel::mpsc::Sender;

|

||||

use std::sync::Arc;

|

||||

|

||||

pub struct FromFileProvider {

|

||||

pub path: String,

|

||||

}

|

||||

pub struct APIUpstreamProvider {

|

||||

pub config_api_enabled: bool,

|

||||

pub address: String,

|

||||

pub masterkey: String,

|

||||

pub tls_address: Option<String>,

|

||||

pub tls_certificate: Option<String>,

|

||||

pub tls_key_file: Option<String>,

|

||||

}

|

||||

|

||||

pub struct ConsulProvider {

|

||||

pub path: String,

|

||||

}

|

||||

|

||||

#[async_trait]

|

||||

pub trait Discovery {

|

||||

async fn start(&self, tx: Sender<Configuration>);

|

||||

pub file_server_address: Option<String>,

|

||||

pub file_server_folder: Option<String>,

|

||||

}

|

||||

|

||||

#[async_trait]

|

||||

@@ -32,6 +24,23 @@ impl Discovery for APIUpstreamProvider {

|

||||

}

|

||||

}

|

||||

|

||||

pub struct FromFileProvider {

|

||||

pub path: String,

|

||||

}

|

||||

|

||||

pub struct ConsulProvider {

|

||||

pub config: Arc<Configuration>,

|

||||

}

|

||||

|

||||

pub struct KubernetesProvider {

|

||||

pub config: Arc<Configuration>,

|

||||

}

|

||||

|

||||

#[async_trait]

|

||||

pub trait Discovery {

|

||||

async fn start(&self, tx: Sender<Configuration>);

|

||||

}

|

||||

|

||||

#[async_trait]

|

||||

impl Discovery for FromFileProvider {

|

||||

async fn start(&self, tx: Sender<Configuration>) {

|

||||

@@ -42,6 +51,13 @@ impl Discovery for FromFileProvider {

|

||||

#[async_trait]

|

||||

impl Discovery for ConsulProvider {

|

||||

async fn start(&self, tx: Sender<Configuration>) {

|

||||

tokio::spawn(consul::start(self.path.clone(), tx.clone()));

|

||||

tokio::spawn(consul::start(tx.clone(), self.config.clone()));

|

||||

}

|

||||

}

|

||||

|

||||

#[async_trait]

|

||||

impl Discovery for KubernetesProvider {

|

||||

async fn start(&self, tx: Sender<Configuration>) {

|

||||

tokio::spawn(kuber::start(tx.clone(), self.config.clone()));

|

||||

}

|

||||

}
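Review note on the provider pattern above: each provider now holds an `Arc<Configuration>` and its `start` spawns a background task that pushes configurations through a channel. The sketch below is a simplified, synchronous analog using only the standard library (threads and `std::sync::mpsc` in place of `tokio`, `async_trait`, and the `futures` channel). The one-field `Configuration` and the body of `start` are stand-ins, not the project's code.

```rust
use std::sync::mpsc::Sender;
use std::sync::Arc;
use std::thread;

/// Stand-in for the real `Configuration`; only one field is kept for the sketch.
#[derive(Debug, Clone, PartialEq)]
struct Configuration {
    typecfg: String,
}

/// Synchronous analog of the `Discovery` trait from the diff.
trait Discovery {
    fn start(&self, tx: Sender<Configuration>);
}

struct KubernetesProvider {
    config: Arc<Configuration>,
}

impl Discovery for KubernetesProvider {
    fn start(&self, tx: Sender<Configuration>) {
        // Cloning the Arc is cheap: the worker shares the parsed config
        // instead of re-reading it from a file path.
        let config = Arc::clone(&self.config);
        thread::spawn(move || {
            // A real provider would loop and poll its backend; here we send once.
            let _ = tx.send((*config).clone());
        });
    }
}
```

Passing the shared `Arc<Configuration>` rather than a path string appears to be the point of the signature change in this commit: the configuration is parsed once and handed to every provider.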

|

||||

|

||||

158

src/utils/dnsclient.rs

Normal file

@@ -0,0 +1,158 @@

|

||||

/*

|

||||

use crate::utils::structs::InnerMap;

|

||||

use dashmap::DashMap;

|

||||

use hickory_client::client::{Client, ClientHandle};

|

||||

use hickory_client::proto::rr::{DNSClass, Name, RecordType};

|

||||

use hickory_client::proto::runtime::TokioRuntimeProvider;

|

||||

use hickory_client::proto::udp::UdpClientStream;

|

||||

use std::net::SocketAddr;

|

||||

use std::str::FromStr;

|

||||

use std::sync::atomic::AtomicUsize;

|

||||

use std::time::Duration;

|

||||

use tokio::sync::Mutex;

|

||||

|

||||

type DnsError = Box<dyn std::error::Error + Send + Sync + 'static>;

|

||||

|

||||

pub struct DnsClientPool {

|

||||

clients: Vec<Mutex<DnsClient>>,

|

||||

}

|

||||

|

||||

struct DnsClient {

|

||||

client: Client,

|

||||

}

|

||||

|

||||

pub async fn start2(mut toreturn: Sender<Configuration>, config: Arc<Configuration>) {

|

||||

let k8s = config.kubernetes.clone();

|

||||

match k8s {

|

||||

Some(k8s) => {

|

||||

let dnserver = k8s.servers.unwrap_or(vec!["127.0.0.1:53".to_string()]);

|

||||

let headers = DashMap::new();

|

||||

let end = dnserver.len() - 1;

|

||||

let mut num = 0;

|

||||

if end > 0 {

|

||||

num = rand::rng().random_range(0..end);

|

||||

}

|

||||

let srv = dnserver.get(num).unwrap().to_string();

|

||||

let pool = DnsClientPool::new(5, srv.clone()).await;

|

||||

let u = UpstreamsDashMap::new();

|

||||

if let Some(whitelist) = k8s.services {

|

||||

loop {

|

||||

let upstreams = UpstreamsDashMap::new();

|

||||

for service in whitelist.iter() {

|

||||

let ret = pool.query_srv(service.real.as_str(), srv.clone()).await;

|

||||

match ret {

|

||||

Ok(r) => {

|

||||

upstreams.insert(service.proxy.clone(), r);

|

||||

}

|

||||

Err(e) => eprintln!("DNS query failed for {:?}: {:?}", service, e),

|

||||

}

|

||||

}

|

||||

if !compare_dashmaps(&u, &upstreams) {

|

||||

headers.clear();

|

||||

for (k, v) in config.headers.clone() {

|

||||

headers.insert(k.to_string(), v);

|

||||

}

|

||||

|

||||

let mut tosend: Configuration = Configuration {

|

||||

upstreams: Default::default(),

|

||||

headers: Default::default(),

|

||||

consul: None,

|

||||

kubernetes: None,

|

||||

typecfg: "".to_string(),

|

||||

extraparams: config.extraparams.clone(),

|

||||

};

|

||||

|

||||

clone_dashmap_into(&upstreams, &u);

|

||||

clone_dashmap_into(&upstreams, &tosend.upstreams);

|

||||

tosend.headers = headers.clone();

|

||||