Mirror of https://github.com/sadoyan/aralez.git, synced 2026-04-30 14:58:38 +08:00

Compare commits

11 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | ece4fa20af | | |
| | 2ad3a059ab | | |
| | 6f012cee69 | | |
| | 51c88c8f7c | | |
| | f91bc41103 | | |
| | 21e1276ff5 | | |
| | 8463cdabbc | | |
| | d0e4b52ce6 | | |
| | b552d24497 | | |
| | 2e33d692bb | | |
| | e586967830 | | |

1

.gitignore

vendored


@@ -8,6 +8,7 @@

|

|||||||

/target/

|

/target/

|

||||||

*.iml

|

*.iml

|

||||||

.idea/

|

.idea/

|

||||||

|

.etc/

|

||||||

*.ipr

|

*.ipr

|

||||||

*.iws

|

*.iws

|

||||||

/out/

|

/out/

|

||||||

|

|||||||

38

Cargo.lock

generated


@@ -128,9 +128,11 @@ dependencies = [

|

|||||||

"log",

|

"log",

|

||||||

"mimalloc",

|

"mimalloc",

|

||||||

"notify",

|

"notify",

|

||||||

|

"once_cell",

|

||||||

"pingora",

|

"pingora",

|

||||||

"pingora-core",

|

"pingora-core",

|

||||||

"pingora-http",

|

"pingora-http",

|

||||||

|

"pingora-limits",

|

||||||

"pingora-proxy",

|

"pingora-proxy",

|

||||||

"prometheus 0.14.0",

|

"prometheus 0.14.0",

|

||||||

"rand 0.9.1",

|

"rand 0.9.1",

|

||||||

@@ -141,6 +143,7 @@ dependencies = [

|

|||||||

"sha2",

|

"sha2",

|

||||||

"tokio",

|

"tokio",

|

||||||

"tonic",

|

"tonic",

|

||||||

|

"tower-http",

|

||||||

"urlencoding",

|

"urlencoding",

|

||||||

"x509-parser",

|

"x509-parser",

|

||||||

]

|

]

|

||||||

@@ -1078,6 +1081,12 @@ dependencies = [

|

|||||||

"pin-project-lite",

|

"pin-project-lite",

|

||||||

]

|

]

|

||||||

|

|

||||||

|

[[package]]

|

||||||

|

name = "http-range-header"

|

||||||

|

version = "0.4.2"

|

||||||

|

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||||

|

checksum = "9171a2ea8a68358193d15dd5d70c1c10a2afc3e7e4c5bc92bc9f025cebd7359c"

|

||||||

|

|

||||||

[[package]]

|

[[package]]

|

||||||

name = "httparse"

|

name = "httparse"

|

||||||

version = "1.9.5"

|

version = "1.9.5"

|

||||||

@@ -1617,6 +1626,16 @@ version = "0.3.17"

|

|||||||

source = "registry+https://github.com/rust-lang/crates.io-index"

|

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||||

checksum = "6877bb514081ee2a7ff5ef9de3281f14a4dd4bceac4c09388074a6b5df8a139a"

|

checksum = "6877bb514081ee2a7ff5ef9de3281f14a4dd4bceac4c09388074a6b5df8a139a"

|

||||||

|

|

||||||

|

[[package]]

|

||||||

|

name = "mime_guess"

|

||||||

|

version = "2.0.5"

|

||||||

|

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||||

|

checksum = "f7c44f8e672c00fe5308fa235f821cb4198414e1c77935c1ab6948d3fd78550e"

|

||||||

|

dependencies = [

|

||||||

|

"mime",

|

||||||

|

"unicase",

|

||||||

|

]

|

||||||

|

|

||||||

[[package]]

|

[[package]]

|

||||||

name = "minimal-lexical"

|

name = "minimal-lexical"

|

||||||

version = "0.2.1"

|

version = "0.2.1"

|

||||||

@@ -2068,6 +2087,15 @@ dependencies = [

|

|||||||

"crc32fast",

|

"crc32fast",

|

||||||

]

|

]

|

||||||

|

|

||||||

|

[[package]]

|

||||||

|

name = "pingora-limits"

|

||||||

|

version = "0.5.0"

|

||||||

|

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||||

|

checksum = "a719a8cb5558ca06bd6076c97b8905d500ea556da89e132ba53d4272844f95b9"

|

||||||

|

dependencies = [

|

||||||

|

"ahash",

|

||||||

|

]

|

||||||

|

|

||||||

[[package]]

|

[[package]]

|

||||||

name = "pingora-load-balancing"

|

name = "pingora-load-balancing"

|

||||||

version = "0.5.0"

|

version = "0.5.0"

|

||||||

@@ -3167,14 +3195,24 @@ checksum = "adc82fd73de2a9722ac5da747f12383d2bfdb93591ee6c58486e0097890f05f2"

|

|||||||

dependencies = [

|

dependencies = [

|

||||||

"bitflags 2.8.0",

|

"bitflags 2.8.0",

|

||||||

"bytes",

|

"bytes",

|

||||||

|

"futures-core",

|

||||||

"futures-util",

|

"futures-util",

|

||||||

"http",

|

"http",

|

||||||

"http-body",

|

"http-body",

|

||||||

|

"http-body-util",

|

||||||

|

"http-range-header",

|

||||||

|

"httpdate",

|

||||||

"iri-string",

|

"iri-string",

|

||||||

|

"mime",

|

||||||

|

"mime_guess",

|

||||||

|

"percent-encoding",

|

||||||

"pin-project-lite",

|

"pin-project-lite",

|

||||||

|

"tokio",

|

||||||

|

"tokio-util",

|

||||||

"tower",

|

"tower",

|

||||||

"tower-layer",

|

"tower-layer",

|

||||||

"tower-service",

|

"tower-service",

|

||||||

|

"tracing",

|

||||||

]

|

]

|

||||||

|

|

||||||

[[package]]

|

[[package]]

|

||||||

|

|||||||

@@ -19,6 +19,8 @@ dashmap = "7.0.0-rc2"

|

|||||||

pingora-core = "0.5.0"

|

pingora-core = "0.5.0"

|

||||||

pingora-proxy = "0.5.0"

|

pingora-proxy = "0.5.0"

|

||||||

pingora-http = "0.5.0"

|

pingora-http = "0.5.0"

|

||||||

|

pingora-limits = "0.5.0"

|

||||||

|

#pingora-pool = "0.5.0"

|

||||||

async-trait = "0.1.88"

|

async-trait = "0.1.88"

|

||||||

env_logger = "0.11.8"

|

env_logger = "0.11.8"

|

||||||

log = "0.4.27"

|

log = "0.4.27"

|

||||||

@@ -46,4 +48,8 @@ lazy_static = "1.5.0"

|

|||||||

#openssl = "0.10.73"

|

#openssl = "0.10.73"

|

||||||

x509-parser = "0.17.0"

|

x509-parser = "0.17.0"

|

||||||

rustls-pemfile = "2.2.0"

|

rustls-pemfile = "2.2.0"

|

||||||

|

tower-http = { version = "0.6.6", features = ["fs"] }

|

||||||

|

once_cell = "1.20.2"

|

||||||

|

#moka = { version = "0.12.10", features = ["sync"] }

|

||||||

|

|

||||||

|

|

||||||

|

|||||||

56

README.md


@@ -13,7 +13,11 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers

|

|||||||

|

|

||||||

- **Dynamic Config Reloads** — Upstreams can be updated live via API, no restart required.

|

- **Dynamic Config Reloads** — Upstreams can be updated live via API, no restart required.

|

||||||

- **TLS Termination** — Built-in OpenSSL support.

|

- **TLS Termination** — Built-in OpenSSL support.

|

||||||

|

- **Automatic load of certificates** — Automatically reads and loads certificates from a folder, without a restart.

|

||||||

- **Upstreams TLS detection** — Aralez will automatically detect if upstreams uses secure connection.

|

- **Upstreams TLS detection** — Aralez will automatically detect if upstreams uses secure connection.

|

||||||

|

- **Built-in rate limiter** — Limit requests to the server by setting an upper limit on requests per second, per virtualhost.

|

||||||

|

- **Global rate limiter** — Set rate limit for all virtualhosts.

|

||||||

|

- **Per path rate limiter** — Set rate limit for specific paths. Path limits will override global limits.

|

||||||

- **Authentication** — Supports Basic Auth, API tokens, and JWT verification.

|

- **Authentication** — Supports Basic Auth, API tokens, and JWT verification.

|

||||||

- **Basic Auth**

|

- **Basic Auth**

|

||||||

- **API Key** via `x-api-key` header

|

- **API Key** via `x-api-key` header

|

||||||

@@ -24,6 +28,7 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers

|

|||||||

- Failover with health checks

|

- Failover with health checks

|

||||||

- Sticky sessions via cookies

|

- Sticky sessions via cookies

|

||||||

- **Unified Port** — Serve HTTP and WebSocket traffic over the same connection.

|

- **Unified Port** — Serve HTTP and WebSocket traffic over the same connection.

|

||||||

|

- **Built-in file server** — A minimalistic built-in file server for serving static files; add it as an upstream for public access.

|

||||||

- **Memory Safe** — Created purely on Rust.

|

- **Memory Safe** — Created purely on Rust.

|

||||||

- **High Performance** — Built with [Pingora](https://github.com/cloudflare/pingora) and tokio for async I/O.

|

- **High Performance** — Built with [Pingora](https://github.com/cloudflare/pingora) and tokio for async I/O.

|

||||||

|

|

||||||

@@ -83,6 +88,9 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers

|

|||||||

| **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |

|

| **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |

|

||||||

| **hc_interval** | 2 | Interval for health checks in seconds |

|

| **hc_interval** | 2 | Interval for health checks in seconds |

|

||||||

| **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |

|

| **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |

|

||||||

|

| **file_server_folder** | /some/local/folder | Optional, local folder to serve |

|

||||||

|

| **file_server_address** | 127.0.0.1:3002 | Optional, Local address for file server. Can set as upstream for public access |

|

||||||

|

| **config_api_enabled** | true | Boolean to enable/disable remote config push capability |

|

||||||

|

|

||||||

### 🌐 `upstreams.yaml`

|

### 🌐 `upstreams.yaml`

|

||||||

|

|

||||||

@@ -110,6 +118,24 @@ File names:

|

|||||||

| `aralez-aarch64-musl.gz` | Static Linux ARM64 binary, without any system dependency |

|

| `aralez-aarch64-musl.gz` | Static Linux ARM64 binary, without any system dependency |

|

||||||

| `aralez-aarch64-glibc.gz` | Dynamic Linux ARM64 binary, with minimal system dependencies |

|

| `aralez-aarch64-glibc.gz` | Dynamic Linux ARM64 binary, with minimal system dependencies |

|

||||||

|

|

||||||

|

## 💡 Note

|

||||||

|

|

||||||

|

In general, **glibc** builds run faster but have a few basic system dependencies, for example:

|

||||||

|

|

||||||

|

```

|

||||||

|

linux-vdso.so.1 (0x00007ffeea33b000)

|

||||||

|

libgcc_s.so.1 => /lib/x86_64-linux-gnu/libgcc_s.so.1 (0x00007f09e7377000)

|

||||||

|

libm.so.6 => /lib/x86_64-linux-gnu/libm.so.6 (0x00007f09e6320000)

|

||||||

|

libc.so.6 => /lib/x86_64-linux-gnu/libc.so.6 (0x00007f09e613f000)

|

||||||

|

/lib64/ld-linux-x86-64.so.2 (0x00007f09e73b1000)

|

||||||

|

```

|

||||||

|

|

||||||

|

These are common to all Linux systems, so the binary should work on almost any Linux system.

|

||||||

|

|

||||||

|

**musl** builds are 100% portable, statically compiled binaries and have zero system dependencies.

|

||||||

|

In general, musl builds are slightly slower.

|

||||||

|

The most intensive tests show 107k-110k requests per second on **glibc** binaries against 97k-100k on **musl** ones.

|

||||||

|

|

||||||

## 🔌 Running the Proxy

|

## 🔌 Running the Proxy

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

@@ -142,6 +168,7 @@ A sample `upstreams.yaml` entry:

|

|||||||

provider: "file"

|

provider: "file"

|

||||||

sticky_sessions: false

|

sticky_sessions: false

|

||||||

to_https: false

|

to_https: false

|

||||||

|

rate_limit: 10

|

||||||

headers:

|

headers:

|

||||||

- "Access-Control-Allow-Origin:*"

|

- "Access-Control-Allow-Origin:*"

|

||||||

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

||||||

@@ -152,6 +179,7 @@ authorization:

|

|||||||

myhost.mydomain.com:

|

myhost.mydomain.com:

|

||||||

paths:

|

paths:

|

||||||

"/":

|

"/":

|

||||||

|

rate_limit: 20

|

||||||

to_https: false

|

to_https: false

|

||||||

headers:

|

headers:

|

||||||

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

||||||

@@ -172,13 +200,18 @@ myhost.mydomain.com:

|

|||||||

|

|

||||||

- Sticky sessions are disabled globally. This setting applies to all upstreams. If enabled all requests will be 301 redirected to HTTPS.

|

- Sticky sessions are disabled globally. This setting applies to all upstreams. If enabled all requests will be 301 redirected to HTTPS.

|

||||||

- HTTP to HTTPS redirect disabled globally, but can be overridden by `to_https` setting per upstream.

|

- HTTP to HTTPS redirect disabled globally, but can be overridden by `to_https` setting per upstream.

|

||||||

|

- Requests to each hosted domain will be limited to 10 requests per second per virtualhost.

|

||||||

|

- Request limits are calculated per requester IP plus requested virtualhost.

|

||||||

|

- If the requester exceeds the limit, it will receive a `429 Too Many Requests` error.

|

||||||

|

- Optional. Rate limiter will be disabled if the parameter is entirely removed from config.

|

||||||

|

- Requests to `myhost.mydomain.com/` will be limited to 20 requests per second.

|

||||||

- Requests to `myhost.mydomain.com/` will be proxied to `127.0.0.1` and `127.0.0.2`.

|

- Requests to `myhost.mydomain.com/` will be proxied to `127.0.0.1` and `127.0.0.2`.

|

||||||

- Plain HTTP to `myhost.mydomain.com/foo` will get 301 redirect to configured TLS port of Aralez.

|

- Plain HTTP to `myhost.mydomain.com/foo` will get 301 redirect to configured TLS port of Aralez.

|

||||||

- Requests to `myhost.mydomain.com/foo` will be proxied to `127.0.0.4` and `127.0.0.5`.

|

- Requests to `myhost.mydomain.com/foo` will be proxied to `127.0.0.4` and `127.0.0.5`.

|

||||||

- SSL/TLS for upstreams is detected automatically, no need to set any config parameter.

|

- SSL/TLS for upstreams is detected automatically, no need to set any config parameter.

|

||||||

- Assuming the `127.0.0.5:8443` is SSL protected. The inner traffic will use TLS.

|

- Assuming the `127.0.0.5:8443` is SSL protected. The inner traffic will use TLS.

|

||||||

- Self signed certificates are silently accepted.

|

- Self-signed certificates are silently accepted.

|

||||||

- Global headers (CORS for this case) will be injected to all upstreams

|

- Global headers (CORS for this case) will be injected to all upstreams.

|

||||||

- Additional headers will be injected into the request for `myhost.mydomain.com`.

|

- Additional headers will be injected into the request for `myhost.mydomain.com`.

|

||||||

- You can choose any path, deep nested paths are supported, the best match chosen.

|

- You can choose any path, deep nested paths are supported, the best match chosen.

|

||||||

- All requests to servers will require JWT token authentication (You can comment out the authorization to disable it),

|

- All requests to servers will require JWT token authentication (You can comment out the authorization to disable it),

|

||||||

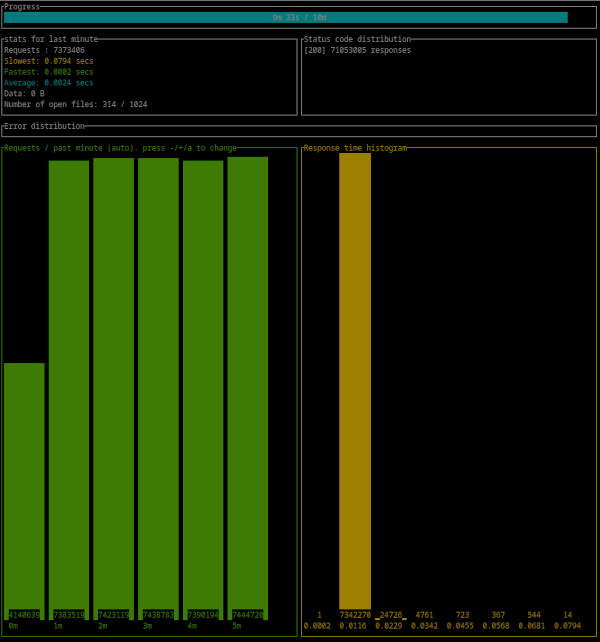
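The rate-limit notes above (a counter per requester IP plus virtualhost, `429 Too Many Requests` once the per-second limit is exceeded) can be illustrated with a small sketch. This is not Aralez's implementation: the diff only shows that the `pingora-limits` crate is pulled in, and does not show how it is used. The `FixedWindowLimiter` type, its `check` method and the simple fixed one-second window below are hypothetical names and simplifications chosen for illustration, using only the standard library.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

/// Hypothetical fixed-window limiter keyed by (requester IP, virtualhost).
/// A simplification for illustration, not the limiter Aralez ships.
struct FixedWindowLimiter {
    limit: u32,
    window: Duration,
    counters: HashMap<(String, String), (Instant, u32)>,
}

impl FixedWindowLimiter {
    /// `limit` plays the role of the `rate_limit` value: requests per second.
    fn new(limit: u32) -> Self {
        Self {
            limit,
            window: Duration::from_secs(1),
            counters: HashMap::new(),
        }
    }

    /// Returns the status a proxy could answer with:
    /// 200 when the request is within the limit, 429 when it is over.
    fn check(&mut self, ip: &str, host: &str, now: Instant) -> u16 {
        let entry = self
            .counters
            .entry((ip.to_string(), host.to_string()))
            .or_insert((now, 0));
        // Start a fresh window once the previous one has elapsed.
        if now.duration_since(entry.0) >= self.window {
            *entry = (now, 0);
        }
        entry.1 += 1;
        if entry.1 > self.limit {
            429
        } else {
            200
        }
    }
}
```

With `FixedWindowLimiter::new(10)`, the eleventh call to `check` for the same IP and host inside one second returns 429, while the same IP asking for a different virtualhost is counted separately.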

@@ -442,4 +475,21 @@ Error distribution:

|

|||||||

[228] aborted due to deadline

|

[228] aborted due to deadline

|

||||||

```

|

```

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

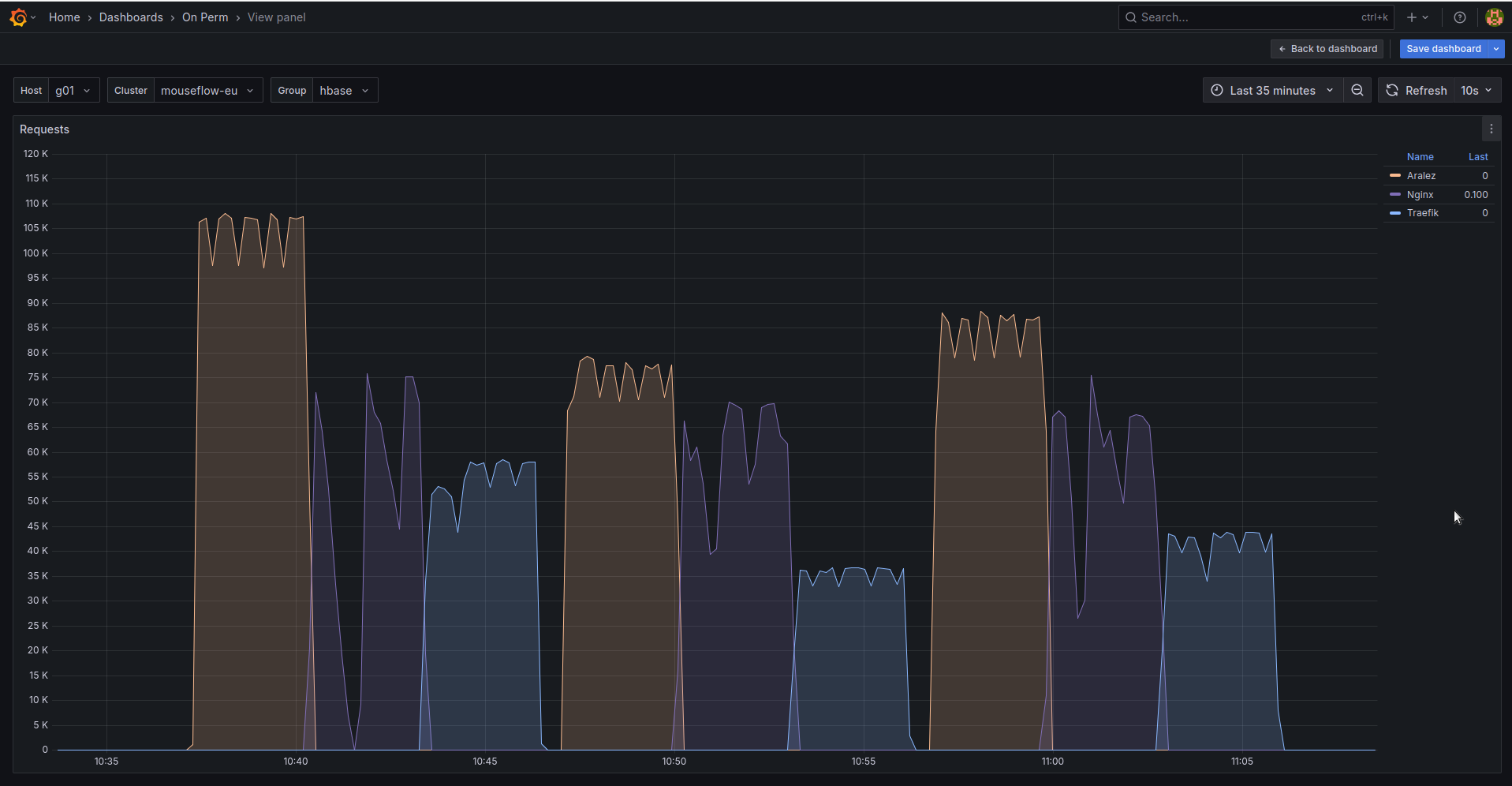

## 🚀 Aralez, Nginx, Traefik performance benchmark

|

||||||

|

|

||||||

|

This benchmark was run on 4 servers with an Intel(R) Xeon(R) E-2174G CPU @ 3.80GHz and 64 GB RAM.

|

||||||

|

|

||||||

|

1. One server runs Aralez, Traefik, and Nginx on different ports, tuned as much as I could.

|

||||||

|

2. 3x upstream servers running Nginx, replying with dummy JSON hardcoded in the config file for maximum performance.

|

||||||

|

|

||||||

|

All servers are connected to the same switch with a 1GB port in a datacenter, not a home lab. The results:

|

||||||

|

|

||||||

|

|

||||||

|

The results show requests per second handled by the load balancer. You can see 3 batches with 800 concurrent users.

|

||||||

|

|

||||||

|

1. Requests via HTTP/1.1 to a plain-text endpoint.

|

||||||

|

2. Requests via HTTP/2 to an SSL endpoint.

|

||||||

|

3. Mixed workload with plain HTTP/1.1 and HTTP/2 SSL.

|

||||||

|

|

||||||

|

|||||||

BIN

assets/bench.png

Normal file


Binary file not shown.

|

After Width: | Height: | Size: 160 KiB |

@@ -1,5 +1,5 @@

|

|||||||

# Main configuration file , applied on startup

|

# Main configuration file, applied on startup

|

||||||

threads: 12 # Nubber of daemon threads default setting

|

threads: 12 # Number of daemon threads default setting

|

||||||

#user: pastor # Username for running aralez after dropping root privileges, requires program to start as root

|

#user: pastor # Username for running aralez after dropping root privileges, requires program to start as root

|

||||||

#group: pastor # Group for running aralez after dropping root privileges, requires program to start as root

|

#group: pastor # Group for running aralez after dropping root privileges, requires program to start as root

|

||||||

daemon: false # Run in background

|

daemon: false # Run in background

|

||||||

@@ -7,14 +7,17 @@ upstream_keepalive_pool_size: 500 # Pool size for upstream keepalive connections

|

|||||||

pid_file: /tmp/aralez.pid # Path to PID file

|

pid_file: /tmp/aralez.pid # Path to PID file

|

||||||

error_log: /tmp/aralez_err.log # Path to error log

|

error_log: /tmp/aralez_err.log # Path to error log

|

||||||

upgrade_sock: /tmp/aralez.sock # Path to socket file

|

upgrade_sock: /tmp/aralez.sock # Path to socket file

|

||||||

|

config_api_enabled: true # Boolean to enable/disable remote config push capability.

|

||||||

config_address: 0.0.0.0:3000 # HTTP API address for pushing upstreams.yaml from remote location

|

config_address: 0.0.0.0:3000 # HTTP API address for pushing upstreams.yaml from remote location

|

||||||

config_tls_address: 0.0.0.0:3001 # HTTP TLS API address for pushing upstreams.yaml from remote location

|

config_tls_address: 0.0.0.0:3001 # HTTP TLS API address for pushing upstreams.yaml from remote location

|

||||||

config_tls_certificate: etc/server.crt # Mandatory if config_tls_address is set

|

config_tls_certificate: /opt/Rust/Projects/asyncweb/etc/server.crt # Mandatory if config_tls_address is set

|

||||||

config_tls_key_file: etc/key.pem # Mandatory if config_tls_address is set

|

config_tls_key_file: /opt/Rust/Projects/asyncweb/etc/key.pem # Mandatory if config_tls_address is set

|

||||||

proxy_address_http: 0.0.0.0:6193 # Proxy HTTP bind address

|

proxy_address_http: 0.0.0.0:6193 # Proxy HTTP bind address

|

||||||

proxy_address_tls: 0.0.0.0:6194 # Optional, Proxy TLS bind address

|

proxy_address_tls: 0.0.0.0:6194 # Optional, Proxy TLS bind address

|

||||||

proxy_certificates: etc/yoyo # Mandatory if proxy_address_tls set, should contain certificate and key files strictly in a format {NAME}.crt, {NAME}.key.

|

proxy_certificates: /opt/Rust/Projects/asyncweb/etc/yoyo # Mandatory if proxy_address_tls set, should contain certificate and key files strictly in a format {NAME}.crt, {NAME}.key.

|

||||||

upstreams_conf: etc/upstreams.yaml # the location of upstreams file

|

upstreams_conf: /opt/Rust/Projects/asyncweb/etc/upstreams.yaml # the location of upstreams file

|

||||||

|

file_server_folder: /opt/storage # Optional, local folder to serve

|

||||||

|

file_server_address: 127.0.0.1:3002 # Optional, Local address for file server. Can set as upstream for public access.

|

||||||

log_level: info # info, warn, error, debug, trace, off

|

log_level: info # info, warn, error, debug, trace, off

|

||||||

hc_method: HEAD # Healthcheck method (HEAD, GET, POST are supported) UPPERCASE

|

hc_method: HEAD # Healthcheck method (HEAD, GET, POST are supported) UPPERCASE

|

||||||

hc_interval: 2 #Interval for health checks in seconds

|

hc_interval: 2 #Interval for health checks in seconds

|

||||||

|

|||||||
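The `proxy_certificates` comment above requires files named strictly `{NAME}.crt` and `{NAME}.key`. As a rough illustration of that naming rule, the sketch below pairs file names by their shared `{NAME}` and skips anything without a partner. The function `pair_certificates` is a hypothetical helper written for this note. It is not taken from the Aralez source and does no certificate parsing or folder watching.

```rust
use std::collections::BTreeMap;
use std::path::Path;

/// Hypothetical helper: pairs `{NAME}.crt` with `{NAME}.key`.
/// Returns (name, certificate file, key file) for complete pairs only,
/// sorted by name. Files with other extensions are ignored.
fn pair_certificates(files: &[&str]) -> Vec<(String, String, String)> {
    let mut seen: BTreeMap<String, (Option<String>, Option<String>)> = BTreeMap::new();
    for file in files {
        let path = Path::new(file);
        let stem = match path.file_stem().and_then(|s| s.to_str()) {
            Some(s) => s,
            None => continue,
        };
        let ext = match path.extension().and_then(|e| e.to_str()) {
            Some(e) => e,
            None => continue,
        };
        let slot = seen.entry(stem.to_string()).or_default();
        match ext {
            "crt" => slot.0 = Some(file.to_string()),
            "key" => slot.1 = Some(file.to_string()),
            _ => {}
        }
    }
    // Keep only names for which both halves were found.
    seen.into_iter()
        .filter_map(|(name, (crt, key))| Some((name, crt?, key?)))
        .collect()
}
```

Given `a.example.com.crt`, `a.example.com.key` and a lone `orphan.crt`, only the `a.example.com` pair is returned.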

@@ -2,6 +2,7 @@

|

|||||||

provider: "file" # consul

|

provider: "file" # consul

|

||||||

sticky_sessions: false

|

sticky_sessions: false

|

||||||

to_ssl: false

|

to_ssl: false

|

||||||

|

#rate_limit: 100

|

||||||

headers:

|

headers:

|

||||||

- "Access-Control-Allow-Origin:*"

|

- "Access-Control-Allow-Origin:*"

|

||||||

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

- "Access-Control-Allow-Methods:POST, GET, OPTIONS"

|

||||||

@@ -16,9 +17,9 @@ authorization:

|

|||||||

# creds: "5ecbf799-1343-4e94-a9b5-e278af5cd313-56b45249-1839-4008-a450-a60dc76d2bae"

|

# creds: "5ecbf799-1343-4e94-a9b5-e278af5cd313-56b45249-1839-4008-a450-a60dc76d2bae"

|

||||||

consul: # If the provider is consul. Otherwise, ignored.

|

consul: # If the provider is consul. Otherwise, ignored.

|

||||||

servers:

|

servers:

|

||||||

- "http://master1:8500"

|

- "http://consul1:8500"

|

||||||

- "http://192.168.22.1:8500"

|

- "http://consul2:8500"

|

||||||

- "http://master1.foo.local:8500"

|

- "http://consul3:8500"

|

||||||

services: # proxy: The hostname to access the proxy server, real : The real service name in Consul database.

|

services: # proxy: The hostname to access the proxy server, real : The real service name in Consul database.

|

||||||

- proxy: "proxy-frontend-dev-frontend-srv"

|

- proxy: "proxy-frontend-dev-frontend-srv"

|

||||||

real: "frontend-dev-frontend-srv"

|

real: "frontend-dev-frontend-srv"

|

||||||

@@ -26,10 +27,11 @@ consul: # If the provider is consul. Otherwise, ignored.

|

|||||||

upstreams:

|

upstreams:

|

||||||

myip.mydomain.com:

|

myip.mydomain.com:

|

||||||

paths:

|

paths:

|

||||||

|

rate_limit: 10 # Per path rate limit has higher priority than global rate limit. If not set, the global rate limit will be used

|

||||||

"/":

|

"/":

|

||||||

to_https: false

|

to_https: false

|

||||||

headers:

|

headers:

|

||||||

- "X-Proxy-From:Gazan"

|

- "X-Proxy-From:Aralez"

|

||||||

servers: # List of upstreams HOST:PORT

|

servers: # List of upstreams HOST:PORT

|

||||||

- "127.0.0.1:8000"

|

- "127.0.0.1:8000"

|

||||||

- "127.0.0.2:8000"

|

- "127.0.0.2:8000"

|

||||||

@@ -39,7 +41,7 @@ upstreams:

|

|||||||

to_https: true

|

to_https: true

|

||||||

headers:

|

headers:

|

||||||

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

- "X-Some-Thing:Yaaaaaaaaaaaaaaa"

|

||||||

- "X-Proxy-From:Gazan"

|

- "X-Proxy-From:Aralez"

|

||||||

servers:

|

servers:

|

||||||

- "127.0.0.1:8000"

|

- "127.0.0.1:8000"

|

||||||

- "127.0.0.2:8000"

|

- "127.0.0.2:8000"

|

||||||

|

|||||||
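The precedence described in the `rate_limit` comments (a per-path limit wins over the global one, the global one is the fallback, and no limit at all disables the limiter) reduces to a single `Option` combination. The function name `effective_rate_limit` and the `u32` type below are assumptions for illustration. The diff does not show how Aralez resolves the value internally.

```rust
/// Hypothetical resolution of the limit that applies to one path.
/// `None` means the rate limiter is disabled for that path.
fn effective_rate_limit(global: Option<u32>, per_path: Option<u32>) -> Option<u32> {
    // The per-path value takes priority; otherwise fall back to the global one.
    per_path.or(global)
}
```

For the README sample this gives 20 for `myhost.mydomain.com/` (path limit 20 over global 10) and 10 for any path that sets no limit of its own.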

@@ -1,5 +1,5 @@

|

|||||||

use crate::utils::parceyaml::load_configuration;

|

use crate::utils::parceyaml::load_configuration;

|

||||||

use crate::utils::structs::{Configuration, ServiceMapping, UpstreamsDashMap};

|

use crate::utils::structs::{Configuration, InnerMap, ServiceMapping, UpstreamsDashMap};

|

||||||

use crate::utils::tools::{clone_dashmap_into, compare_dashmaps};

|

use crate::utils::tools::{clone_dashmap_into, compare_dashmaps};

|

||||||

use dashmap::DashMap;

|

use dashmap::DashMap;

|

||||||

use futures::channel::mpsc::Sender;

|

use futures::channel::mpsc::Sender;

|

||||||

@@ -109,7 +109,7 @@ async fn consul_request(url: String, whitelist: Option<Vec<ServiceMapping>>, tok

|

|||||||

Some(upstreams)

|

Some(upstreams)

|

||||||

}

|

}

|

||||||

|

|

||||||

async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<String, (Vec<(String, u16, bool, bool, bool)>, AtomicUsize)>> {

|

async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<String, (Vec<InnerMap>, AtomicUsize)>> {

|

||||||

let client = reqwest::Client::new();

|

let client = reqwest::Client::new();

|

||||||

let mut headers = HeaderMap::new();

|

let mut headers = HeaderMap::new();

|

||||||

if let Some(token) = token {

|

if let Some(token) = token {

|

||||||

@@ -118,7 +118,7 @@ async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<Strin

|

|||||||

let to = Duration::from_secs(1);

|

let to = Duration::from_secs(1);

|

||||||

let u = client.get(url).timeout(to).send();

|

let u = client.get(url).timeout(to).send();

|

||||||

let mut values = Vec::new();

|

let mut values = Vec::new();

|

||||||

let upstreams: DashMap<String, (Vec<(String, u16, bool, bool, bool)>, AtomicUsize)> = DashMap::new();

|

let upstreams: DashMap<String, (Vec<InnerMap>, AtomicUsize)> = DashMap::new();

|

||||||

match u.await {

|

match u.await {

|

||||||

Ok(r) => {

|

Ok(r) => {

|

||||||

let jason = r.json::<Vec<Service>>().await;

|

let jason = r.json::<Vec<Service>>().await;

|

||||||

@@ -127,7 +127,14 @@ async fn get_by_http(url: String, token: Option<String>) -> Option<DashMap<Strin

|

|||||||

for service in whitelist {

|

for service in whitelist {

|

||||||

let addr = service.tagged_addresses.get("lan_ipv4").unwrap().address.clone();

|

let addr = service.tagged_addresses.get("lan_ipv4").unwrap().address.clone();

|

||||||

let prt = service.tagged_addresses.get("lan_ipv4").unwrap().port.clone();

|

let prt = service.tagged_addresses.get("lan_ipv4").unwrap().port.clone();

|

||||||

let to_add = (addr, prt, false, false, false);

|

let to_add = InnerMap {

|

||||||

|

address: addr,

|

||||||

|

port: prt,

|

||||||

|

is_ssl: false,

|

||||||

|

is_http2: false,

|

||||||

|

to_https: false,

|

||||||

|

rate_limit: None,

|

||||||

|

};

|

||||||

values.push(to_add);

|

values.push(to_add);

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|||||||
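This change replaces the positional tuple `(String, u16, bool, bool, bool)` with a named `InnerMap` struct. The struct definition itself is not part of the hunks shown here, so the sketch below reconstructs a plausible shape from the field names used in the diff (`address`, `port`, `is_ssl`, `is_http2`, `to_https`, `rate_limit`). The `Option<u32>` type for `rate_limit`, the derives and the `From` conversion are assumptions. Only the field names and the existence of `InnerMap::new()` come from the source.

```rust
/// Assumed shape of `InnerMap`, reconstructed from the fields used in the diff.
#[derive(Clone, Debug, PartialEq)]
pub struct InnerMap {
    pub address: String,
    pub port: u16,
    pub is_ssl: bool,
    pub is_http2: bool,
    pub to_https: bool,
    pub rate_limit: Option<u32>, // integer type is an assumption
}

impl InnerMap {
    /// Empty placeholder, matching the old `("".to_string(), 0, false, false, false)`.
    pub fn new() -> Self {
        Self {
            address: String::new(),
            port: 0,
            is_ssl: false,
            is_http2: false,
            to_https: false,
            rate_limit: None,
        }
    }
}

/// Hypothetical bridge from the old tuple layout:
/// (address, port, is_ssl, is_http2, to_https).
impl From<(String, u16, bool, bool, bool)> for InnerMap {
    fn from(t: (String, u16, bool, bool, bool)) -> Self {
        Self {
            address: t.0,
            port: t.1,
            is_ssl: t.2,
            is_http2: t.3,
            to_https: t.4,
            rate_limit: None,
        }
    }
}
```

Named fields make call sites such as `k.1.address` and `k.1.to_https` self-describing, where the tuple version relied on remembering which of three booleans was which.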

@@ -9,11 +9,14 @@ pub struct FromFileProvider {

|

|||||||

pub path: String,

|

pub path: String,

|

||||||

}

|

}

|

||||||

pub struct APIUpstreamProvider {

|

pub struct APIUpstreamProvider {

|

||||||

|

pub config_api_enabled: bool,

|

||||||

pub address: String,

|

pub address: String,

|

||||||

pub masterkey: String,

|

pub masterkey: String,

|

||||||

pub tls_address: Option<String>,

|

pub tls_address: Option<String>,

|

||||||

pub tls_certificate: Option<String>,

|

pub tls_certificate: Option<String>,

|

||||||

pub tls_key_file: Option<String>,

|

pub tls_key_file: Option<String>,

|

||||||

|

pub file_server_address: Option<String>,

|

||||||

|

pub file_server_folder: Option<String>,

|

||||||

}

|

}

|

||||||

|

|

||||||

pub struct ConsulProvider {

|

pub struct ConsulProvider {

|

||||||

|

|||||||

@@ -1,4 +1,4 @@

|

|||||||

use crate::utils::structs::{UpstreamsDashMap, UpstreamsIdMap};

|

use crate::utils::structs::{InnerMap, UpstreamsDashMap, UpstreamsIdMap};

|

||||||

use crate::utils::tools::*;

|

use crate::utils::tools::*;

|

||||||

use dashmap::DashMap;

|

use dashmap::DashMap;

|

||||||

use log::{error, info, warn};

|

use log::{error, info, warn};

|

||||||

@@ -9,9 +9,11 @@ use std::time::Duration;

|

|||||||

use tokio::time::interval;

|

use tokio::time::interval;

|

||||||

use tonic::transport::Endpoint;

|

use tonic::transport::Endpoint;

|

||||||

|

|

||||||

|

#[allow(unused_assignments)]

|

||||||

pub async fn hc2(upslist: Arc<UpstreamsDashMap>, fullist: Arc<UpstreamsDashMap>, idlist: Arc<UpstreamsIdMap>, params: (&str, u64)) {

|

pub async fn hc2(upslist: Arc<UpstreamsDashMap>, fullist: Arc<UpstreamsDashMap>, idlist: Arc<UpstreamsIdMap>, params: (&str, u64)) {

|

||||||

let mut period = interval(Duration::from_secs(params.1));

|

let mut period = interval(Duration::from_secs(params.1));

|

||||||

let mut first_run = 0;

|

let mut first_run = 0;

|

||||||

|

let client = Client::builder().timeout(Duration::from_secs(2)).danger_accept_invalid_certs(true).build().unwrap();

|

||||||

loop {

|

loop {

|

||||||

tokio::select! {

|

tokio::select! {

|

||||||

_ = period.tick() => {

|

_ = period.tick() => {

|

||||||

@@ -20,47 +22,44 @@ pub async fn hc2(upslist: Arc<UpstreamsDashMap>, fullist: Arc<UpstreamsDashMap>,

|

|||||||

for val in fclone.iter() {

|

for val in fclone.iter() {

|

||||||

let host = val.key();

|

let host = val.key();

|

||||||

let inner = DashMap::new();

|

let inner = DashMap::new();

|

||||||

let mut _scheme: (String, u16, bool, bool, bool) = ("".to_string(), 0, false, false, false);

|

let mut scheme = InnerMap::new();

|

||||||

for path_entry in val.value().iter() {

|

for path_entry in val.value().iter() {

|

||||||

// let inner = DashMap::new();

|

|

||||||

let path = path_entry.key();

|

let path = path_entry.key();

|

||||||

let mut innervec= Vec::new();

|

let mut innervec= Vec::new();

|

||||||

for k in path_entry.value().0 .iter().enumerate() {

|

for k in path_entry.value().0 .iter().enumerate() {

|

||||||

let (ip, port, _ssl, _version, _redir) = k.1;

|

|

||||||

let mut _link = String::new();

|

let mut _link = String::new();

|

||||||

let tls = detect_tls(ip, port).await;

|

let tls = detect_tls(k.1.address.as_str(), &k.1.port, &client).await;

|

||||||

let mut is_h2 = false;

|

let mut is_h2 = false;

|

||||||

|

|

||||||

// if tls.1 == Some(Version::HTTP_11) {

|

|

||||||

// println!(" V1: ==> {:?}", tls.1)

|

|

||||||

// }else if tls.1 == Some(Version::HTTP_2) {

|

|

||||||

// is_h2 = true;

|

|

||||||

// println!(" V2: ==> {:?}", tls.1)

|

|

||||||

// }

|

|

||||||

|

|

||||||

if tls.1 == Some(Version::HTTP_2) {

|

if tls.1 == Some(Version::HTTP_2) {

|

||||||

is_h2 = true;

|

is_h2 = true;

|

||||||

// println!(" V2: ==> {} ==> {:?}", tls.0, tls.1)

|

|

||||||

}

|

}

|

||||||

|

|

||||||

match tls.0 {

|

match tls.0 {

|

||||||

true => _link = format!("https://{}:{}{}", ip, port, path),

|

true => _link = format!("https://{}:{}{}", k.1.address, k.1.port, path),

|

||||||

false => _link = format!("http://{}:{}{}", ip, port, path),

|

false => _link = format!("http://{}:{}{}", k.1.address, k.1.port, path),

|

||||||

}

|

}

|

||||||

// if _pref == "https://" {

|

scheme = InnerMap {

|

||||||

// _scheme = (ip.to_string(), *port, true);

|

address: k.1.address.clone(),

|

||||||

// }else {

|

port: k.1.port,

|

||||||

// _scheme = (ip.to_string(), *port, false);

|

is_ssl: tls.0,

|

||||||

// }

|

is_http2: is_h2,

|

||||||

_scheme = (ip.to_string(), *port, tls.0, is_h2, *_redir);

|

to_https: k.1.to_https,

|

||||||

// let link = format!("{}{}:{}{}", _pref, ip, port, path);

|

rate_limit: k.1.rate_limit,

|

||||||

let resp = http_request(_link.as_str(), params.0, "").await;

|

};

|

||||||

|

let resp = http_request(_link.as_str(), params.0, "", &client).await;

|

||||||

match resp.0 {

|

match resp.0 {

|

||||||

true => {

|

true => {

|

||||||

if resp.1 {

|

if resp.1 {

|

||||||

_scheme = (ip.to_string(), *port, tls.0, true, *_redir);

|

scheme = InnerMap {

|

||||||

|

address: k.1.address.clone(),

|

||||||

|

port: k.1.port,

|

||||||

|

is_ssl: tls.0,

|

||||||

|

is_http2: is_h2,

|

||||||

|

to_https: k.1.to_https,

|

||||||

|

rate_limit: k.1.rate_limit,

|

||||||

|

};

|

||||||

}

|

}

|

||||||

innervec.push(_scheme.clone());

|

innervec.push(scheme);

|

||||||

}

|

}

|

||||||

false => {

|

false => {

|

||||||

warn!("Dead Upstream : {}", _link);

|

warn!("Dead Upstream : {}", _link);

|

||||||

@@ -91,33 +90,26 @@ pub async fn hc2(upslist: Arc<UpstreamsDashMap>, fullist: Arc<UpstreamsDashMap>,

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

#[allow(dead_code)]

|

async fn http_request(url: &str, method: &str, payload: &str, client: &Client) -> (bool, bool) {

|

||||||

async fn http_request(url: &str, method: &str, payload: &str) -> (bool, bool) {

|

|

||||||

let client = Client::builder().danger_accept_invalid_certs(true).build().unwrap();

|

|

||||||

let timeout = Duration::from_secs(1);

|

|

||||||

if !["POST", "GET", "HEAD"].contains(&method) {

|

if !["POST", "GET", "HEAD"].contains(&method) {

|

||||||

error!("Method {} not supported. Only GET|POST|HEAD are supported ", method);

|

error!("Method {} not supported. Only GET|POST|HEAD are supported ", method);

|

||||||

return (false, false);

|

return (false, false);

|

||||||

}

|

}

|

||||||

async fn send_request(client: &Client, method: &str, url: &str, payload: &str, timeout: Duration) -> Option<reqwest::Response> {

|

async fn send_request(client: &Client, method: &str, url: &str, payload: &str) -> Option<reqwest::Response> {

|

||||||

match method {

|

match method {

|

||||||

"POST" => client.post(url).body(payload.to_owned()).timeout(timeout).send().await.ok(),

|

"POST" => client.post(url).body(payload.to_owned()).send().await.ok(),

|

||||||

"GET" => client.get(url).timeout(timeout).send().await.ok(),

|

"GET" => client.get(url).send().await.ok(),

|

||||||

"HEAD" => client.head(url).timeout(timeout).send().await.ok(),

|

"HEAD" => client.head(url).send().await.ok(),

|

||||||

_ => None,

|

_ => None,

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

match send_request(&client, method, url, payload, timeout).await {

|

match send_request(&client, method, url, payload).await {

|

||||||

Some(response) => {

|

Some(response) => {

|

||||||

let status = response.status().as_u16();

|

let status = response.status().as_u16();

|

||||||

((99..499).contains(&status), false)

|

((99..499).contains(&status), false)

|

||||||

}

|

}

|

||||||

None => {

|

None => (ping_grpc(&url).await, true),

|

||||||

// let fallback_url = url.replace("https", "http");

|

|

||||||

// ping_grpc(&fallback_url).await

|

|

||||||

(ping_grpc(&url).await, true)

|

|

||||||

}

|

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||
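Review note: both the old and the new `http_request` treat an upstream as alive when `(99..499).contains(&status)` holds. A Rust range written with `..` is half-open, so the check accepts 99 through 498 and rejects 499 and above. The sketch below isolates that check; the helper name `is_alive` is hypothetical and does not appear in the diff.

```rust
/// Hypothetical helper mirroring the status check inside `http_request`.
/// `99..499` is half-open: 99 is included, 499 is excluded.
fn is_alive(status: u16) -> bool {
    (99..499).contains(&status)
}
```

If 499 was meant to count as alive, the inclusive form `99..=499` would be needed. Whether that is the intent is not stated in the diff.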

@@ -128,10 +120,7 @@ pub async fn ping_grpc(addr: &str) -> bool {

|

|||||||

let endpoint = endpoint.timeout(Duration::from_secs(2));

|

let endpoint = endpoint.timeout(Duration::from_secs(2));

|

||||||

|

|

||||||

match tokio::time::timeout(Duration::from_secs(3), endpoint.connect()).await {

|

match tokio::time::timeout(Duration::from_secs(3), endpoint.connect()).await {

|

||||||

Ok(Ok(_channel)) => {

|

Ok(Ok(_channel)) => true,

|

||||||

// println!("{:?} ==> {:?} ==> {}", endpoint, _channel, addr);

|

|

||||||

true

|

|

||||||

}

|

|

||||||

_ => false,

|

_ => false,

|

||||||

}

|

}

|

||||||

} else {

|

} else {

|

||||||

@@ -139,15 +128,24 @@ pub async fn ping_grpc(addr: &str) -> bool {

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

async fn detect_tls(ip: &str, port: &u16) -> (bool, Option<Version>) {

|

async fn detect_tls(ip: &str, port: &u16, client: &Client) -> (bool, Option<Version>) {

|

||||||

let url = format!("https://{}:{}", ip, port);

|

let https_url = format!("https://{}:{}", ip, port);

|

||||||

// let url = format!("{}:{}", ip, port);

|

match client.get(&https_url).send().await {

|

||||||

let client = Client::builder().timeout(Duration::from_secs(2)).danger_accept_invalid_certs(true).build().unwrap();

|

Ok(response) => {

|

||||||

match client.get(&url).send().await {

|

// println!("{} => {:?} (HTTPS)", https_url, response.version());

|

||||||

Ok(response) => (true, Some(response.version())),

|

return (true, Some(response.version()));

|

||||||

Err(e) => {

|

}

|

||||||

if e.is_builder() || e.is_connect() || e.to_string().contains("tls") {

|

_ => {}

|

||||||

(false, None)

|

}

|

||||||

|

let http_url = format!("http://{}:{}", ip, port);

|

||||||

|

match client.get(&http_url).send().await {

|

||||||

|

Ok(response) => {

|

||||||

|

// println!("{} => {:?} (HTTP)", http_url, response.version());

|

||||||

|

(false, Some(response.version()))

|

||||||

|

}

|

||||||

|

Err(_) => {

|

||||||

|

if ping_grpc(&http_url).await {

|

||||||

|

(false, Some(Version::HTTP_2))

|

||||||

} else {

|

} else {

|

||||||

(false, None)

|

(false, None)

|

||||||

}

|

}

|

||||||

|

|||||||
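Review note: as far as this hunk shows, the new `detect_tls` probes in a fixed order: HTTPS first, then plain HTTP, then a gRPC ping as a last resort. The following is a synchronous model of that control flow only, not the async implementation. The `Version` enum stands in for `reqwest::Version`, and the function name `detect` and its closure parameters are assumptions made for illustration.

```rust
/// Stand-in for `reqwest::Version`, reduced to two variants (assumption).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Version {
    Http11,
    Http2,
}

/// Hypothetical model of the probing order in the new `detect_tls`.
/// Each closure represents one network attempt and runs only when the
/// previous attempt failed, as in the diff.
fn detect(
    https: impl FnOnce() -> Option<Version>,
    http: impl FnOnce() -> Option<Version>,
    grpc: impl FnOnce() -> bool,
) -> (bool, Option<Version>) {
    // 1. A successful HTTPS GET means the upstream speaks TLS.
    if let Some(v) = https() {
        return (true, Some(v));
    }
    // 2. Otherwise try plain HTTP and report the version it answered with.
    if let Some(v) = http() {
        return (false, Some(v));
    }
    // 3. Last resort: a successful gRPC ping is reported as cleartext HTTP/2.
    if grpc() {
        (false, Some(Version::Http2))
    } else {
        (false, None)
    }
}
```

The first tuple element is `true` only on the HTTPS branch, so an upstream that fails the HTTPS probe for any reason is reported as non-TLS.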

@@ -1,133 +1,153 @@

|

|||||||

use crate::utils::structs::*;

|

use crate::utils::structs::*;

|

||||||

use dashmap::DashMap;

|

use dashmap::DashMap;

|

||||||

use log::{error, info, warn};

|

use log::{error, info, warn};

|

||||||

use serde_yaml::Error;

|

|

||||||

use std::collections::HashMap;

|

use std::collections::HashMap;

|

||||||

use std::fs;

|

use std::fs;

|

||||||

use std::sync::atomic::AtomicUsize;

|

use std::sync::atomic::AtomicUsize;

|

||||||

|

|

||||||

pub fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {

|

pub fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {

|

||||||

let mut toreturn: Configuration = Configuration {

|

let yaml_data = match kind {

|

||||||

upstreams: Default::default(),

|

"filepath" => match fs::read_to_string(d) {

|

||||||

headers: Default::default(),

|

Ok(data) => {

|

||||||

consul: None,

|

info!("Reading upstreams from {}", d);

|

||||||

typecfg: "".to_string(),

|

data

|

||||||

extraparams: Extraparams {

|

}

|

||||||

sticky_sessions: false,

|

Err(e) => {

|

||||||

to_https: None,

|

error!("Reading: {}: {:?}", d, e);

|

||||||

authentication: DashMap::new(),

|

warn!("Running with empty upstreams list, update it via API");

|

||||||

|

return None;

|

||||||

|

}

|

||||||

},

|

},

|

||||||

};

|

|

||||||

toreturn.upstreams = UpstreamsDashMap::new();

|

|

||||||

toreturn.headers = Headers::new();

|

|

||||||

|

|

||||||

let mut yaml_data = d.to_string();

|

|

||||||

match kind {

|

|

||||||

"filepath" => {

|

|

||||||

let _ = match fs::read_to_string(d) {

|

|

||||||

Ok(data) => {

|

|

||||||

info!("Reading upstreams from {}", d);

|

|

||||||

yaml_data = data

|

|

||||||

}

|

|

||||||

Err(e) => {

|

|

||||||

error!("Reading: {}: {:?}", d, e.to_string());

|

|

||||||

warn!("Running with empty upstreams list, update it via API");

|

|

||||||

return None;

|

|

||||||

}

|

|

||||||

};

|

|

||||||

}

|

|

||||||

"content" => {

|

"content" => {

|

||||||

info!("Reading upstreams from API post body");

|

info!("Reading upstreams from API post body");

|

||||||

|

d.to_string()

|

||||||

}

|

}

|

||||||

_ => error!("Mismatched parameter, only filepath|content is allowed "),

|

_ => {

|

||||||

}

|

error!("Mismatched parameter, only filepath|content is allowed");

|

||||||

|

return None;

|

||||||

let p: Result<Config, Error> = serde_yaml::from_str(&yaml_data);

|

|

||||||

match p {

|

|

||||||

Ok(parsed) => {

|

|

||||||

let global_headers = DashMap::new();

|

|

||||||

let mut hl = Vec::new();

|

|

||||||

if let Some(headers) = &parsed.headers {

|

|

||||||

for header in headers.iter() {

|

|

||||||

if let Some((key, val)) = header.split_once(':') {

|

|

||||||

hl.push((key.to_string(), val.to_string()));

|

|

||||||

}

|

|

||||||

}

|

|

||||||

global_headers.insert("/".to_string(), hl);

|

|

||||||

toreturn.headers.insert("GLOBAL_HEADERS".to_string(), global_headers);

|

|

||||||

|

|

||||||

toreturn.extraparams.sticky_sessions = parsed.sticky_sessions;

|

|

||||||

toreturn.extraparams.to_https = parsed.to_https;

|

|

||||||

}

|

|

||||||

if let Some(auth) = &parsed.authorization {

|

|

||||||

let name = auth.get("type").unwrap().to_string();

|

|

||||||

let creds = auth.get("creds").unwrap().to_string();

|

|

||||||

let val: Vec<String> = vec![name, creds];

|

|

||||||

toreturn.extraparams.authentication.insert("authorization".to_string(), val);

|

|

||||||

} else {

|

|

||||||

toreturn.extraparams.authentication = DashMap::new();

|

|

||||||

}

|

|

||||||

|

|

||||||

match parsed.provider.as_str() {

|

|

||||||

"file" => {

|

|

||||||

toreturn.typecfg = "file".to_string();

|

|

||||||

if let Some(upstream) = parsed.upstreams {

|

|

||||||

for (hostname, host_config) in upstream {

|

|

||||||

let path_map = DashMap::new();

|

|

||||||

let header_list = DashMap::new();

|

|

||||||

for (path, path_config) in host_config.paths {

|

|

||||||

let mut server_list = Vec::new();

|

|

||||||

let mut hl = Vec::new();

|

|

||||||

if let Some(headers) = &path_config.headers {

|

|

||||||

for header in headers.iter().by_ref() {

|

|

||||||

if let Some((key, val)) = header.split_once(':') {

|

|

||||||

hl.push((key.to_string(), val.to_string()));

|

|

||||||

}

|

|

||||||

}

|

|

||||||

}

|

|

||||||

header_list.insert(path.clone(), hl);

|

|

||||||

for server in path_config.servers {

|

|

||||||

if let Some((ip, port_str)) = server.split_once(':') {

|

|

||||||

if let Ok(port) = port_str.parse::<u16>() {

|

|

||||||

// let to_https = matches!(path_config.to_https, Some(true));

|

|

||||||

let to_https = path_config.to_https.unwrap_or(false);

|

|

||||||

server_list.push((ip.to_string(), port, true, false, to_https));

|

|

||||||

}

|

|

||||||

}

|

|

||||||

}

|

|

||||||

path_map.insert(path, (server_list, AtomicUsize::new(0)));

|

|

||||||

}

|

|

||||||

toreturn.headers.insert(hostname.clone(), header_list);

|

|

||||||

toreturn.upstreams.insert(hostname, path_map);

|

|

||||||

}

|

|

||||||

}

|

|

||||||

Some(toreturn)

|

|

||||||

}

|

|

||||||

"consul" => {

|

|

||||||

toreturn.typecfg = "consul".to_string();

|

|

||||||

let consul = parsed.consul;

|

|

||||||

match consul {

|

|

||||||

Some(consul) => {

|

|

||||||

toreturn.consul = Some(consul);

|

|

||||||

Some(toreturn)

|

|

||||||

}

|

|

||||||

None => None,

|

|

||||||

}

|

|

||||||

}

|

|

||||||

"kubernetes" => None,

|

|

||||||

_ => {

|

|

||||||

warn!("Unknown provider {}", parsed.provider);

|

|

||||||

None

|

|

||||||

}

|

|

||||||

}

|

|

||||||

}

|

}

|

||||||

|

};

|

||||||

|

|

||||||

|

let parsed: Config = match serde_yaml::from_str(&yaml_data) {

|

||||||

|

Ok(cfg) => cfg,

|

||||||

Err(e) => {

|

Err(e) => {

|

||||||

error!("Failed to parse upstreams file: {}", e);

|

error!("Failed to parse upstreams file: {}", e);

|

||||||

|

return None;

|

||||||

|

}

|

||||||

|

};

|

||||||

|

|

||||||

|

let mut toreturn = Configuration::default();

|

||||||

|

|

||||||

|

populate_headers_and_auth(&mut toreturn, &parsed);

|

||||||

|

toreturn.typecfg = parsed.provider.clone();

|

||||||

|

|

||||||

|

match parsed.provider.as_str() {

|

||||||

|

"file" => {

|

||||||

|

populate_file_upstreams(&mut toreturn, &parsed);

|

||||||

|

Some(toreturn)

|

||||||

|

}

|

||||||

|

"consul" => {

|

||||||

|

toreturn.consul = parsed.consul;

|

||||||

|

if toreturn.consul.is_some() {

|

||||||

|

Some(toreturn)

|

||||||

|

} else {

|

||||||

|

None

|

||||||

|

}

|

||||||

|

}

|

||||||

|

"kubernetes" => None,

|

||||||

|

_ => {

|

||||||

|

warn!("Unknown provider {}", parsed.provider);

|

||||||

None

|

None

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||
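Review note: the rewritten `load_configuration` turns the `match kind` block into an expression that yields `yaml_data` and returns early on failure. One behavioural change follows from that: an unknown `kind` used to be logged and then parsed as YAML anyway, while the new code returns `None`. The sketch below reduces that first step to the standard library; `read_source` is a hypothetical name and the logging calls are left out.

```rust
use std::fs;

/// Hypothetical reduction of the first step of the new `load_configuration`:
/// resolve the YAML text from a file path or from an inline body.
fn read_source(d: &str, kind: &str) -> Option<String> {
    let yaml_data = match kind {
        "filepath" => match fs::read_to_string(d) {
            Ok(data) => data,
            // The diff logs the error and a warning before returning.
            Err(_) => return None,
        },
        "content" => d.to_string(),
        // New behaviour: an unknown kind aborts instead of falling through.
        _ => return None,
    };
    Some(yaml_data)
}
```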

|

|

||||||

|

fn populate_headers_and_auth(config: &mut Configuration, parsed: &Config) {

|

||||||

|

if let Some(headers) = &parsed.headers {

|

||||||

|

let mut hl = Vec::new();

|

||||||

|

for header in headers {

|

||||||

|

if let Some((key, val)) = header.split_once(':') {

|

||||||

|

hl.push((key.trim().to_string(), val.trim().to_string()));

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

let global_headers = DashMap::new();

|

||||||

|

global_headers.insert("/".to_string(), hl);

|

||||||

|

config.headers.insert("GLOBAL_HEADERS".to_string(), global_headers);

|

||||||

|

}

|

||||||

|

|

||||||

|

config.extraparams.sticky_sessions = parsed.sticky_sessions;

|

||||||

|

config.extraparams.to_https = parsed.to_https;

|

||||||

|

config.extraparams.rate_limit = parsed.rate_limit;

|

||||||

|

|

||||||

|

if let Some(rate) = &parsed.rate_limit {

|

||||||

|

info!("Applied Global Rate Limit : {} request per second", rate);

|

||||||

|

}

|

||||||

|

|

||||||

|

if let Some(auth) = &parsed.authorization {

|

||||||

|

let name = auth.get("type").unwrap_or(&"".to_string()).to_string();

|

||||||

|

let creds = auth.get("creds").unwrap_or(&"".to_string()).to_string();

|

||||||

|

config.extraparams.authentication.insert("authorization".to_string(), vec![name, creds]);

|

||||||

|

} else {

|

||||||

|

config.extraparams.authentication = DashMap::new();

|

||||||

|

}

|

||||||

|

}

|

||||||
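Review note: the new header parsing adds `.trim()` to both halves of `split_once(':')`. The old code kept the whitespace, so `"X-Frame-Options: DENY"` produced a value with a leading space. A minimal sketch of the new behaviour, with `parse_header` as a hypothetical helper name:

```rust
/// Hypothetical helper isolating the header parsing from the new code:
/// split at the first ':' and trim both halves.
fn parse_header(header: &str) -> Option<(String, String)> {
    let (key, val) = header.split_once(':')?;
    Some((key.trim().to_string(), val.trim().to_string()))
}
```

Because `split_once` cuts at the first colon only, values that contain colons (a URL, for example) survive intact. Entries without a colon yield `None`, which corresponds to the `if let` in the diff skipping them silently.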

|

|

||||||

|

fn populate_file_upstreams(config: &mut Configuration, parsed: &Config) {

|

||||||

|

if let Some(upstreams) = &parsed.upstreams {

|

||||||

|

for (hostname, host_config) in upstreams {

|

||||||

|

let path_map = DashMap::new();

|

||||||

|

let header_list = DashMap::new();

|

||||||

|

for (path, path_config) in &host_config.paths {

|

||||||

|

if let Some(rate) = &path_config.rate_limit {

|

||||||

|

info!("Applied Rate Limit for {} : {} request per second", hostname, rate);

|

||||||

|

}

|

||||||

|

|

||||||

|

let mut server_list = Vec::new();

|

||||||

|

let mut hl = Vec::new();

|

||||||

|

|

||||||

|

if let Some(headers) = &path_config.headers {

|

||||||

|

for header in headers {

|

||||||

|

if let Some((key, val)) = header.split_once(':') {

|

||||||

|

hl.push((key.trim().to_string(), val.trim().to_string()));

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

header_list.insert(path.clone(), hl);

|

||||||

|

|

||||||

|

for server in &path_config.servers {

|

||||||

|

// let mut rate: Option<isize> = None;

|

||||||

|

// let size: isize = path_config.servers.len() as isize;

|

||||||

|

// if let Some(limit) = &path_config.rate_limit {

|

||||||

|

// if size > 0 {

|

||||||

|

// rate = Some(limit / size);

|

||||||

|

// }

|

||||||

|

// }

|

||||||

|

|

||||||

|

if let Some((ip, port_str)) = server.split_once(':') {

|

||||||

|

if let Ok(port) = port_str.parse::<u16>() {

|

||||||

|

server_list.push(InnerMap {

|

||||||

|

address: ip.trim().to_string(),

|

||||||

|

port,

|

||||||

|

is_ssl: true,

|

||||||

|

is_http2: false,

|

||||||

|

to_https: path_config.to_https.unwrap_or(false),

|

||||||

|

// rate_limit: rate,

|

||||||

|

rate_limit: path_config.rate_limit,

|

||||||

|

});

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

path_map.insert(path.clone(), (server_list, AtomicUsize::new(0)));

|

||||||

|

}

|

||||||

|

|

||||||

|

config.headers.insert(hostname.clone(), header_list);

|

||||||

|

config.upstreams.insert(hostname.clone(), path_map);

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||
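Review note: `populate_file_upstreams` builds one `InnerMap` per `host:port` entry and silently drops entries that fail to parse. The sketch below reproduces that step with a local copy of the struct so it compiles on its own. `parse_server` is a hypothetical helper; the field values mirror the diff (`is_ssl: true`, `is_http2: false` as initial values).

```rust
/// Local copy of the `InnerMap` struct introduced in this diff.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
struct InnerMap {
    address: String,
    port: u16,
    is_ssl: bool,
    is_http2: bool,
    to_https: bool,
    rate_limit: Option<isize>,
}

/// Hypothetical helper: parse one "host:port" entry the way the diff does.
/// Returns None where the diff would skip the entry.
fn parse_server(server: &str, to_https: Option<bool>, rate_limit: Option<isize>) -> Option<InnerMap> {
    // Split at the first ':'; the address is trimmed, the port is not.
    let (ip, port_str) = server.split_once(':')?;
    let port = port_str.parse::<u16>().ok()?;
    Some(InnerMap {
        address: ip.trim().to_string(),
        port,
        is_ssl: true,
        is_http2: false,
        to_https: to_https.unwrap_or(false),
        rate_limit,
    })
}
```

Two consequences of this parsing: a bracketed IPv6 literal such as `[::1]:8080` is split at its first colon and rejected, and a port with surrounding whitespace fails `parse::<u16>()`. In both cases the entry is dropped without a log line.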

|

|

||||||

pub fn parce_main_config(path: &str) -> AppConfig {

|

pub fn parce_main_config(path: &str) -> AppConfig {

|

||||||

info!("Parsing configuration");

|

info!("Parsing configuration");

|

||||||

let data = fs::read_to_string(path).unwrap();

|

let data = fs::read_to_string(path).unwrap();

|

||||||

@@ -150,15 +170,5 @@ pub fn parce_main_config(path: &str) -> AppConfig {

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

};

|

};

|

||||||

// match cfo.config_tls_address.clone() {

|

|

||||||

// Some(tls_cert) => {

|

|

||||||

// if let Some((ip, port_str)) = tls_cert.split_once(':') {

|

|

||||||

// if let Ok(port) = port_str.parse::<u16>() {

|

|

||||||

// cfo.local_tls_server = Option::from((ip.to_string(), port));

|

|

||||||

// }

|

|

||||||

// }

|

|

||||||

// }

|

|

||||||

// None => {}

|

|

||||||

// };

|

|

||||||

cfo

|

cfo

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -3,54 +3,81 @@ use serde::{Deserialize, Serialize};

|

|||||||

use std::collections::HashMap;

|

use std::collections::HashMap;

|

||||||

use std::sync::atomic::AtomicUsize;

|

use std::sync::atomic::AtomicUsize;

|

||||||

|

|

||||||

pub type InnerMap = (String, u16, bool, bool, bool);

|

// pub type InnerMap = BackendConfig;

|

||||||

pub type UpstreamsDashMap = DashMap<String, DashMap<String, (Vec<InnerMap>, AtomicUsize)>>;

|

pub type UpstreamsDashMap = DashMap<String, DashMap<String, (Vec<InnerMap>, AtomicUsize)>>;

|

||||||

|

|

||||||

|

// #[derive(Debug, Default)]

|

||||||

|

// pub struct UpstreamsMap {

|

||||||

|

// pub upstreams: DashMap<String, DashMap<String, (Vec<InnerMap>, AtomicUsize)>>,

|

||||||

|

// pub ratelimit: DashMap<String, Option<isize>>,

|

||||||

|

// }

|

||||||

|

// impl UpstreamsMap {

|

||||||

|

// pub fn new() -> Self {

|

||||||

|

// Self {

|

||||||

|

// upstreams: Default::default(),

|

||||||

|

// ratelimit: Default::default(),

|

||||||

|

// }

|

||||||

|

// }

|

||||||

|

// }

|

||||||

|

//

|

||||||

|

// pub type XUpstreamsDashMap = DashMap<String, UpstreamsMap>;

|

||||||

|

|

||||||

pub type UpstreamsIdMap = DashMap<String, InnerMap>;

|

pub type UpstreamsIdMap = DashMap<String, InnerMap>;

|

||||||

pub type Headers = DashMap<String, DashMap<String, Vec<(String, String)>>>;

|

pub type Headers = DashMap<String, DashMap<String, Vec<(String, String)>>>;

|

||||||

|

|

||||||

#[derive(Debug, Clone, Serialize, Deserialize)]

|

#[derive(Debug, Default, Clone, Serialize, Deserialize)]

|

||||||

pub struct ServiceMapping {

|

pub struct ServiceMapping {

|

||||||

pub proxy: String,

|

pub proxy: String,

|

||||||

pub real: String,

|

pub real: String,

|

||||||

}

|

}

|

||||||

|

|

||||||

#[derive(Clone, Debug)]

|

#[derive(Clone, Debug, Default)]

|

||||||

pub struct Extraparams {

|

pub struct Extraparams {

|

||||||

pub sticky_sessions: bool,

|

pub sticky_sessions: bool,

|

||||||

pub to_https: Option<bool>,

|

pub to_https: Option<bool>,

|

||||||

pub authentication: DashMap<String, Vec<String>>,

|

pub authentication: DashMap<String, Vec<String>>,

|

||||||

|

pub rate_limit: Option<isize>,

|

||||||

}

|

}

|

||||||

|

|

||||||

#[derive(Clone, Debug, Serialize, Deserialize)]

|

#[derive(Clone, Default, Debug, Serialize, Deserialize)]

|

||||||

pub struct Consul {

|

pub struct Consul {

|

||||||

pub servers: Option<Vec<String>>,

|

pub servers: Option<Vec<String>>,

|

||||||

pub services: Option<Vec<ServiceMapping>>,

|

pub services: Option<Vec<ServiceMapping>>,

|

||||||

pub token: Option<String>,

|

pub token: Option<String>,

|

||||||

}

|

}

|

||||||

#[derive(Debug, Serialize, Deserialize)]

|

#[derive(Debug, Default, Serialize, Deserialize)]

|

||||||

pub struct Config {

|

pub struct Config {

|

||||||

pub provider: String,

|

pub provider: String,

|

||||||

pub sticky_sessions: bool,

|

pub sticky_sessions: bool,

|

||||||

pub to_https: Option<bool>,

|

pub to_https: Option<bool>,

|

||||||

|

#[serde(default)]

|

||||||

pub upstreams: Option<HashMap<String, HostConfig>>,

|

pub upstreams: Option<HashMap<String, HostConfig>>,

|

||||||

|

#[serde(default)]

|

||||||

pub globals: Option<HashMap<String, Vec<String>>>,

|

pub globals: Option<HashMap<String, Vec<String>>>,

|

||||||

|

#[serde(default)]

|

||||||

pub headers: Option<Vec<String>>,

|

pub headers: Option<Vec<String>>,

|

||||||

|

#[serde(default)]

|

||||||

pub authorization: Option<HashMap<String, String>>,

|

pub authorization: Option<HashMap<String, String>>,

|

||||||

|

#[serde(default)]

|

||||||

pub consul: Option<Consul>,

|

pub consul: Option<Consul>,

|

||||||

|

#[serde(default)]

|

||||||

|

pub rate_limit: Option<isize>,

|

||||||

}

|

}

|

||||||

|

|

||||||

#[derive(Debug, Serialize, Deserialize)]

|

#[derive(Debug, Default, Serialize, Deserialize)]

|

||||||

pub struct HostConfig {

|

pub struct HostConfig {

|

||||||

pub paths: HashMap<String, PathConfig>,

|

pub paths: HashMap<String, PathConfig>,

|

||||||

|

pub rate_limit: Option<isize>,

|

||||||

}

|

}

|

||||||

|

|

||||||

#[derive(Debug, Serialize, Deserialize)]

|

#[derive(Debug, Default, Serialize, Deserialize)]

|

||||||

pub struct PathConfig {

|

pub struct PathConfig {

|

||||||

pub servers: Vec<String>,

|

pub servers: Vec<String>,

|

||||||

pub to_https: Option<bool>,

|

pub to_https: Option<bool>,

|

||||||

pub headers: Option<Vec<String>>,

|

pub headers: Option<Vec<String>>,

|

||||||

|

pub rate_limit: Option<isize>,

|

||||||

}

|

}

|

||||||

#[derive(Debug)]

|

#[derive(Debug, Default)]

|

||||||

pub struct Configuration {

|

pub struct Configuration {

|

||||||

pub upstreams: UpstreamsDashMap,

|

pub upstreams: UpstreamsDashMap,

|

||||||

pub headers: Headers,

|

pub headers: Headers,

|

||||||

@@ -59,7 +86,7 @@ pub struct Configuration {

|

|||||||

pub extraparams: Extraparams,

|

pub extraparams: Extraparams,

|

||||||

}

|

}

|

||||||

|

|

||||||

#[derive(Debug, Deserialize)]

|

#[derive(Debug, Default, Serialize, Deserialize)]

|

||||||

pub struct AppConfig {

|

pub struct AppConfig {

|

||||||

pub hc_interval: u16,

|

pub hc_interval: u16,

|

||||||

pub hc_method: String,

|

pub hc_method: String,

|

||||||

@@ -68,19 +95,37 @@ pub struct AppConfig {

|

|||||||

pub master_key: String,

|

pub master_key: String,

|

||||||

pub config_address: String,

|

pub config_address: String,

|

||||||

pub proxy_address_http: String,

|

pub proxy_address_http: String,

|

||||||

|

pub config_api_enabled: bool,

|

||||||

pub config_tls_address: Option<String>,

|

pub config_tls_address: Option<String>,

|

||||||

pub config_tls_certificate: Option<String>,

|

pub config_tls_certificate: Option<String>,

|

||||||

pub config_tls_key_file: Option<String>,

|

pub config_tls_key_file: Option<String>,

|

||||||

pub proxy_address_tls: Option<String>,

|

pub proxy_address_tls: Option<String>,

|

||||||

pub proxy_port_tls: Option<u16>,

|

pub proxy_port_tls: Option<u16>,

|

||||||

// pub tls_certificate: Option<String>,

|

|

||||||

// pub tls_key_file: Option<String>,

|

|

||||||

pub local_server: Option<(String, u16)>,

|

pub local_server: Option<(String, u16)>,

|

||||||

pub proxy_certificates: Option<String>,

|

pub proxy_certificates: Option<String>,

|

||||||

|

pub file_server_address: Option<String>,

|

||||||

|

pub file_server_folder: Option<String>,

|

||||||

}

|

}

|

||||||

|

|

||||||

// #[derive(Debug)]

|

#[derive(Debug, Clone, PartialEq, Eq, Hash)]

|

||||||

// pub struct CertificateMove {

|

pub struct InnerMap {

|

||||||

// pub cert_tx: Sender<CertificateConfig>,

|

pub address: String,

|

||||||

// pub cert_rx: Receiver<CertificateConfig>,

|

pub port: u16,

|

||||||

// }

|

pub is_ssl: bool,

|

||||||

|

pub is_http2: bool,

|

||||||

|

pub to_https: bool,

|

||||||

|

pub rate_limit: Option<isize>,

|

||||||

|

}

|

||||||

|

|

||||||

|

impl InnerMap {

|

||||||

|

pub fn new() -> Self {

|

||||||

|

Self {

|

||||||

|

address: Default::default(),

|

||||||

|

port: Default::default(),

|

||||||

|

is_ssl: Default::default(),

|

||||||

|

is_http2: Default::default(),

|

||||||

|

to_https: Default::default(),

|

||||||

|

rate_limit: Default::default(),

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|||||||
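Review note: the hand-written `InnerMap::new()` fills every field with `Default::default()`, which is what `#[derive(Default)]` would generate. The sketch below shows the equivalence on a local copy of the struct. Adding `Default` to the derive list is an assumption of this sketch; the diff derives only `Debug, Clone, PartialEq, Eq, Hash`.

```rust
/// Local copy of `InnerMap`; `Default` is added here for comparison only.
#[derive(Debug, Default, Clone, PartialEq, Eq, Hash)]
struct InnerMap {
    address: String,
    port: u16,
    is_ssl: bool,
    is_http2: bool,
    to_https: bool,
    rate_limit: Option<isize>,
}

impl InnerMap {
    /// Same body as the `new()` in the diff.
    fn new() -> Self {
        Self {
            address: Default::default(),
            port: Default::default(),
            is_ssl: Default::default(),
            is_http2: Default::default(),
            to_https: Default::default(),
            rate_limit: Default::default(),
        }
    }
}
```

The `Eq` and `Hash` derives also make the struct usable as a `HashSet` or `HashMap` key, which the former tuple alias supported as well.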

@@ -1,4 +1,4 @@

|

|||||||

use crate::utils::structs::{UpstreamsDashMap, UpstreamsIdMap};

|

use crate::utils::structs::{InnerMap, UpstreamsDashMap, UpstreamsIdMap};

|

||||||

use crate::utils::tls;

|

use crate::utils::tls;

|

||||||

use crate::utils::tls::CertificateConfig;

|

use crate::utils::tls::CertificateConfig;

|

||||||

use dashmap::DashMap;

|

use dashmap::DashMap;

|

||||||

@@ -21,10 +21,12 @@ pub fn print_upstreams(upstreams: &UpstreamsDashMap) {

|

|||||||

|

|

||||||

for path_entry in host_entry.value().iter() {

|

for path_entry in host_entry.value().iter() {

|

||||||

let path = path_entry.key();

|

let path = path_entry.key();

|

||||||

println!(" Path: {}", path);

|

println!(" Path: {}", path);

|

||||||

|

for f in path_entry.value().0.clone() {

|

||||||

for (ip, port, ssl, vers, to_https) in path_entry.value().0.clone() {

|

println!(

|

||||||

println!(" ===> IP: {}, Port: {}, SSL: {}, H2: {}, To HTTPS: {}", ip, port, ssl, vers, to_https);

|

" IP: {}, Port: {}, SSL: {}, H2: {}, To HTTPS: {}",

|

||||||

|

f.address, f.port, f.is_ssl, f.is_http2, f.to_https

|

||||||

|

);

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

@@ -140,13 +142,21 @@ pub fn clone_idmap_into(original: &UpstreamsDashMap, cloned: &UpstreamsIdMap) {

|

|||||||

let new_vec = vec.clone();

|

let new_vec = vec.clone();

|

||||||

for x in vec.iter() {

|

for x in vec.iter() {

|

||||||

let mut id = String::new();

|

let mut id = String::new();

|

||||||

write!(&mut id, "{}:{}:{}", x.0, x.1, x.2).unwrap();

|

write!(&mut id, "{}:{}:{}", x.address, x.port, x.is_ssl).unwrap();

|

||||||

let mut hasher = Sha256::new();

|

let mut hasher = Sha256::new();

|

||||||

hasher.update(id.clone().into_bytes());

|

hasher.update(id.clone().into_bytes());

|

||||||

let hash = hasher.finalize();

|

let hash = hasher.finalize();

|

||||||

let hex_hash = base16ct::lower::encode_string(&hash);

|