Mirror of https://github.com/sadoyan/aralez.git (synced 2026-04-30 14:58:38 +08:00)

Compare commits

93 Commits

| SHA1 |
|---|
| bee307793c |
| 6e83775127 |
| baded40e6e |
| c0a419f6f7 |
| 8aff2fa875 |
| 9b4ee26a2b |
| f135106a44 |
| 389c12119a |
| 93a8661281 |
| 0505ce2849 |
| 72ed870538 |
| 68140d0cf0 |
| 7b9b206c13 |
| 4706b281bc |
| 1f8efc6af7 |
| 9f595b2709 |
| ed44516015 |
| 17da7862e3 |
| 24d00da855 |
| c9422759aa |
| 94b1f77734 |
| 9d986f9a28 |
| 3afa2f209f |
| c151fdf58b |
| 438426153f |
| 9bb01fd1b0 |
| abb5fef1d6 |
| 3618687ad5 |
| a893b3c301 |
| 3ff262c7f4 |
| 062f02259f |
| 1a4c9b7d55 |
| 6ef7f23823 |
| 2b437c65fb |
| 38055ae94e |
| 703de9e909 |
| 2c8b01295c |
| baebe1c00f |
| 6c1d3c5ef8 |
| 2d1a827007 |
| a2a5250711 |
| 985e923342 |
| 0fc79c022f |
| a43bccdfb8 |
| 5b87391fbb |
| c68a4ad83d |
| 8ba8d32df1 |
| 7a839065e6 |
| 74821654f3 |
| 78c83b802f |
| 012505b77e |
| 21c4cb0901 |
| 86dd3d3402 |
| d6b345202b |
| 5209d787e4 |
| 02de5f1c21 |
| 9519280026 |
| e87c60cf4f |
| 25693a7058 |
| 3b0b385ec7 |
| 5359c2e8e9 |
| 2b62d1e6de |
| 8a290e5084 |
| 3541b20c80 |
| bd5fed9be0 |
| b916b152ea |
| 5d4915d6b9 |
| 3ea3996e27 |
| dd069b8532 |
| c78245e695 |
| 66b1a1c399 |
| bba6dd8514 |
| 79485ac69d |
| 61c5625016 |
| 57bdc71acd |
| 9e09b829a6 |
| d3602fa578 |
| e304482667 |
| f8118f9596 |
| f654312466 |
| b44f7069a0 |
| a44979ec82 |
| ece4fa20af |
| 2ad3a059ab |
| 6f012cee69 |
| 51c88c8f7c |
| f91bc41103 |
| 21e1276ff5 |
| 8463cdabbc |
| d0e4b52ce6 |
| b552d24497 |
| 2e33d692bb |
| e586967830 |

.cargo/config.toml (Normal file, 13 changes)

@@ -0,0 +1,13 @@
+[target.aarch64-unknown-linux-musl]
+rustflags = [
+"-C", "link-arg=-Wl,--defsym=fopen64=fopen",
+"-C", "link-arg=-Wl,--defsym=fseeko64=fseeko",
+"-C", "link-arg=-Wl,--defsym=ftello64=ftello"
+]
+
+[target.x86_64-unknown-linux-musl]
+rustflags = [
+"-C", "link-arg=-Wl,--defsym=fopen64=fopen",
+"-C", "link-arg=-Wl,--defsym=fseeko64=fseeko",
+"-C", "link-arg=-Wl,--defsym=ftello64=ftello"
+]
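The `--defsym` flags above make the linker treat `fopen64`, `fseeko64` and `ftello64` as aliases of musl's `fopen`, `fseeko` and `ftello`, presumably because some C code linked into the musl builds references the glibc-style large-file names. If the same flags were ever wanted from a build script instead of `.cargo/config.toml`, a sketch could look like this. The helpers are hypothetical and not part of Aralez; they rely only on Cargo's `cargo:rustc-link-arg=` build-script directive and the `CARGO_CFG_TARGET_ENV` variable Cargo sets for build scripts.

```rust
/// Glibc-style large-file symbol names and the musl functions they should
/// resolve to, mirroring the `--defsym` entries in `.cargo/config.toml`.
const SYMBOL_ALIASES: [(&str, &str); 3] = [
    ("fopen64", "fopen"),
    ("fseeko64", "fseeko"),
    ("ftello64", "ftello"),
];

/// Builds the linker arguments, e.g. `-Wl,--defsym=fopen64=fopen`.
pub fn defsym_link_args() -> Vec<String> {
    SYMBOL_ALIASES
        .iter()
        .map(|(alias, target)| format!("-Wl,--defsym={alias}={target}"))
        .collect()
}

/// Returns Cargo build-script directives for the given target environment.
/// Only musl targets get the aliases; any other environment gets nothing.
pub fn link_directives(target_env: &str) -> Vec<String> {
    if target_env != "musl" {
        return Vec::new();
    }
    defsym_link_args()
        .into_iter()
        .map(|arg| format!("cargo:rustc-link-arg={arg}"))
        .collect()
}

// Hypothetical build.rs entry point using the helpers above:
//
// fn main() {
//     let env = std::env::var("CARGO_CFG_TARGET_ENV").unwrap_or_default();
//     for line in link_directives(&env) {
//         println!("{line}");
//     }
// }
```

One design difference: gating on `target_env == "musl"` applies to every musl target, while the config file only lists `aarch64-unknown-linux-musl` and `x86_64-unknown-linux-musl`.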

.github/FUNDING.yml (vendored, Normal file, 15 changes)

@@ -0,0 +1,15 @@
+# These are supported funding model platforms
+
+github: sadoyan
+patreon: # Replace with a single Patreon username
+open_collective: # Replace with a single Open Collective username
+ko_fi: # Replace with a single Ko-fi username
+tidelift: # Replace with a single Tidelift platform-name/package-name e.g., npm/babel
+community_bridge: # Replace with a single Community Bridge project-name e.g., cloud-foundry
+liberapay: # Replace with a single Liberapay username
+issuehunt: # Replace with a single IssueHunt username
+lfx_crowdfunding: # Replace with a single LFX Crowdfunding project-name e.g., cloud-foundry
+polar: # Replace with a single Polar username
+buy_me_a_coffee: sadoyan
+thanks_dev: # Replace with a single thanks.dev username
+custom: # Replace with up to 4 custom sponsorship URLs e.g., ['link1', 'link2']

.gitignore (vendored, 3 changes)

@@ -5,9 +5,12 @@
 *.dll
 *.exe
 *.sh
+/docs/
+/docs
 /target/
 *.iml
 .idea/
+.etc/
 *.ipr
 *.iws
 /out/

Cargo.lock (generated, 2952 changes)

File diff suppressed because it is too large

Cargo.toml (63 changes)

@@ -1,6 +1,6 @@
 [package]
 name = "aralez"
-version = "0.9.1"
+version = "0.9.2"
 edition = "2021"

 [profile.release]
@@ -11,39 +11,38 @@ panic = "abort"
 strip = true

 [dependencies]
-tokio = { version = "1.45.1", features = ["full"] }
-#pingora = { version = "0.5.0", features = ["lb", "rustls"] } # openssl, rustls, boringssl
-pingora = { version = "0.5.0", features = ["lb", "openssl"] } # openssl, rustls, boringssl
-serde = { version = "1.0.219", features = ["derive"] }
+tokio = { version = "1.52.1", features = ["full"] }
+pingora = { version = "0.8.0", features = ["lb", "openssl"] } # openssl, rustls, boringssl
+serde = { version = "1.0.228", features = ["derive"] }
 dashmap = "7.0.0-rc2"
-pingora-core = "0.5.0"
-pingora-proxy = "0.5.0"
-pingora-http = "0.5.0"
-async-trait = "0.1.88"
-env_logger = "0.11.8"
-log = "0.4.27"
-futures = "0.3.31"
-notify = "8.0.0"
-axum = { version = "0.8.4" }
-axum-server = { version = "0.7.2", features = ["tls-openssl"] }
-reqwest = { version = "0.12.20", features = ["json", "native-tls-alpn"] }
-#reqwest = { version = "0.12.15", features = ["json", "rustls-tls"] }
-#reqwest = { version = "0.12.15", default-features = false, features = ["rustls-tls", "json"] }
-
-serde_yaml = "0.9.34-deprecated"
-rand = "0.9.0"
+pingora-core = "0.8.0"
+pingora-proxy = "0.8.0"
+pingora-http = "0.8.0"
+pingora-limits = "0.8.0"
+async-trait = "0.1.89"
+env_logger = "0.11.10"
+log = "0.4.29"
+futures = "0.3.32"
+notify = "9.0.0-rc.3"
+axum = { version = "0.8.9" }
+reqwest = { version = "0.13.2", features = ["json", "stream", "blocking"] }
+serde_yml = "0.0.12"
+rand = "0.10.1"
 base64 = "0.22.1"
-jsonwebtoken = "9.3.1"
-tonic = "0.13.1"
-sha2 = { version = "0.11.0-rc.0", default-features = false }
-base16ct = { version = "0.2.0", features = ["alloc"] }
+jsonwebtoken = { version = "10.3.0", default-features = false, features = ["use_pem", "rust_crypto"] }
+tonic = "0.14.5"
+sha2 = { version = "0.11.0-rc.5", default-features = false }
+base16ct = { version = "1.0.0", features = ["alloc"] }
 urlencoding = "2.1.3"
-arc-swap = "1.7.1"
-#rustls = { version = "0.23.27", features = ["ring"] }
-mimalloc = { version = "0.1.47", default-features = false }
+arc-swap = "1.9.1"
+mimalloc = { version = "0.1.50", default-features = false }
 prometheus = "0.14.0"
-lazy_static = "1.5.0"
-#openssl = "0.10.73"
-x509-parser = "0.17.0"
+x509-parser = "0.18.1"
 rustls-pemfile = "2.2.0"
-
+tower-http = { version = "0.6.8", features = ["fs"] }
+privdrop = "0.5.6"
+ctrlc = "3.5.2"
+serde_json = "1.0.149"
+subtle = "2.6.1"
+moka = { version = "0.12.1", features = ["sync"] }
+ahash = "0.8.12"
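This update adds `pingora-limits`, and the README changes further down list a per-virtualhost rate limiter, a global limit, and per-path limits that override the global one. The sketch below illustrates that precedence with a plain fixed one-second window counter built only on the standard library. It is a stand-in under stated assumptions, not Aralez's implementation and not the `pingora-limits` API (that crate provides a `Rate` estimator in `pingora_limits::rate`); the `Limits` and `FixedWindowLimiter` names are hypothetical, and so is the choice to count global limits per host.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

/// Hypothetical limit table: an optional global requests-per-second cap and
/// optional per-path caps that override it.
pub struct Limits {
    pub global_rps: Option<u64>,
    pub per_path_rps: HashMap<String, u64>,
}

/// Fixed one-second window counters. Per-path limits are counted per
/// (host, path); the global limit is counted per host.
pub struct FixedWindowLimiter {
    window: Duration,
    counters: HashMap<(String, Option<String>), (Instant, u64)>,
}

impl FixedWindowLimiter {
    pub fn new() -> Self {
        FixedWindowLimiter {
            window: Duration::from_secs(1),
            counters: HashMap::new(),
        }
    }

    /// Returns `true` if a request to `host` + `path` at `now` is allowed.
    pub fn allow(&mut self, limits: &Limits, host: &str, path: &str, now: Instant) -> bool {
        // A per-path limit takes precedence over the global one.
        let (key, limit) = match limits.per_path_rps.get(path) {
            Some(&l) => ((host.to_string(), Some(path.to_string())), l),
            None => match limits.global_rps {
                Some(l) => ((host.to_string(), None), l),
                None => return true, // no limit configured
            },
        };
        let entry = self.counters.entry(key).or_insert((now, 0));
        if now.duration_since(entry.0) >= self.window {
            *entry = (now, 0); // the previous window has expired
        }
        if entry.1 < limit {
            entry.1 += 1;
            true
        } else {
            false
        }
    }
}
```

A fixed window is the simplest choice but allows bursts of up to twice the limit across a window boundary; a sliding or estimated rate avoids that at the cost of more bookkeeping.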

LICENSE (Normal file, 201 changes)

@@ -0,0 +1,201 @@
+Apache License
+Version 2.0, January 2004
+http://www.apache.org/licenses/
+
+TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION
+
+1. Definitions.
+
+"License" shall mean the terms and conditions for use, reproduction,
+and distribution as defined by Sections 1 through 9 of this document.
+
+"Licensor" shall mean the copyright owner or entity authorized by
+the copyright owner that is granting the License.
+
+"Legal Entity" shall mean the union of the acting entity and all
+other entities that control, are controlled by, or are under common
+control with that entity. For the purposes of this definition,
+"control" means (i) the power, direct or indirect, to cause the
+direction or management of such entity, whether by contract or
+otherwise, or (ii) ownership of fifty percent (50%) or more of the
+outstanding shares, or (iii) beneficial ownership of such entity.
+
+"You" (or "Your") shall mean an individual or Legal Entity
+exercising permissions granted by this License.
+
+"Source" form shall mean the preferred form for making modifications,
+including but not limited to software source code, documentation
+source, and configuration files.
+
+"Object" form shall mean any form resulting from mechanical
+transformation or translation of a Source form, including but
+not limited to compiled object code, generated documentation,
+and conversions to other media types.
+
+"Work" shall mean the work of authorship, whether in Source or
+Object form, made available under the License, as indicated by a
+copyright notice that is included in or attached to the work
+(an example is provided in the Appendix below).
+
+"Derivative Works" shall mean any work, whether in Source or Object
+form, that is based on (or derived from) the Work and for which the
+editorial revisions, annotations, elaborations, or other modifications
+represent, as a whole, an original work of authorship. For the purposes
+of this License, Derivative Works shall not include works that remain
+separable from, or merely link (or bind by name) to the interfaces of,
+the Work and Derivative Works thereof.
+
+"Contribution" shall mean any work of authorship, including
+the original version of the Work and any modifications or additions
+to that Work or Derivative Works thereof, that is intentionally
+submitted to Licensor for inclusion in the Work by the copyright owner
+or by an individual or Legal Entity authorized to submit on behalf of
+the copyright owner. For the purposes of this definition, "submitted"
+means any form of electronic, verbal, or written communication sent
+to the Licensor or its representatives, including but not limited to
+communication on electronic mailing lists, source code control systems,
+and issue tracking systems that are managed by, or on behalf of, the
+Licensor for the purpose of discussing and improving the Work, but
+excluding communication that is conspicuously marked or otherwise
+designated in writing by the copyright owner as "Not a Contribution."
+
+"Contributor" shall mean Licensor and any individual or Legal Entity
+on behalf of whom a Contribution has been received by Licensor and
+subsequently incorporated within the Work.
+
+2. Grant of Copyright License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+copyright license to reproduce, prepare Derivative Works of,
+publicly display, publicly perform, sublicense, and distribute the
+Work and such Derivative Works in Source or Object form.
+
+3. Grant of Patent License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+(except as stated in this section) patent license to make, have made,
+use, offer to sell, sell, import, and otherwise transfer the Work,
+where such license applies only to those patent claims licensable
+by such Contributor that are necessarily infringed by their
+Contribution(s) alone or by combination of their Contribution(s)
+with the Work to which such Contribution(s) was submitted. If You
+institute patent litigation against any entity (including a
+cross-claim or counterclaim in a lawsuit) alleging that the Work
+or a Contribution incorporated within the Work constitutes direct
+or contributory patent infringement, then any patent licenses
+granted to You under this License for that Work shall terminate
+as of the date such litigation is filed.
+
+4. Redistribution. You may reproduce and distribute copies of the
+Work or Derivative Works thereof in any medium, with or without
+modifications, and in Source or Object form, provided that You
+meet the following conditions:
+
+(a) You must give any other recipients of the Work or
+Derivative Works a copy of this License; and
+
+(b) You must cause any modified files to carry prominent notices
+stating that You changed the files; and
+
+(c) You must retain, in the Source form of any Derivative Works
+that You distribute, all copyright, patent, trademark, and
+attribution notices from the Source form of the Work,
+excluding those notices that do not pertain to any part of
+the Derivative Works; and
+
+(d) If the Work includes a "NOTICE" text file as part of its
+distribution, then any Derivative Works that You distribute must
+include a readable copy of the attribution notices contained
+within such NOTICE file, excluding those notices that do not
+pertain to any part of the Derivative Works, in at least one
+of the following places: within a NOTICE text file distributed
+as part of the Derivative Works; within the Source form or
+documentation, if provided along with the Derivative Works; or,
+within a display generated by the Derivative Works, if and
+wherever such third-party notices normally appear. The contents
+of the NOTICE file are for informational purposes only and
+do not modify the License. You may add Your own attribution
+notices within Derivative Works that You distribute, alongside
+or as an addendum to the NOTICE text from the Work, provided
+that such additional attribution notices cannot be construed
+as modifying the License.
+
+You may add Your own copyright statement to Your modifications and
+may provide additional or different license terms and conditions
+for use, reproduction, or distribution of Your modifications, or
+for any such Derivative Works as a whole, provided Your use,
+reproduction, and distribution of the Work otherwise complies with
+the conditions stated in this License.
+
+5. Submission of Contributions. Unless You explicitly state otherwise,
+any Contribution intentionally submitted for inclusion in the Work
+by You to the Licensor shall be under the terms and conditions of
+this License, without any additional terms or conditions.
+Notwithstanding the above, nothing herein shall supersede or modify
+the terms of any separate license agreement you may have executed
+with Licensor regarding such Contributions.
+
+6. Trademarks. This License does not grant permission to use the trade
+names, trademarks, service marks, or product names of the Licensor,
+except as required for reasonable and customary use in describing the
+origin of the Work and reproducing the content of the NOTICE file.
+
+7. Disclaimer of Warranty. Unless required by applicable law or
+agreed to in writing, Licensor provides the Work (and each
+Contributor provides its Contributions) on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
+implied, including, without limitation, any warranties or conditions
+of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
+PARTICULAR PURPOSE. You are solely responsible for determining the
+appropriateness of using or redistributing the Work and assume any
+risks associated with Your exercise of permissions under this License.
+
+8. Limitation of Liability. In no event and under no legal theory,
+whether in tort (including negligence), contract, or otherwise,
+unless required by applicable law (such as deliberate and grossly
+negligent acts) or agreed to in writing, shall any Contributor be
+liable to You for damages, including any direct, indirect, special,
+incidental, or consequential damages of any character arising as a
+result of this License or out of the use or inability to use the
+Work (including but not limited to damages for loss of goodwill,
+work stoppage, computer failure or malfunction, or any and all
+other commercial damages or losses), even if such Contributor
+has been advised of the possibility of such damages.
+
+9. Accepting Warranty or Additional Liability. While redistributing
+the Work or Derivative Works thereof, You may choose to offer,
+and charge a fee for, acceptance of support, warranty, indemnity,
+or other liability obligations and/or rights consistent with this
+License. However, in accepting such obligations, You may act only
+on Your own behalf and on Your sole responsibility, not on behalf
+of any other Contributor, and only if You agree to indemnify,
+defend, and hold each Contributor harmless for any liability
+incurred by, or claims asserted against, such Contributor by reason
+of your accepting any such warranty or additional liability.
+
+END OF TERMS AND CONDITIONS
+
+APPENDIX: How to apply the Apache License to your work.
+
+To apply the Apache License to your work, attach the following
+boilerplate notice, with the fields enclosed by brackets "[]"
+replaced with your own identifying information. (Don't include
+the brackets!) The text should be enclosed in the appropriate
+comment syntax for the file format. We also recommend that a
+file or class name and description of purpose be included on the
+same "printed page" as the copyright notice for easier
+identification within third-party archives.
+
+Copyright [yyyy] [name of copyright owner]
+
+Licensed under the Apache License, Version 2.0 (the "License");
+you may not use this file except in compliance with the License.
+You may obtain a copy of the License at
+
+http://www.apache.org/licenses/LICENSE-2.0
+
+Unless required by applicable law or agreed to in writing, software
+distributed under the License is distributed on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+See the License for the specific language governing permissions and
+limitations under the License.

120

METRICS.md

120

METRICS.md

@@ -1,120 +0,0 @@

|

|||||||

# 📈 Aralez Prometheus Metrics Reference

|

|

||||||

|

|

||||||

This document outlines Prometheus metrics for the [Aralez](https://github.com/sadoyan/aralez) reverse proxy.

|

|

||||||

These metrics can be used for monitoring, alerting and performance analysis.

|

|

||||||

|

|

||||||

Exposed to `http://config_address/metrics`

|

|

||||||

|

|

||||||

By default `http://127.0.0.1:3000/metrics`

|

|

||||||

|

|

||||||

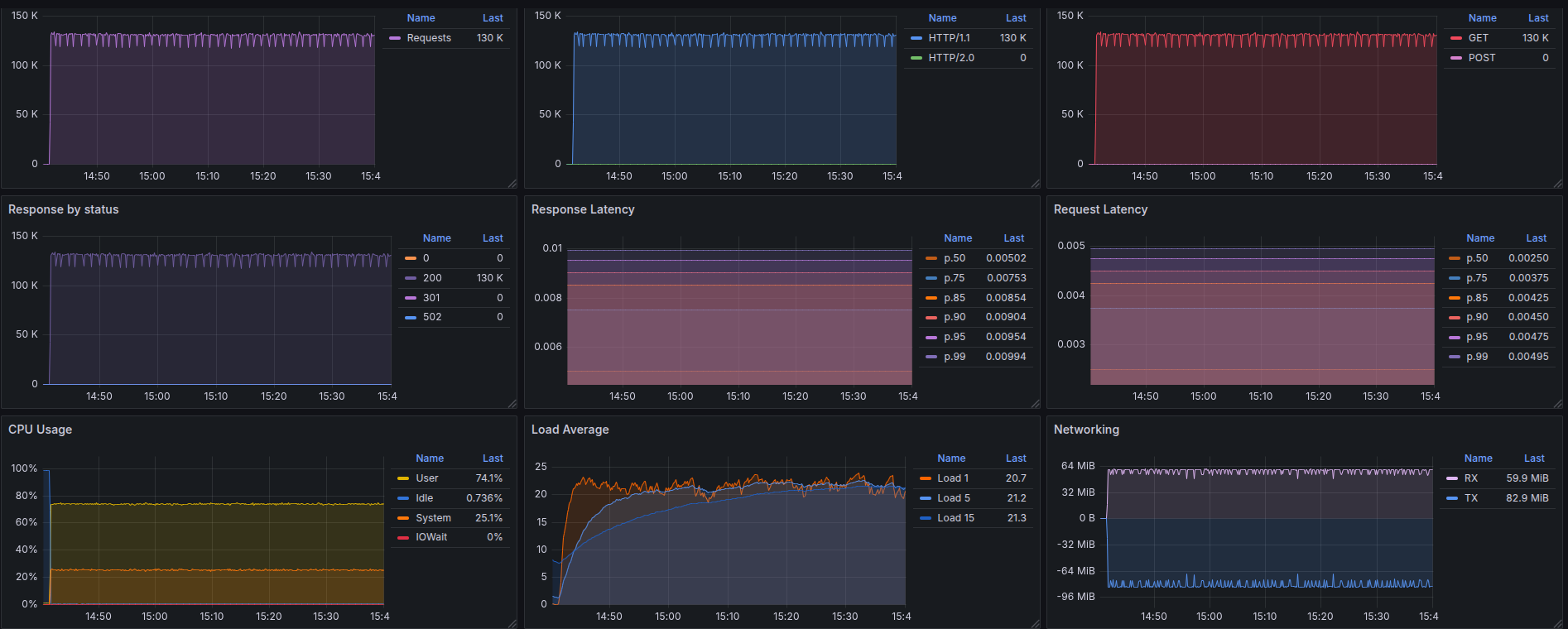

# 📊 Example Grafana dashboard during stress test :

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

## 🛠️ Prometheus Metrics

|

|

||||||

|

|

||||||

### 1. `aralez_requests_total`

|

|

||||||

|

|

||||||

- **Type**: `Counter`

|

|

||||||

- **Purpose**: Total amount requests served by Aralez.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_requests_total[5m])

|

|

||||||

```

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

### 2. `aralez_errors_total`

|

|

||||||

|

|

||||||

- **Type**: `Counter`

|

|

||||||

- **Purpose**: Count of requests that resulted in an error.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_errors_total[5m])

|

|

||||||

```

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

### 3. `aralez_responses_total{status="200"}`

|

|

||||||

|

|

||||||

- **Type**: `CounterVec`

|

|

||||||

- **Purpose**: Count of responses by HTTP status code.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_responses_total{status=~"5.."}[5m]) > 0

|

|

||||||

```

|

|

||||||

|

|

||||||

> Useful for alerting on 5xx errors.

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

### 4. `aralez_response_latency_seconds`

|

|

||||||

|

|

||||||

- **Type**: `Histogram`

|

|

||||||

- **Purpose**: Tracks the latency of responses in seconds.

|

|

||||||

|

|

||||||

**Example bucket output:**

|

|

||||||

|

|

||||||

```prometheus

|

|

||||||

aralez_response_latency_seconds_bucket{le="0.01"} 15

|

|

||||||

aralez_response_latency_seconds_bucket{le="0.1"} 120

|

|

||||||

aralez_response_latency_seconds_bucket{le="0.25"} 245

|

|

||||||

aralez_response_latency_seconds_bucket{le="0.5"} 500

|

|

||||||

...

|

|

||||||

aralez_response_latency_seconds_count 1023

|

|

||||||

aralez_response_latency_seconds_sum 42.6

|

|

||||||

```

|

|

||||||

|

|

||||||

| Metric | Meaning |

|

|

||||||

|-------------------------|---------------------------------------------------------------|

|

|

||||||

| `bucket{le="0.1"} 120` | 120 requests were ≤ 100ms |

|

|

||||||

| `bucket{le="0.25"} 245` | 245 requests were ≤ 250ms |

|

|

||||||

| `count` | Total number of observations (i.e., total responses measured) |

|

|

||||||

| `sum` | Total time of all responses, in seconds |

|

|

||||||

|

|

||||||

### 🔍 How to interpret:

|

|

||||||

|

|

||||||

- `le` means “less than or equal to”.

|

|

||||||

- `count` is total amount of observations.

|

|

||||||

- `sum` is the total time (in seconds) of all responses.

|

|

||||||

|

|

||||||

**PromQL examples:**

|

|

||||||

|

|

||||||

🔹 **95th percentile latency**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

histogram_quantile(0.95, rate(aralez_response_latency_seconds_bucket[5m]))

|

|

||||||

|

|

||||||

```

|

|

||||||

|

|

||||||

🔹 **Average latency**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_response_latency_seconds_sum[5m]) / rate(aralez_response_latency_seconds_count[5m])

|

|

||||||

```

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

## ✅ Notes

|

|

||||||

|

|

||||||

- Metrics are registered after the first served request.

|

|

||||||

|

|

||||||

---

|

|

||||||

✅ Summary of key metrics

|

|

||||||

|

|

||||||

| Metric Name | Type | What it Tells You |

|

|

||||||

|---------------------------------------|------------|---------------------------|

|

|

||||||

| `aralez_requests_total` | Counter | Total requests served |

|

|

||||||

| `aralez_errors_total` | Counter | Number of failed requests |

|

|

||||||

| `aralez_responses_total{status="200"}` | CounterVec | Response status breakdown |

|

|

||||||

| `aralez_response_latency_seconds` | Histogram | How fast responses are |

|

|

||||||

|

|

||||||

📘 *Last updated: May 2025*

|

|

||||||
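METRICS.md is deleted in this comparison, but the histogram arithmetic it describes applies to any Prometheus histogram. As a sketch under stated assumptions, the functions below estimate a quantile the way `histogram_quantile` does: they use linear interpolation inside the bucket that contains the requested rank, assume buckets sorted by `le` with positive bounds and a final `+Inf` bucket, and compute average latency as `sum / count`. This is an illustration of the math, not code from Aralez or Prometheus.

```rust
/// One cumulative bucket: `count` observations were <= `le` seconds.
#[derive(Clone, Copy, Debug)]
pub struct Bucket {
    pub le: f64,
    pub count: f64,
}

/// Estimates quantile `q` from cumulative buckets sorted by `le`, assuming
/// positive bounds and a final `+Inf` bucket whose count equals `_count`.
pub fn histogram_quantile(q: f64, buckets: &[Bucket]) -> Option<f64> {
    if !(0.0..=1.0).contains(&q) || buckets.len() < 2 {
        return None;
    }
    let total = buckets.last()?.count;
    if total <= 0.0 {
        return None;
    }
    let rank = q * total;
    // First bucket whose cumulative count reaches the rank.
    let idx = buckets.iter().position(|b| b.count >= rank)?;
    if buckets[idx].le.is_infinite() {
        // Rank falls in +Inf: report the highest finite upper bound.
        return if idx == 0 { None } else { Some(buckets[idx - 1].le) };
    }
    let (lower, below) = if idx == 0 {
        (0.0, 0.0)
    } else {
        (buckets[idx - 1].le, buckets[idx - 1].count)
    };
    let in_bucket = buckets[idx].count - below;
    if in_bucket <= 0.0 {
        return Some(buckets[idx].le);
    }
    // Linear interpolation between the bucket's lower and upper bound.
    Some(lower + (buckets[idx].le - lower) * (rank - below) / in_bucket)
}

/// Average latency: `_sum / _count` (or the ratio of their rates).
pub fn average_latency(sum: f64, count: f64) -> Option<f64> {
    if count > 0.0 {
        Some(sum / count)
    } else {
        None
    }
}
```

For instance, with hypothetical buckets `le=0.1 → 50`, `le=0.2 → 90` and `+Inf → 100` (made up, since the example in the file elides buckets with `...`), the 0.75 quantile has rank 75 and lands in the second bucket: 0.1 + 0.1 × (75 - 50) / 40 = 0.1625 s. With the file's own `sum` and `count`, the average is 42.6 / 1023 ≈ 0.0416 s.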

README.md (148 changes)

@@ -1,19 +1,33 @@


-# Aralez (Արալեզ), Reverse proxy and service mesh built on top of Cloudflare's Pingora
+---

+# Aralez (Արալեզ),
+
+### **Reverse proxy built on top of Cloudflare's Pingora**
+
+Aralez is a high-performance Rust reverse proxy with zero-configuration automatic protocol handling, TLS, and upstream management,
+featuring Consul and Kubernetes integration for dynamic pod discovery and health-checked routing, acting as a lightweight ingress-style proxy.
+
+---
 What Aralez means ?
-**Aralez = Արալեզ** <ins>.Named after the legendary Armenian guardian spirit, winged dog-like creature, that descend upon fallen heroes to lick their wounds and resurrect them.</ins>.
+**Aralez = Արալեզ** <ins>Named after the legendary Armenian guardian spirit, winged dog-like creature, that descend upon fallen heroes to lick their wounds and resurrect them</ins>.

 Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers world-class performance, security and scalability — right out of the box.

+[](https://www.buymeacoffee.com/sadoyan)
+
 ---

 ## 🔧 Key Features

 - **Dynamic Config Reloads** — Upstreams can be updated live via API, no restart required.
 - **TLS Termination** — Built-in OpenSSL support.
+- **Automatic loading of certificates** — Automatically reads and loads certificates from a folder, without a restart.
 - **Upstreams TLS detection** — Aralez will automatically detect if upstreams uses secure connection.
+- **Built in rate limiter** — Limit requests to server, by setting up upper limit for requests per seconds, per virtualhost.
+- **Global rate limiter** — Set rate limit for all virtualhosts.
+- **Per path rate limiter** — Set rate limit for specific paths. Path limits will override global limits.
 - **Authentication** — Supports Basic Auth, API tokens, and JWT verification.
 - **Basic Auth**
 - **API Key** via `x-api-key` header
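The Key Features list mentions API keys passed in the `x-api-key` header, and the Cargo.toml update adds the `subtle` crate, which is commonly used for constant-time comparisons; whether Aralez uses it for this check is not shown here. The std-only sketch below illustrates the idea: compare the presented key against every configured key without returning early on the first mismatching byte. The function names are hypothetical, and a real implementation would more likely use `subtle::ConstantTimeEq`, since a plain loop like this gives no guarantee that the optimizer keeps it branch-free.

```rust
/// Compares two byte strings without returning early on the first
/// mismatching byte; only a length mismatch short-circuits.
pub fn constant_time_eq(a: &[u8], b: &[u8]) -> bool {
    if a.len() != b.len() {
        return false;
    }
    let mut diff: u8 = 0;
    for (x, y) in a.iter().zip(b.iter()) {
        diff |= x ^ y; // accumulate differences instead of branching
    }
    diff == 0
}

/// Hypothetical `x-api-key` check; `header_value` is the raw header, if any.
pub fn api_key_allowed(header_value: Option<&str>, valid_keys: &[&str]) -> bool {
    let presented = match header_value {
        Some(v) => v.as_bytes(),
        None => return false, // header missing
    };
    // Check every configured key instead of stopping at the first match.
    let mut allowed = false;
    for key in valid_keys {
        allowed |= constant_time_eq(presented, key.as_bytes());
    }
    allowed
}
```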

@@ -24,6 +38,7 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
 - Failover with health checks
 - Sticky sessions via cookies
 - **Unified Port** — Serve HTTP and WebSocket traffic over the same connection.
+- **Built in file server** — Build in minimalistic file server for serving static files, should be added as upstreams for public access.
 - **Memory Safe** — Created purely on Rust.
 - **High Performance** — Built with [Pingora](https://github.com/cloudflare/pingora) and tokio for async I/O.

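The list above pairs sticky sessions via cookies with failover driven by health checks. A minimal sketch of how those two rules could combine: honour the upstream named in a session cookie only while it is healthy, and otherwise fall back to round-robin over the healthy upstreams. The `Upstream` type, the helper names and storing the raw upstream address in the cookie are assumptions for illustration; production proxies usually store an opaque identifier instead.

```rust
/// Hypothetical upstream entry; `healthy` would be updated by health checks.
pub struct Upstream {
    pub addr: String,
    pub healthy: bool,
}

/// Extracts a cookie value from a raw `Cookie` header such as "a=1; b=2".
pub fn cookie_value<'a>(cookie_header: &'a str, name: &str) -> Option<&'a str> {
    cookie_header.split(';').map(str::trim).find_map(|pair| {
        let (k, v) = pair.split_once('=')?;
        if k == name {
            Some(v)
        } else {
            None
        }
    })
}

/// Picks an upstream index: the sticky one if it is named and healthy,
/// otherwise round-robin over the healthy upstreams (failover).
pub fn pick_upstream(upstreams: &[Upstream], sticky: Option<&str>, rr: &mut usize) -> Option<usize> {
    if let Some(wanted) = sticky {
        if let Some(i) = upstreams.iter().position(|u| u.healthy && u.addr == wanted) {
            return Some(i);
        }
    }
    let healthy: Vec<usize> = upstreams
        .iter()
        .enumerate()
        .filter(|(_, u)| u.healthy)
        .map(|(i, _)| i)
        .collect();
    if healthy.is_empty() {
        return None; // nothing left to fail over to
    }
    let choice = healthy[*rr % healthy.len()];
    *rr = rr.wrapping_add(1);
    Some(choice)
}
```

When the fallback path is taken, a proxy would typically send a fresh `Set-Cookie` so the client sticks to the newly chosen upstream.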

@@ -62,10 +77,10 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
 ### 🔧 `main.yaml`

 | Key | Example Value | Description |
-|----------------------------------|--------------------------------------|--------------------------------------------------------------------------------------------------|
+|----------------------------------|--------------------------------------|----------------------------------------------------------------------------------------------------|
 | **threads** | 12 | Number of running daemon threads. Optional, defaults to 1 |
-| **user** | aralez | Optional, Username for running aralez after dropping root privileges, requires to launch as root |
-| **group** | aralez | Optional,Group for running aralez after dropping root privileges, requires to launch as root |
+| **runuser** | aralez | Optional, Username for running aralez after dropping root privileges, requires to launch as root |
+| **rungroup** | aralez | Optional,Group for running aralez after dropping root privileges, requires to launch as root |
 | **daemon** | false | Run in background (boolean) |
 | **upstream_keepalive_pool_size** | 500 | Pool size for upstream keepalive connections |
 | **pid_file** | /tmp/aralez.pid | Path to PID file |
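This hunk renames the privilege-drop keys from `user`/`group` to `runuser`/`rungroup` and documents `threads` as defaulting to 1. As an illustration only, here is a tiny parser for the flat `key: value` settings in this part of the table, with those defaults applied. It is not how Aralez loads `main.yaml` (the dependency list suggests a YAML crate such as `serde_yml`); `MainConfig` and `parse_main` are hypothetical, unknown keys (including the old `user`/`group` names) are ignored, and no nesting or quoting is handled.

```rust
use std::collections::HashMap;

/// Hypothetical subset of `main.yaml` covered by the hunk above.
#[derive(Debug, PartialEq)]
pub struct MainConfig {
    pub threads: usize,
    pub runuser: Option<String>,
    pub rungroup: Option<String>,
    pub daemon: bool,
    pub upstream_keepalive_pool_size: Option<usize>,
    pub pid_file: Option<String>,
}

/// Parses flat `key: value` lines only; anything after `#` is dropped.
pub fn parse_main(text: &str) -> Result<MainConfig, String> {
    let mut kv: HashMap<String, String> = HashMap::new();
    for raw in text.lines() {
        let line = raw.split('#').next().unwrap_or("").trim();
        if line.is_empty() {
            continue;
        }
        let (k, v) = line
            .split_once(':')
            .ok_or_else(|| format!("expected `key: value`, got {line:?}"))?;
        kv.insert(k.trim().to_string(), v.trim().to_string());
    }
    let threads = match kv.get("threads") {
        Some(v) => v.parse::<usize>().map_err(|e| format!("threads: {e}"))?,
        None => 1, // documented default
    };
    let daemon = match kv.get("daemon") {
        Some(v) => v.parse::<bool>().map_err(|e| format!("daemon: {e}"))?,
        None => false,
    };
    let upstream_keepalive_pool_size = match kv.get("upstream_keepalive_pool_size") {
        Some(v) => Some(
            v.parse::<usize>()
                .map_err(|e| format!("upstream_keepalive_pool_size: {e}"))?,
        ),
        None => None,
    };
    Ok(MainConfig {
        threads,
        runuser: kv.get("runuser").cloned(),
        rungroup: kv.get("rungroup").cloned(),
        daemon,
        upstream_keepalive_pool_size,
        pid_file: kv.get("pid_file").cloned(),
    })
}
```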

@@ -74,6 +89,7 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
 | **config_address** | 0.0.0.0:3000 | HTTP API address for pushing upstreams.yaml from remote location |
 | **config_tls_address** | 0.0.0.0:3001 | HTTPS API address for pushing upstreams.yaml from remote location |
 | **config_tls_certificate** | etc/server.crt | Certificate file path for API. Mandatory if proxy_address_tls is set, else optional |
+| **proxy_tls_grade** | (high, medium, unsafe) | Grade of TLS ciphers, for easy configuration. High matches Qualys SSL Labs A+ (defaults to medium) |
 | **config_tls_key_file** | etc/key.pem | Private Key file path. Mandatory if proxy_address_tls is set, else optional |
 | **proxy_address_http** | 0.0.0.0:6193 | Aralez HTTP bind address |
 | **proxy_address_tls** | 0.0.0.0:6194 | Aralez HTTPS bind address (Optional) |

```diff
@@ -83,6 +99,9 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
 | **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |
 | **hc_interval** | 2 | Interval for health checks in seconds |
 | **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |
+| **file_server_folder** | /some/local/folder | Optional, local folder to serve |
+| **file_server_address** | 127.0.0.1:3002 | Optional, Local address for file server. Can set as upstream for public access |
+| **config_api_enabled** | true | Boolean to enable/disable remote config push capability |
 
 ### 🌐 `upstreams.yaml`
 
```

````diff
@@ -104,11 +123,42 @@ Make the binary executable `chmod 755 ./aralez-VERSION` and run.
 File names:
 
 | File Name | Description |
-|---------------------------|---------------------------------------------------------------|
+|---------------------------------|--------------------------------------------------------------------------|
 | `aralez-x86_64-musl.gz` | Static Linux x86_64 binary, without any system dependency |
 | `aralez-x86_64-glibc.gz` | Dynamic Linux x86_64 binary, with minimal system dependencies |
+| `aralez-x86_64-compat-musl.gz` | Static Linux x86_64 binary, compatible with old pre Haswell CPUs |
+| `aralez-x86_64-compat-glibc.gz` | Dynamic Linux x86_64 binary, compatible with old pre Haswell CPUs |
 | `aralez-aarch64-musl.gz` | Static Linux ARM64 binary, without any system dependency |
 | `aralez-aarch64-glibc.gz` | Dynamic Linux ARM64 binary, with minimal system dependencies |
+| `sadoyan/aralez` | Docker image on Debian 13 slim (https://hub.docker.com/r/sadoyan/aralez) |
 
+**Via docker**
+
+```shell
+docker run -d \
+-v /local/path/to/config:/etc/aralez:ro \
+-p 80:80 \
+-p 443:443 \
+sadoyan/aralez
+```
+
+## 💡 Note
+
+In general **glibc** builds are working faster, but have few, basic, system dependencies for example :
+
+```
+linux-vdso.so.1 (0x00007ffeea33b000)
+libgcc_s.so.1 => /lib/x86_64-linux-gnu/libgcc_s.so.1 (0x00007f09e7377000)
+libm.so.6 => /lib/x86_64-linux-gnu/libm.so.6 (0x00007f09e6320000)
+libc.so.6 => /lib/x86_64-linux-gnu/libc.so.6 (0x00007f09e613f000)
+/lib64/ld-linux-x86-64.so.2 (0x00007f09e73b1000)
+```
+
+These are common to any Linux systems, so the binary should work on almost any Linux system.
+
+**musl** builds are 100% portable, static compiled binaries and have zero system depencecies.
+In general musl builds have a little less performance.
+The most intensive tests shows 107k-110k requests per second on **Glibc** binaries against 97k-100k **Musl** ones.
+
 ## 🔌 Running the Proxy
 
````

```diff
@@ -142,7 +192,11 @@ A sample `upstreams.yaml` entry:
 provider: "file"
 sticky_sessions: false
 to_https: false
-headers:
+rate_limit: 10
+server_headers:
+  - "X-Forwarded-Proto:https"
+  - "X-Forwarded-Port:443"
+client_headers:
   - "Access-Control-Allow-Origin:*"
   - "Access-Control-Allow-Methods:POST, GET, OPTIONS"
   - "Access-Control-Max-Age:86400"
```
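The sample now splits custom headers into `server_headers` and `client_headers`. Each entry is a single `"Name:Value"` string. Below is a minimal Rust sketch of how such an entry could be split. It assumes the split happens at the first colon and that surrounding whitespace is trimmed. `parse_header` and `parse_headers` are hypothetical helpers, not Aralez's own parser.

```rust
/// Split a config entry such as "X-Forwarded-Proto:https" into (name, value).
/// Hypothetical helper: it splits at the FIRST ':' so values that contain
/// ':' (URLs, ports) or commas ("POST, GET, OPTIONS") survive intact.
fn parse_header(entry: &str) -> Option<(String, String)> {
    let (name, value) = entry.split_once(':')?; // None if there is no ':'
    let name = name.trim();
    if name.is_empty() {
        return None; // a header needs a name
    }
    Some((name.to_string(), value.trim().to_string()))
}

/// Parse a whole list, skipping malformed entries instead of failing.
fn parse_headers(entries: &[&str]) -> Vec<(String, String)> {
    entries.iter().filter_map(|e| parse_header(e)).collect()
}
```

Splitting at the first colon keeps values like `POST, GET, OPTIONS` intact, and the same holds for values that themselves contain `:`. Whether Aralez trims whitespace or rejects malformed entries is not stated here.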

```diff
@@ -152,8 +206,12 @@ authorization:
   myhost.mydomain.com:
     paths:
       "/":
+        rate_limit: 20
         to_https: false
-        headers:
+        server_headers:
+          - "X-Something-Else:Foobar"
+          - "X-Another-Header:Hohohohoho"
+        client_headers:
           - "X-Some-Thing:Yaaaaaaaaaaaaaaa"
           - "X-Proxy-From:Hopaaaaaaaaaaaar"
         servers:
```

````diff
@@ -161,24 +219,36 @@ myhost.mydomain.com:
           - "127.0.0.2:8000"
       "/foo":
         to_https: true
-        headers:
+        client_headers:
           - "X-Another-Header:Hohohohoho"
         servers:
           - "127.0.0.4:8443"
           - "127.0.0.5:8443"
+      "/.well-known/acme-challenge":
+        healthcheck: false
+        servers:
+          - "127.0.0.1:8001"
 ```
 
 **This means:**
 
 - Sticky sessions are disabled globally. This setting applies to all upstreams. If enabled all requests will be 301 redirected to HTTPS.
 - HTTP to HTTPS redirect disabled globally, but can be overridden by `to_https` setting per upstream.
+- All upstreams will receive custom headers : `X-Forwarded-Proto:https` and `X-Forwarded-Port:443`
+- Additionally, myhost.mydomain.com with path `/` will receive custom headers : `X-Another-Header:Hohohohoho` and `X-Something-Else:Foobar`
+- Requests to each hosted domains will be limited to 10 requests per second per virtualhost.
+- Requests limits are calculated per requester ip plus requested virtualhost.
+- If the requester exceeds the limit it will receive `429 Too Many Requests` error.
+- Optional. Rate limiter will be disabled if the parameter is entirely removed from config.
+- Requests to `myhost.mydomain.com/` will be limited to 20 requests per second.
 - Requests to `myhost.mydomain.com/` will be proxied to `127.0.0.1` and `127.0.0.2`.
 - Plain HTTP to `myhost.mydomain.com/foo` will get 301 redirect to configured TLS port of Aralez.
 - Requests to `myhost.mydomain.com/foo` will be proxied to `127.0.0.4` and `127.0.0.5`.
+- Requests to `myhost.mydomain.com/.well-known/acme-challenge` will be proxied to `127.0.0.1:8001`, but healthcheks are disabled.
 - SSL/TLS for upstreams is detected automatically, no need to set any config parameter.
 - Assuming the `127.0.0.5:8443` is SSL protected. The inner traffic will use TLS.
-- Self signed certificates are silently accepted.
-- Global headers (CORS for this case) will be injected to all upstreams
+- Self-signed certificates are silently accepted.
+- Global headers (CORS for this case) will be injected to all upstreams.
 - Additional headers will be injected into the request for `myhost.mydomain.com`.
 - You can choose any path, deep nested paths are supported, the best match chosen.
 - All requests to servers will require JWT token authentication (You can comment out the authorization to disable it),
````
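The rate-limit bullets in this README hunk describe several rules:

- Limits are counted per requester IP plus virtual host.
- A `429 Too Many Requests` is returned once the limit is exceeded.
- A per-path `rate_limit` (20 for `myhost.mydomain.com/`) sits next to the global one (10).
- Nothing is limited when the parameter is absent.

Below is a minimal sketch of those rules. It assumes a fixed one-second window and that a per-path value replaces the global one. Aralez's real limiter may use a different algorithm, and `RateLimiter`, `check` and `effective_limit` are hypothetical names.

```rust
use std::collections::HashMap;
use std::net::IpAddr;
use std::time::Instant;

/// Hypothetical fixed-window limiter keyed by (client IP, virtual host).
/// Each key may make `limit` requests per one-second window; windows are
/// numbered by whole seconds elapsed since the limiter was created.
/// Stale keys are never evicted here; a long-running limiter would prune them.
struct RateLimiter {
    start: Instant,
    // (ip, host) -> (window number, requests counted in that window)
    windows: HashMap<(IpAddr, String), (u64, u32)>,
}

impl RateLimiter {
    fn new() -> Self {
        RateLimiter { start: Instant::now(), windows: HashMap::new() }
    }

    /// Returns the status to answer with: 200 if allowed, 429 if over the limit.
    /// `now` is a parameter so the behaviour can be tested without sleeping.
    fn check(&mut self, ip: IpAddr, host: &str, limit: Option<u32>, now: Instant) -> u16 {
        let Some(limit) = limit else {
            return 200; // no rate_limit configured: limiter disabled
        };
        let window = now.saturating_duration_since(self.start).as_secs();
        let slot = self
            .windows
            .entry((ip, host.to_string()))
            .or_insert((window, 0));
        if slot.0 != window {
            *slot = (window, 0); // a new second has started: reset the count
        }
        slot.1 += 1;
        if slot.1 > limit { 429 } else { 200 }
    }
}

/// Assumption: a per-path `rate_limit` replaces the global one; None = no limit.
fn effective_limit(global: Option<u32>, per_path: Option<u32>) -> Option<u32> {
    per_path.or(global)
}
```

A fixed window is the simplest choice but lets a client burst up to twice the limit across a window boundary. A sliding window or token bucket smooths that out at the cost of a little more state per key.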

@@ -284,20 +354,33 @@ curl -u username:password -H 'Host: myip.mydomain.com' http://127.0.0.1:6193/

|

|||||||

- Sticky session support.

|

- Sticky session support.

|

||||||

- HTTP2 ready.

|

- HTTP2 ready.

|

||||||

|

|

||||||

📊 Why Choose Aralez? – Feature Comparison

|

### 🧩 Summary Table: Feature Comparison

|

||||||

|

|

||||||

| Feature | **Aralez** | **Nginx** | **HAProxy** | **Traefik** |

|

| Feature / Proxy | **Aralez** | **Nginx** | **HAProxy** | **Traefik** | **Caddy** | **Envoy** |

|

||||||

|----------------------------|----------------------------------------------------------------------|--------------------------|-------------------------|-----------------|

|

|----------------------------------|:-----------------:|:---------------------------:|:-----------------:|:--------------------------------:|:---------------:|:---------------:|

|

||||||

| **Hot Reload** | ✅ Yes (live, API/file) | ⚠️ Reloads config | ⚠️ Reloads config | ✅ Yes (dynamic) |

|

| **Hot Reload (Zero Downtime)** | ✅ **Automatic** | ⚙️ Manual (graceful reload) | ⚙️ Manual | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

| **JWT Auth** | ✅ Built-in | ❌ External scripts | ❌ External Lua or agent | ⚠️ With plugins |

|

| **Auto Cert Reload (from disk)** | ✅ **Automatic** | ❌ No | ❌ No | ✅ Automatic (Let's Encrypt only) | ✅ Automatic | ⚙️ Manual |

|

||||||

| **WebSocket Support** | ✅ Automatic | ⚠️ Manual config | ✅ Yes | ✅ Yes |

|

| **Auth: Basic / API Key / JWT** | ✅ **Built-in** | ⚙️ Basic only | ⚙️ Basic only | ✅ Config-based | ✅ Config-based | ✅ Config-based |

|

||||||

| **gRPC Support** | ✅ Automatic (no config) | ⚠️ Manual + HTTP/2 + TLS | ⚠️ Complex setup | ✅ Native |

|

| **TLS / HTTP2 Termination** | ✅ **Automatic** | ⚙️ Manual config | ⚙️ Manual config | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

| **TLS Termination** | ✅ Built-in (OpenSSL) | ✅ Yes | ✅ Yes | ✅ Yes |

|

| **Built-in A+ TLS Grades** | ✅ **Automatic** | ⚙️ Manual tuning | ⚙️ Manual | ⚙️ Manual | ✅ Automatic | ⚙️ Manual |

|

||||||

| **TLS Upstream Detection** | ✅ Automatic | ❌ | ❌ | ❌ |

|

| **gRPC Proxy** | ✅ **Zero-Config** | ⚙️ Manual setup | ⚙️ Manual | ⚙️ Needs config | ⚙️ Needs config | ⚙️ Needs config |

|

||||||

| **HTTP/2 Support** | ✅ Automatic | ⚠️ Requires extra config | ⚠️ Requires build flags | ✅ Native |

|

| **SSL Proxy** | ✅ **Zero-Config** | ⚙️ Manual | ⚙️ Manual | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

| **Sticky Sessions** | ✅ Cookie-based | ⚠️ In plus version only | ✅ | ✅ |

|

| **HTTP/2 Proxy** | ✅ **Zero-Config** | ⚙️ Manual enable | ⚙️ Manual enable | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

| **Prometheus Metrics** | ✅ [Built in](https://github.com/sadoyan/aralez/blob/main/METRICS.md) | ⚠️ With Lua or exporter | ⚠️ With external script | ✅ Native |

|

| **WebSocket Proxy** | ✅ **Zero-Config** | ⚙️ Manual upgrade | ⚙️ Manual upgrade | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

| **Built With** | 🦀 Rust | C | C | Go |

|

| **Sticky Sessions** | ✅ **Built-in** | ⚙️ Config-based | ⚙️ Config-based | ✅ Automatic | ⚙️ Limited | ✅ Config-based |

|

||||||

|

| **Prometheus Metrics** | ✅ **Built-in** | ⚙️ External exporter | ✅ Built-in | ✅ Built-in | ✅ Built-in | ✅ Built-in |

|

||||||

|

| **Consul Integration** | ✅ **Yes** | ❌ No | ⚙️ Via DNS only | ✅ Yes | ❌ No | ✅ Yes |

|

||||||

|

| **Kubernetes Integration** | ✅ **Yes** | ⚙️ Needs ingress setup | ⚙️ External | ✅ Yes | ⚙️ Limited | ✅ Yes |

|

||||||

|

| **Request Limiter** | ✅ **Yes** | ✅ Config-based | ✅ Config-based | ✅ Config-based | ✅ Config-based | ✅ Config-based |

|

||||||

|

| **Serve Static Files** | ✅ **Yes** | ✅ Yes | ⚙️ Basic | ✅ Automatic | ✅ Automatic | ❌ No |

|

||||||

|

| **Upstream Health Checks** | ✅ **Automatic** | ⚙️ Manual config | ⚙️ Manual config | ✅ Automatic | ✅ Automatic | ✅ Automatic |

|

||||||

|

| **Built With** | 🦀 **Rust** | C | C | Go | Go | C++ |

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

✅ **Automatic / Zero-Config** – Works immediately, no setup required

|

||||||

|

⚙️ **Manual / Config-based** – Requires explicit configuration or modules

|

||||||

|

❌ **No** – Not supported

|

||||||

|

|

||||||

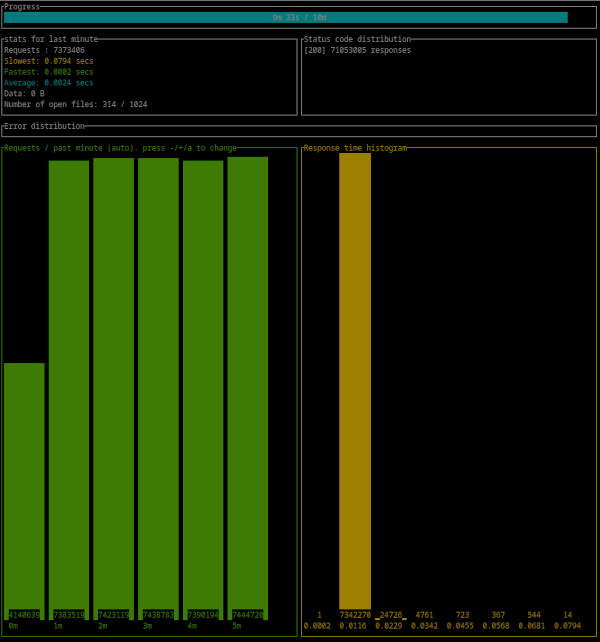

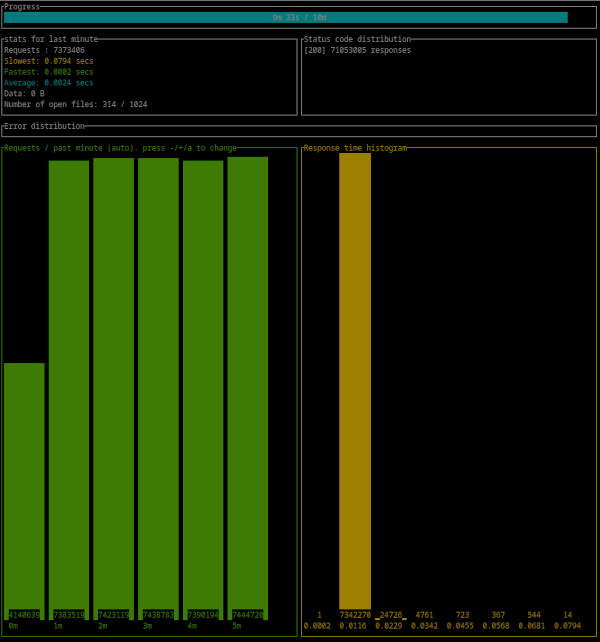

## 💡 Simple benchmark by [Oha](https://github.com/hatoo/oha)

|

## 💡 Simple benchmark by [Oha](https://github.com/hatoo/oha)

|

||||||

|

|

||||||

@@ -443,3 +526,20 @@ Error distribution:

|

|||||||

```

|

```

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

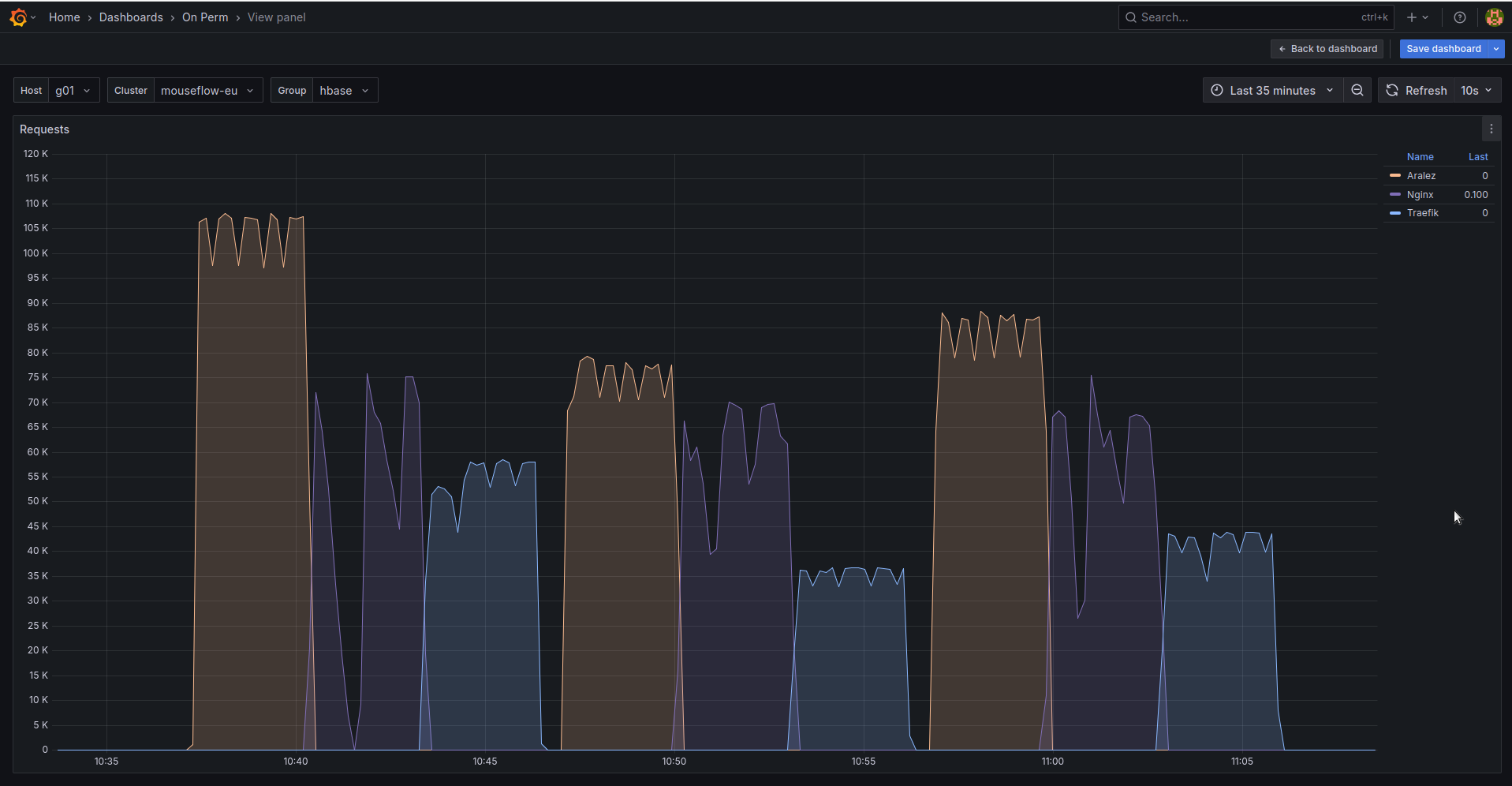

## 🚀 Aralez, Nginx, Traefik performance benchmark

|

||||||

|

|

||||||

|

This benchmark is done on 4 servers. With CPU Intel(R) Xeon(R) E-2174G CPU @ 3.80GHz, 64 GB RAM.

|

||||||

|

|

||||||

|

1. Sever runs Aralez, Traefik, Nginx on different ports. Tuned as much as I could .

|

||||||

|

2. 3x Upstreams servers, running Nginx. Replying with dummy json hardcoded in config file for max performance.

|

||||||

|

|

||||||

|

All servers are connected to the same switch with 1GB port in datacenter , not a home lab. The results:

|

||||||

|

|

||||||

|

|

||||||

|

The results show requests per second performed by Load balancer. You can see 3 batches with 800 concurrent users.

|

||||||

|

|

||||||

|

1. Requests via http1.1 to plain text endpoint.

|

||||||

|

2. Requests to via http2 to SSL endpoint.

|

||||||

|

3. Mixed workload with plain http1.1 and htt2 SSL.

|

||||||

|

|

||||||

|

|||||||

**BIN** `assets/bench.png` (Normal file): binary file not shown. Size after: 160 KiB.

**BIN** `assets/bench2.png` (Normal file): binary file not shown. Size after: 71 KiB.

```diff
@@ -1,20 +1,24 @@
 # Main configuration file, applied on startup
-threads: 12 # Nubber of daemon threads default setting
-#user: pastor # Username for running aralez after dropping root privileges, requires program to start as root
-#group: pastor # Group for running aralez after dropping root privileges, requires program to start as root
+threads: 12 # Number of daemon threads default setting
+#runuser: pastor # Username for running aralez after dropping root privileges, requires program to start as root
+#rungroup: pastor # Group for running aralez after dropping root privileges, requires program to start as root
 daemon: false # Run in background
 upstream_keepalive_pool_size: 500 # Pool size for upstream keepalive connections
 pid_file: /tmp/aralez.pid # Path to PID file
 error_log: /tmp/aralez_err.log # Path to error log
 upgrade_sock: /tmp/aralez.sock # Path to socket file
+config_api_enabled: true # Boolean to enable/disable remote config push capability.
 config_address: 0.0.0.0:3000 # HTTP API address for pushing upstreams.yaml from remote location
 config_tls_address: 0.0.0.0:3001 # HTTP TLS API address for pushing upstreams.yaml from remote location
-config_tls_certificate: etc/server.crt # Mandatory if config_tls_address is set
-config_tls_key_file: etc/key.pem # Mandatory if config_tls_address is set
+config_tls_certificate: /etc/server.crt # Mandatory if config_tls_address is set
+config_tls_key_file: /etc/key.pem # Mandatory if config_tls_address is set
 proxy_address_http: 0.0.0.0:6193 # Proxy HTTP bind address
 proxy_address_tls: 0.0.0.0:6194 # Optional, Proxy TLS bind address
-proxy_certificates: etc/yoyo # Mandatory if proxy_address_tls set, should contain certificate and key files strictly in a format {NAME}.crt, {NAME}.key.
-upstreams_conf: etc/upstreams.yaml # the location of upstreams file
+proxy_certificates: /etc/certs # Mandatory if proxy_address_tls set, should contain a certificate and key files strictly in a format {NAME}.crt, {NAME}.key.
+proxy_tls_grade: a+ # Grade of TLS suite for proxy (a+, a, b, c, unsafe), matching grades of Qualys SSL Labs
+upstreams_conf: /etc/upstreams.yaml # the location of upstreams file
+file_server_folder: /opt/storage # Optional, local folder to serve
+file_server_address: 127.0.0.1:3002 # Optional, Local address for file server. Can set as upstream for public access.
 log_level: info # info, warn, error, debug, trace, off
 hc_method: HEAD # Healthcheck method (HEAD, GET, POST are supported) UPPERCASE
 hc_interval: 2 #Interval for health checks in seconds
```
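The `proxy_certificates` comment requires the directory to hold certificate and key files named strictly `{NAME}.crt` and `{NAME}.key`. Below is a small sketch of checking that convention before starting the proxy. `pair_names` and `pair_dir` are hypothetical helpers. They only compare file names and do not check whether a key actually belongs to its certificate.

```rust
use std::collections::BTreeMap;
use std::fs;
use std::io;
use std::path::Path;

/// Pair files named `{NAME}.crt` with `{NAME}.key`.
/// Returns (names with both files, names missing one of the two), sorted.
fn pair_names<'a>(names: impl IntoIterator<Item = &'a str>) -> (Vec<String>, Vec<String>) {
    // NAME -> (has .crt, has .key)
    let mut seen: BTreeMap<String, (bool, bool)> = BTreeMap::new();
    for file in names {
        if let Some(stem) = file.strip_suffix(".crt") {
            seen.entry(stem.to_string()).or_default().0 = true;
        } else if let Some(stem) = file.strip_suffix(".key") {
            seen.entry(stem.to_string()).or_default().1 = true;
        }
        // any other file (notes, .pem bundles, ...) is ignored
    }
    let mut pairs = Vec::new();
    let mut incomplete = Vec::new();
    for (name, (crt, key)) in seen {
        if crt && key {
            pairs.push(name);
        } else {
            incomplete.push(name);
        }
    }
    (pairs, incomplete)
}

/// The same check applied to the entries of a directory such as /etc/certs.
fn pair_dir(dir: &Path) -> io::Result<(Vec<String>, Vec<String>)> {
    let mut names = Vec::new();
    for entry in fs::read_dir(dir)? {
        // skip file names that are not valid UTF-8
        if let Ok(name) = entry?.file_name().into_string() {
            names.push(name);
        }
    }
    Ok(pair_names(names.iter().map(String::as_str)))
}
```

Reporting incomplete names, instead of silently skipping them, makes a missing `.key` file visible at startup rather than at the first TLS handshake for that name.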

```diff
@@ -1,45 +1,87 @@
 # The file under watch and hot reload, changes are applied immediately, no need to restart or reload.
-provider: "file" # consul
+provider: "file" # "file" "consul" "kubernetes"
 sticky_sessions: false
-to_ssl: false
-headers:
+to_https: false
+rate_limit: 100
+server_headers:
+  - "X-Forwarded-Proto:https"
+  - "X-Forwarded-Port:443"
+client_headers:
   - "Access-Control-Allow-Origin:*"
   - "Access-Control-Allow-Methods:POST, GET, OPTIONS"
   - "Access-Control-Max-Age:86400"
-  - "X-Custom-Header:Something Special"
-authorization:
-  type: "jwt"
-  creds: "910517d9-f9a1-48de-8826-dbadacbd84af-cb6f830e-ab16-47ec-9d8f-0090de732774"
+#authorization:
+  # type: "jwt"
+  # creds: "910517d9-f9a1-48de-8826-dbadacbd84af-cb6f830e-ab16-47ec-9d8f-0090de732774"
   # type: "basic"
-  # creds: "user:Passw0rd"
+  # creds: "username:Pa$$w0rd"
   # type: "apikey"
   # creds: "5ecbf799-1343-4e94-a9b5-e278af5cd313-56b45249-1839-4008-a450-a60dc76d2bae"
-consul: # If the provider is consul. Otherwise, ignored.
+consul:
   servers:
-    - "http://master1:8500"
-    - "http://192.168.22.1:8500"
-    - "http://master1.foo.local:8500"
-  services: # proxy: The hostname to access the proxy server, real : The real service name in Consul database.
-    - proxy: "proxy-frontend-dev-frontend-srv"
-      real: "frontend-dev-frontend-srv"
+    - "http://192.168.1.199:8500"
+    - "http://192.168.1.200:8500"
+    - "http://192.168.1.201:8500"
+  services: # hostname: The hostname to access the proxy server, upstream : The real service name in Consul database.
+    - hostname: "webapi-service"
+      upstream: "webapi-service-health"
+      path: "/one"
+      client_headers:
+        - "X-Some-Thing:Yaaaaaaaaaaaaaaa"
+        - "X-Proxy-From:Aralez"
+      rate_limit: 1
+      to_https: false
+    - hostname: "webapi-service"
+      upstream: "webapi-service-health"
+      path: "/"
   token: "8e2db809-845b-45e1-8b47-2c8356a09da0-a4370955-18c2-4d6e-a8f8-ffcc0b47be81" # Consul server access token, If Consul auth is enabled
+kubernetes:
+  servers:
+    - "192.168.1.55:443" #For testing only, overrides with KUBERNETES_SERVICE_HOST : KUBERNETES_SERVICE_PORT_HTTPS env variables.
+  services:
+    - hostname: "webapi-service"
+      path: "/"
+      upstream: "webapi-service"
+    - hostname: "webapi-service"
+      upstream: "console-service"
+      path: "/one"
+      client_headers:
+        - "X-Some-Thing:Yaaaaaaaaaaaaaaa"
+        - "X-Proxy-From:Aralez"
+      rate_limit: 100
+      to_https: false
+    - hostname: "webapi-service"
+      upstream: "rambul-service"
+      path: "/two"
+    - hostname: "websocket-service"
+      upstream: "websocket-service"
+      path: "/"
+  tokenpath: "/path/to/kubetoken.txt" #If not set, will default to /var/run/secrets/kubernetes.io/serviceaccount/token
 upstreams:
   myip.mydomain.com:
     paths:
       "/":
+        rate_limit: 200
         to_https: false
-        headers:
-          - "X-Proxy-From:Gazan"
-        servers: # List of upstreams HOST:PORT
+        client_headers:
+          - "X-Proxy-From:Aralez"
+        servers:
           - "127.0.0.1:8000"
           - "127.0.0.2:8000"
           - "127.0.0.3:8000"
           - "127.0.0.4:8000"
+          - "127.0.0.5:8000"
       "/ping":
-        to_https: true
-        headers:
+        authorization: # Will be ignored if global authentication is enabled.
+          type: "basic"
+          creds: "admin:admin"
+        to_https: false
+        server_headers:
+          - "X-Forwarded-Proto:https"
+          - "X-Forwarded-Port:443"
+        client_headers:
           - "X-Some-Thing:Yaaaaaaaaaaaaaaa"
-          - "X-Proxy-From:Gazan"
+          - "X-Proxy-From:Aralez"
         servers:
           - "127.0.0.1:8000"
           - "127.0.0.2:8000"
```
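Each virtual host maps path keys such as `"/"` and `"/ping"` to servers, and the README states that deep nested paths are supported with the best match chosen. Below is a sketch of one way to pick that match. It makes two assumptions:

- Matching is longest-prefix on `/` segment boundaries, so `/ping` covers `/ping/x` but not `/pingpong`.
- The query string has already been removed from the request path.

`best_match` and `route_matches` are hypothetical names, and Aralez's own matcher may behave differently at the edges.

```rust
/// Does a configured path key cover the request path?
/// "/" or "/foo/" (trailing slash): plain prefix match.
/// "/foo": exact match, or the next character in the path must be '/'.
fn route_matches(route: &str, path: &str) -> bool {
    if route.ends_with('/') {
        path.starts_with(route)
    } else {
        path == route || (path.starts_with(route) && path[route.len()..].starts_with('/'))
    }
}

/// Pick the most specific (longest) matching route, if any.
fn best_match<'a>(routes: &[&'a str], path: &str) -> Option<&'a str> {
    routes
        .iter()
        .copied()
        .filter(|route| route_matches(route, path))
        .max_by_key(|route| route.len())
}
```

Because `"/"` matches every path, it acts as the catch-all for a host. Any longer key that also matches, such as `"/ping"` or `"/.well-known/acme-challenge"`, wins over it.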

```diff
@@ -49,7 +91,8 @@ upstreams:
   polo.mydomain.com:
     paths:
       "/":
-        headers:
+        to_https: false
+        client_headers:
           - "X-Some-Thing:Yaaaaaaaaaaaaaaa"
         servers:
           - "192.168.1.1:8000"
```

```diff
@@ -58,3 +101,19 @@ upstreams:
           - "127.0.0.2:8000"
           - "127.0.0.3:8000"
           - "127.0.0.4:8000"
+  apt.mydomain.com:
+    paths:
+      "/":
+        servers:
+          - "192.168.1.10:443"
+      "/.well-known/acme-challenge":
+        healthcheck: false
+        servers:
+          - "127.0.0.1:8001"
+  rdr.mydomain.com:
+    paths:
+      "/":
+        redirect_to: "https://som.other.domain:6194"
+        healthcheck: false
+        servers:
+          - "127.0.0.1:8080"
```
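This hunk adds `rdr.mydomain.com` with a `redirect_to` target. Separately, the README describes `to_https: true` as a 301 redirect of plain HTTP to Aralez's configured TLS port (`proxy_address_tls`, 0.0.0.0:6194 in the examples). Below is a sketch of building the `Location` value for both cases. It assumes `redirect_to` is used verbatim, without appending the request path. It also assumes `to_https` keeps the host and path while swapping in the TLS port. `host_without_port` and `redirect_location` are hypothetical names.

```rust
/// Drop an optional ":port" suffix from a Host header value.
/// Bracketed IPv6 literals such as "[::1]:6193" keep their brackets.
fn host_without_port(host: &str) -> &str {
    match host.rsplit_once(':') {
        // only strip when everything after the last ':' is a decimal port
        Some((name, port)) if !port.is_empty() && port.bytes().all(|b| b.is_ascii_digit()) => name,
        _ => host,
    }
}

/// Hypothetical redirect decision for a plain-HTTP request.
/// Returns the Location header value, or None when the request is proxied.
fn redirect_location(
    host: &str,
    path_and_query: &str,
    to_https: bool,
    redirect_to: Option<&str>,
    tls_port: u16,
) -> Option<String> {
    // Assumption: an explicit redirect_to target is used as-is.
    if let Some(target) = redirect_to {
        return Some(target.to_string());
    }
    // to_https: same host and path, on Aralez's TLS port (README: 301).
    if to_https {
        let host = host_without_port(host);
        return Some(format!("https://{host}:{tls_port}{path_and_query}"));
    }
    None
}
```

For example, a plain-HTTP request for `/foo?x=1` with `Host: myhost.mydomain.com:6193` and `to_https: true` would get `Location: https://myhost.mydomain.com:6194/foo?x=1`.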

```diff
@@ -1,8 +1,10 @@
+mod tls;
 mod utils;
 mod web;
 
 #[global_allocator]
 static GLOBAL: mimalloc::MiMalloc = mimalloc::MiMalloc;
+// pub static A: CountingAllocator = CountingAllocator;
 
 fn main() {
```