Mirror of https://github.com/sadoyan/aralez.git, synced 2026-04-30 14:58:38 +08:00

Compare commits: 6 commits

| SHA1 |
|---|
| d3602fa578 |
| e304482667 |
| f8118f9596 |
| f654312466 |
| b44f7069a0 |
| a44979ec82 |

**.gitignore** (vendored), 2 changed lines

```diff
@@ -5,6 +5,8 @@
 *.dll
 *.exe
 *.sh
+/docs/
+/docs
 /target/
 *.iml
 .idea/
```

```diff
@@ -48,6 +48,7 @@ lazy_static = "1.5.0"
 #openssl = "0.10.73"
 x509-parser = "0.17.0"
 rustls-pemfile = "2.2.0"
+#hickory-client = { version = "0.25.2" }
 tower-http = { version = "0.6.6", features = ["fs"] }
 once_cell = "1.20.2"
 #moka = { version = "0.12.10", features = ["sync"] }
```

120

METRICS.md

120

METRICS.md

@@ -1,120 +0,0 @@

|

|||||||

# 📈 Aralez Prometheus Metrics Reference

|

|

||||||

|

|

||||||

This document outlines Prometheus metrics for the [Aralez](https://github.com/sadoyan/aralez) reverse proxy.

|

|

||||||

These metrics can be used for monitoring, alerting and performance analysis.

|

|

||||||

|

|

||||||

Exposed to `http://config_address/metrics`

|

|

||||||

|

|

||||||

By default `http://127.0.0.1:3000/metrics`

|

|

||||||

|

|

||||||

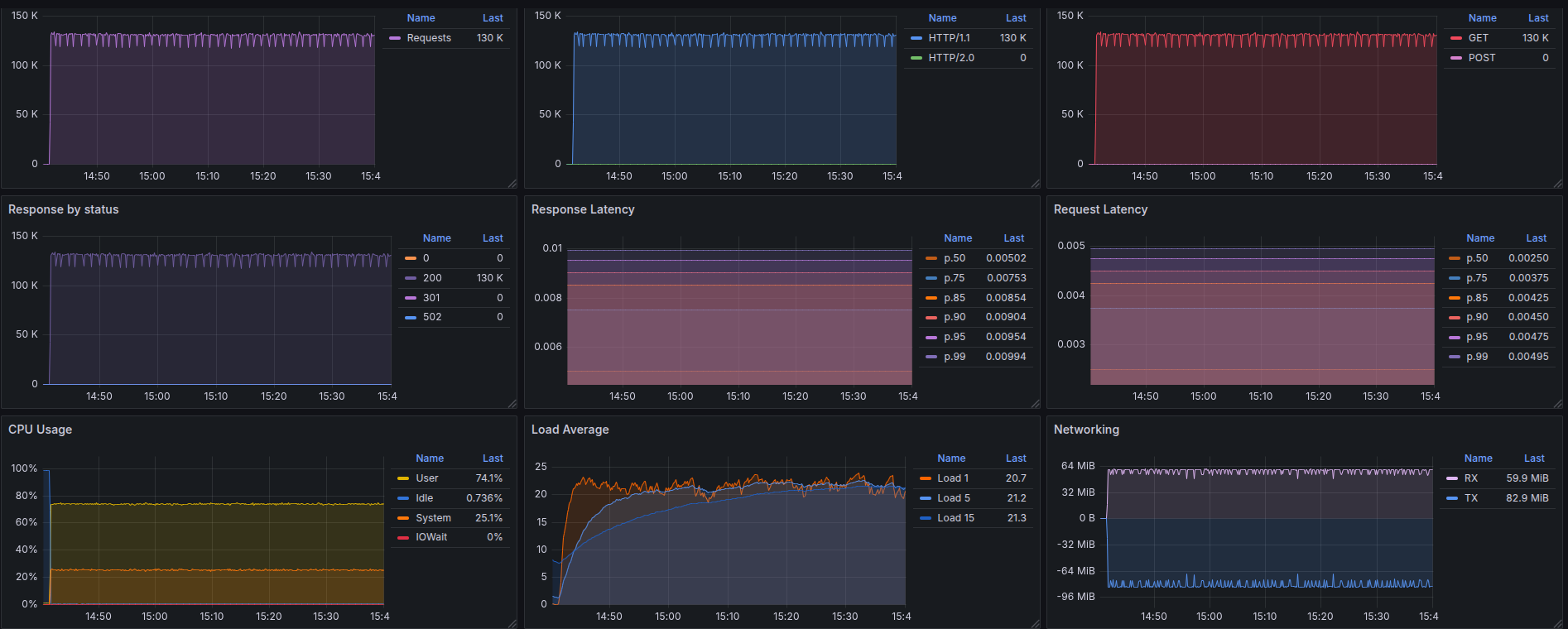

# 📊 Example Grafana dashboard during stress test :

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

## 🛠️ Prometheus Metrics

|

|

||||||

|

|

||||||

### 1. `aralez_requests_total`

|

|

||||||

|

|

||||||

- **Type**: `Counter`

|

|

||||||

- **Purpose**: Total amount requests served by Aralez.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_requests_total[5m])

|

|

||||||

```

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

### 2. `aralez_errors_total`

|

|

||||||

|

|

||||||

- **Type**: `Counter`

|

|

||||||

- **Purpose**: Count of requests that resulted in an error.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_errors_total[5m])

|

|

||||||

```

|

|

||||||

|

|

||||||

---

|

|

||||||

|

|

||||||

### 3. `aralez_responses_total{status="200"}`

|

|

||||||

|

|

||||||

- **Type**: `CounterVec`

|

|

||||||

- **Purpose**: Count of responses by HTTP status code.

|

|

||||||

|

|

||||||

**PromQL example:**

|

|

||||||

|

|

||||||

```promql

|

|

||||||

rate(aralez_responses_total{status=~"5.."}[5m]) > 0

|

|

||||||

```

|

|

||||||

|

|

||||||

> Useful for alerting on 5xx errors.

|

|

||||||

|

|

||||||

---

|

|

||||||
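The counter queries in the removed METRICS.md (`rate(aralez_requests_total[5m])`, `rate(aralez_errors_total[5m])`) divide a counter's increase by the length of the window. The Rust sketch below shows the idea on raw `(timestamp_seconds, value)` samples and treats any drop in value as a counter reset, for example after a proxy restart. `simple_rate` is a hypothetical helper. It is also a simplification: Prometheus's own `rate()` additionally extrapolates to the edges of the window, which this sketch leaves out, so its results will differ slightly from what Prometheus reports.

```rust
/// Hypothetical helper: per-second rate of a counter over `samples`,
/// given as (timestamp in seconds, counter value) sorted by timestamp.
/// A decrease between two samples is treated as a counter reset.
/// Returns None with fewer than two samples or no elapsed time.
pub fn simple_rate(samples: &[(f64, f64)]) -> Option<f64> {
    if samples.len() < 2 {
        return None;
    }
    let mut increase = 0.0;
    for pair in samples.windows(2) {
        let (prev, next) = (pair[0].1, pair[1].1);
        if next >= prev {
            increase += next - prev;
        } else {
            // Reset: the counter restarted from zero, so the new value
            // is the whole increase since the reset.
            increase += next;
        }
    }
    let elapsed = samples[samples.len() - 1].0 - samples[0].0;
    if elapsed <= 0.0 {
        return None;
    }
    Some(increase / elapsed)
}
```

In the test samples, the drop from 160 to 10 counts as a reset. The total increase is therefore 60 + 10 + 60 = 130 over 180 seconds.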

**METRICS.md** (continued)

```diff
-
-### 4. `aralez_response_latency_seconds`
-
-- **Type**: `Histogram`
-- **Purpose**: Tracks the latency of responses in seconds.
-
-**Example bucket output:**
-
-```prometheus
-aralez_response_latency_seconds_bucket{le="0.01"} 15
-aralez_response_latency_seconds_bucket{le="0.1"} 120
-aralez_response_latency_seconds_bucket{le="0.25"} 245
-aralez_response_latency_seconds_bucket{le="0.5"} 500
-...
-aralez_response_latency_seconds_count 1023
-aralez_response_latency_seconds_sum 42.6
-```
-
-| Metric | Meaning |
-|-------------------------|---------------------------------------------------------------|
-| `bucket{le="0.1"} 120` | 120 requests were ≤ 100ms |
-| `bucket{le="0.25"} 245` | 245 requests were ≤ 250ms |
-| `count` | Total number of observations (i.e., total responses measured) |
-| `sum` | Total time of all responses, in seconds |
-
-### 🔍 How to interpret:
-
-- `le` means “less than or equal to”.
-- `count` is total amount of observations.
-- `sum` is the total time (in seconds) of all responses.
-
-**PromQL examples:**
-
-🔹 **95th percentile latency**
-
-```promql
-histogram_quantile(0.95, rate(aralez_response_latency_seconds_bucket[5m]))
-
-```
-
-🔹 **Average latency**
-
-```promql
-rate(aralez_response_latency_seconds_sum[5m]) / rate(aralez_response_latency_seconds_count[5m])
-```
-
----
-
-## ✅ Notes
-
-- Metrics are registered after the first served request.
-
----
-✅ Summary of key metrics
-
-| Metric Name | Type | What it Tells You |
-|---------------------------------------|------------|---------------------------|
-| `aralez_requests_total` | Counter | Total requests served |
-| `aralez_errors_total` | Counter | Number of failed requests |
-| `aralez_responses_total{status="200"}` | CounterVec | Response status breakdown |
-| `aralez_response_latency_seconds` | Histogram | How fast responses are |
-
-📘 *Last updated: May 2025*
```
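The removed METRICS.md describes `aralez_response_latency_seconds` as a cumulative histogram. It queries that histogram with `histogram_quantile` and with a `sum / count` ratio.

The Rust sketch below mirrors the linear interpolation that Prometheus documents for `histogram_quantile`:

- find the first bucket whose cumulative count reaches `q * total`;
- interpolate inside that bucket, assuming the lowest bucket starts at 0;
- if the rank falls in the `+Inf` bucket, return the highest finite bound.

It also computes the plain average. The sketch works on one snapshot of buckets rather than on `rate()` output, and both function names are hypothetical. With the example `sum` and `count` from that file (42.6 s over 1023 responses), the average works out to roughly 41.6 ms.

```rust
/// Hypothetical helper. `buckets` are (upper bound `le`, cumulative count),
/// sorted by `le`, with a final `f64::INFINITY` bucket as Prometheus exposes.
/// Returns None for q outside [0, 1], malformed input, or an empty histogram.
pub fn histogram_quantile(q: f64, buckets: &[(f64, f64)]) -> Option<f64> {
    if !(0.0..=1.0).contains(&q) || buckets.len() < 2 {
        return None;
    }
    let last = buckets[buckets.len() - 1];
    if !last.0.is_infinite() || last.1 == 0.0 {
        return None;
    }
    let rank = q * last.1;
    // First bucket whose cumulative count reaches the rank.
    let idx = buckets.iter().position(|&(_, c)| c >= rank)?;
    if idx == buckets.len() - 1 {
        // Rank falls in the +Inf bucket: report the highest finite bound.
        return Some(buckets[idx - 1].0);
    }
    let (upper, count) = buckets[idx];
    let (lower, prev_count) = if idx == 0 {
        if upper <= 0.0 {
            return Some(upper);
        }
        (0.0, 0.0) // lowest bucket is assumed to start at 0
    } else {
        buckets[idx - 1]
    };
    if count == prev_count {
        return Some(upper); // avoid dividing by zero for an empty bucket
    }
    Some(lower + (upper - lower) * (rank - prev_count) / (count - prev_count))
}

/// Hypothetical helper: mean observation, i.e. `sum / count`.
pub fn average(sum: f64, count: f64) -> Option<f64> {
    if count > 0.0 { Some(sum / count) } else { None }
}
```

Because the buckets are cumulative, a single bucket's own count is the difference from the previous `le`. In the file's example, `245 - 120 = 125` responses fell between 100 ms and 250 ms.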

**README.md**, 51 changed lines. Every table row in this hunk was touched. Apart from the widened separator row and the new `proxy_tls_grade` row, the differences are column padding only.

```diff
@@ -66,31 +66,32 @@ Built on Rust, on top of **Cloudflare’s Pingora engine**, **Aralez** delivers
 
 ### 🔧 `main.yaml`
 
 | Key | Example Value | Description |
-|----------------------------------|--------------------------------------|--------------------------------------------------------------------------------------------------|
+|----------------------------------|--------------------------------------|----------------------------------------------------------------------------------------------------|
 | **threads** | 12 | Number of running daemon threads. Optional, defaults to 1 |
 | **user** | aralez | Optional, Username for running aralez after dropping root privileges, requires to launch as root |
 | **group** | aralez | Optional,Group for running aralez after dropping root privileges, requires to launch as root |
 | **daemon** | false | Run in background (boolean) |
 | **upstream_keepalive_pool_size** | 500 | Pool size for upstream keepalive connections |
 | **pid_file** | /tmp/aralez.pid | Path to PID file |
 | **error_log** | /tmp/aralez_err.log | Path to error log file |
 | **upgrade_sock** | /tmp/aralez.sock | Path to live upgrade socket file |
 | **config_address** | 0.0.0.0:3000 | HTTP API address for pushing upstreams.yaml from remote location |
 | **config_tls_address** | 0.0.0.0:3001 | HTTPS API address for pushing upstreams.yaml from remote location |
 | **config_tls_certificate** | etc/server.crt | Certificate file path for API. Mandatory if proxy_address_tls is set, else optional |
+| **proxy_tls_grade** | (high, medium, unsafe) | Grade of TLS ciphers, for easy configuration. High matches Qualys SSL Labs A+ (defaults to medium) |
 | **config_tls_key_file** | etc/key.pem | Private Key file path. Mandatory if proxy_address_tls is set, else optional |
 | **proxy_address_http** | 0.0.0.0:6193 | Aralez HTTP bind address |
 | **proxy_address_tls** | 0.0.0.0:6194 | Aralez HTTPS bind address (Optional) |
 | **proxy_certificates** | etc/certs/ | The directory containing certificate and key files. In a format {NAME}.crt, {NAME}.key. |
 | **upstreams_conf** | etc/upstreams.yaml | The location of upstreams file |
 | **log_level** | info | Log level , possible values : info, warn, error, debug, trace, off |
 | **hc_method** | HEAD | Healthcheck method (HEAD, GET, POST are supported) UPPERCASE |
 | **hc_interval** | 2 | Interval for health checks in seconds |
 | **master_key** | 5aeff7f9-7b94-447c-af60-e8c488544a3e | Master key for working with API server and JWT Secret generation |
 | **file_server_folder** | /some/local/folder | Optional, local folder to serve |
 | **file_server_address** | 127.0.0.1:3002 | Optional, Local address for file server. Can set as upstream for public access |
 | **config_api_enabled** | true | Boolean to enable/disable remote config push capability |
 
 ### 🌐 `upstreams.yaml`
 
```
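According to the README table, `proxy_certificates` names a directory whose certificate and key files follow the `{NAME}.crt` / `{NAME}.key` convention. The Rust sketch below shows one way to pair such files by name and report leftovers before a TLS listener starts. It takes plain file names, so it never touches the filesystem. `pair_certificates` and `CertPairs` are hypothetical names, not Aralez's own code.

```rust
use std::collections::BTreeMap;
use std::path::Path;

/// Hypothetical result of pairing `{NAME}.crt` with `{NAME}.key`.
#[derive(Debug, Default, PartialEq)]
pub struct CertPairs {
    /// NAME -> (certificate file, key file)
    pub pairs: BTreeMap<String, (String, String)>,
    /// `.crt` or `.key` files without a partner, sorted by name.
    pub unpaired: Vec<String>,
}

/// Hypothetical helper: pairs certificate and key files by their stem.
pub fn pair_certificates(file_names: &[&str]) -> CertPairs {
    let mut crts: BTreeMap<String, String> = BTreeMap::new();
    let mut keys: BTreeMap<String, String> = BTreeMap::new();
    for name in file_names {
        let path = Path::new(name);
        let (Some(stem), Some(ext)) = (path.file_stem(), path.extension()) else {
            continue; // not of the form NAME.ext
        };
        let stem = stem.to_string_lossy().into_owned();
        match ext.to_str() {
            Some("crt") => {
                crts.insert(stem, name.to_string());
            }
            Some("key") => {
                keys.insert(stem, name.to_string());
            }
            _ => {} // other files in the directory are ignored
        }
    }
    let mut result = CertPairs::default();
    for (stem, crt) in crts {
        match keys.remove(&stem) {
            Some(key) => {
                result.pairs.insert(stem, (crt, key));
            }
            None => result.unpaired.push(crt),
        }
    }
    result.unpaired.extend(keys.into_values());
    result.unpaired.sort();
    result
}
```

`Path::file_stem` strips only the last extension. A name like `example.com.crt` therefore pairs under the name `example.com`.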

```diff
@@ -10,15 +10,16 @@ upgrade_sock: /tmp/aralez.sock # Path to socket file
 config_api_enabled: true # Boolean to enable/disable remote config push capability.
 config_address: 0.0.0.0:3000 # HTTP API address for pushing upstreams.yaml from remote location
 config_tls_address: 0.0.0.0:3001 # HTTP TLS API address for pushing upstreams.yaml from remote location
-config_tls_certificate: /opt/Rust/Projects/asyncweb/etc/server.crt # Mandatory if config_tls_address is set
-config_tls_key_file: /opt/Rust/Projects/asyncweb/etc/key.pem # Mandatory if config_tls_address is set
+config_tls_certificate: /etc/server.crt # Mandatory if config_tls_address is set
+config_tls_key_file: /etc/key.pem # Mandatory if config_tls_address is set
 proxy_address_http: 0.0.0.0:6193 # Proxy HTTP bind address
 proxy_address_tls: 0.0.0.0:6194 # Optional, Proxy TLS bind address
-proxy_certificates: /opt/Rust/Projects/asyncweb/etc/yoyo # Mandatory if proxy_address_tls set, should contain certificate and key files strictly in a format {NAME}.crt, {NAME}.key.
-upstreams_conf: /opt/Rust/Projects/asyncweb/etc/upstreams.yaml # the location of upstreams file
+proxy_certificates: /etc/certs # Mandatory if proxy_address_tls set, should contain a certificate and key files strictly in a format {NAME}.crt, {NAME}.key.
+proxy_tls_grade: a+ # Grade of TLS suite for proxy (a+, a, b, c, unsafe), matching grades of Qualys SSL Labs
+upstreams_conf: /etc/upstreams.yaml # the location of upstreams file
 file_server_folder: /opt/storage # Optional, local folder to serve
 file_server_address: 127.0.0.1:3002 # Optional, Local address for file server. Can set as upstream for public access.
 log_level: info # info, warn, error, debug, trace, off
 hc_method: HEAD # Healthcheck method (HEAD, GET, POST are supported) UPPERCASE
 hc_interval: 2 #Interval for health checks in seconds
 master_key: 910517d9-f9a1-48de-8826-dbadacbd84af-cb6f830e-ab16-47ec-9d8f-0090de732774 # Mater key for working with API server and JWT Secret
```
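The `main.yaml` comments above encode a few dependency rules:

- `config_tls_certificate` and `config_tls_key_file` are mandatory when `config_tls_address` is set;
- `proxy_certificates` is mandatory when `proxy_address_tls` is set.

The README table words the certificate condition differently, referring to `proxy_address_tls`; this sketch follows the YAML comments. A minimal Rust sketch could check those rules and parse the bind addresses with `std::net::SocketAddr`. `MainConfig` is a hypothetical, trimmed-down struct, since Aralez's real configuration types are not part of this diff, and YAML parsing itself is left out.

```rust
use std::net::SocketAddr;

/// Hypothetical subset of main.yaml keys; `None` means the key is absent.
#[derive(Debug, Default)]
pub struct MainConfig {
    pub config_address: Option<String>,
    pub config_tls_address: Option<String>,
    pub config_tls_certificate: Option<String>,
    pub config_tls_key_file: Option<String>,
    pub proxy_address_http: String,
    pub proxy_address_tls: Option<String>,
    pub proxy_certificates: Option<String>,
}

fn parse_addr(key: &str, value: &str) -> Result<SocketAddr, String> {
    value
        .parse::<SocketAddr>()
        .map_err(|e| format!("{key}: invalid address {value:?}: {e}"))
}

/// Hypothetical validator: collects every violation instead of stopping at the first.
pub fn validate(cfg: &MainConfig) -> Result<(), Vec<String>> {
    let mut errors = Vec::new();
    if let Err(e) = parse_addr("proxy_address_http", &cfg.proxy_address_http) {
        errors.push(e);
    }
    let optional_addrs = [
        ("config_address", &cfg.config_address),
        ("config_tls_address", &cfg.config_tls_address),
        ("proxy_address_tls", &cfg.proxy_address_tls),
    ];
    for (key, value) in optional_addrs {
        if let Some(v) = value {
            if let Err(e) = parse_addr(key, v) {
                errors.push(e);
            }
        }
    }
    if cfg.config_tls_address.is_some() {
        if cfg.config_tls_certificate.is_none() {
            errors.push("config_tls_certificate is required when config_tls_address is set".into());
        }
        if cfg.config_tls_key_file.is_none() {
            errors.push("config_tls_key_file is required when config_tls_address is set".into());
        }
    }
    if cfg.proxy_address_tls.is_some() && cfg.proxy_certificates.is_none() {
        errors.push("proxy_certificates is required when proxy_address_tls is set".into());
    }
    if errors.is_empty() { Ok(()) } else { Err(errors) }
}
```

Returning all violations at once lets an operator fix a config file in one pass rather than one error per restart.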

```diff
@@ -1,5 +1,5 @@
 # The file under watch and hot reload, changes are applied immediately, no need to restart or reload.
-provider: "file" # consul
+provider: "file" # consul, kubernetes
 sticky_sessions: false
 to_ssl: false
 #rate_limit: 100
@@ -24,6 +24,17 @@ consul: # If the provider is consul. Otherwise, ignored.
     - proxy: "proxy-frontend-dev-frontend-srv"
       real: "frontend-dev-frontend-srv"
   token: "8e2db809-845b-45e1-8b47-2c8356a09da0-a4370955-18c2-4d6e-a8f8-ffcc0b47be81" # Consul server access token, If Consul auth is enabled
+kubernetes:
+  servers:
+    - "172.16.0.11:5443" # KUBERNETES_SERVICE_HOST : KUBERNETES_SERVICE_PORT_HTTPS
+  services:
+    - proxy: "vt-api-service-v2"
+      real: "vt-api-service-v2"
+    - proxy: "vt-search-service"
+      real: "vt-search-service"
+    - proxy: "vt-websocket-service"
+      real: "vt-websocket-service"
+  tokenpath: "/tmp/token.txt" # /var/run/secrets/kubernetes.io/serviceaccount/token
 upstreams:
   myip.mydomain.com:
     paths:
```
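The new `kubernetes` block pairs an API server address with a comment naming the in-cluster variables `KUBERNETES_SERVICE_HOST` and `KUBERNETES_SERVICE_PORT_HTTPS`. It also pairs `tokenpath` with a comment naming the standard service-account token path.

The Rust sketch below shows one plausible way to resolve both values: use the configured server if there is one, otherwise build `host:port` from those environment variables, and fall back to the in-cluster token path. This is an assumption for illustration. It is not how Aralez's `kuber` module necessarily behaves. `api_server` and `token_path` are hypothetical helpers, and the environment is passed in as a map so the logic can be tested.

```rust
use std::collections::HashMap;

/// Standard in-cluster service-account token path (the value given in the
/// `tokenpath` comment above).
pub const IN_CLUSTER_TOKEN_PATH: &str = "/var/run/secrets/kubernetes.io/serviceaccount/token";

/// Hypothetical helper: first configured server, else `host:port` from the
/// in-cluster environment variables. IPv6 hosts are wrapped in brackets.
pub fn api_server(configured: &[String], env: &HashMap<String, String>) -> Option<String> {
    if let Some(first) = configured.first() {
        return Some(first.clone());
    }
    let host = env.get("KUBERNETES_SERVICE_HOST")?;
    let port = env.get("KUBERNETES_SERVICE_PORT_HTTPS")?;
    if host.contains(':') {
        Some(format!("[{host}]:{port}"))
    } else {
        Some(format!("{host}:{port}"))
    }
}

/// Hypothetical helper: configured token path, or the in-cluster default.
pub fn token_path(configured: Option<&str>) -> &str {
    configured.unwrap_or(IN_CLUSTER_TOKEN_PATH)
}
```

In a real process the map could be built with `std::env::vars().collect()`.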

```diff
@@ -1,9 +1,11 @@
 pub mod auth;
 pub mod consul;
 pub mod discovery;
+pub mod dnsclient;
 mod filewatch;
 pub mod healthcheck;
 pub mod jwt;
+pub mod kuber;
 pub mod metrics;
 pub mod parceyaml;
 pub mod structs;
```

```diff
@@ -1,6 +1,5 @@
-use crate::utils::parceyaml::load_configuration;
 use crate::utils::structs::{Configuration, InnerMap, ServiceMapping, UpstreamsDashMap};
-use crate::utils::tools::{clone_dashmap_into, compare_dashmaps};
+use crate::utils::tools::{clone_dashmap_into, compare_dashmaps, print_upstreams};
 use dashmap::DashMap;
 use futures::channel::mpsc::Sender;
 use futures::SinkExt;
@@ -11,6 +10,7 @@ use reqwest::header::{HeaderMap, HeaderValue};
 use serde::Deserialize;
 use std::collections::HashMap;
 use std::sync::atomic::AtomicUsize;
+use std::sync::Arc;
 use std::time::Duration;
 
 #[derive(Debug, Deserialize)]
@@ -27,59 +27,53 @@ struct TaggedAddress {
     port: u16,
 }
 
-pub async fn start(fp: String, mut toreturn: Sender<Configuration>) {
-    let config = load_configuration(fp.as_str(), "filepath");
+pub async fn start(mut toreturn: Sender<Configuration>, config: Arc<Configuration>) {
     let headers = DashMap::new();
-    match config {
-        Some(config) => {
-            if config.typecfg.to_string() != "consul" {
-                info!("Not running Consul discovery, requested type is: {}", config.typecfg);
-                return;
-            }
+    info!("Consul Discovery is enabled : {}", config.typecfg);
+    let consul = config.consul.clone();
+    let prev_upstreams = UpstreamsDashMap::new();
+    match consul {
+        Some(consul) => {
+            let servers = consul.servers.unwrap();
+            info!("Consul Servers => {:?}", servers);
+            let end = servers.len() - 1;
 
-            info!("Consul Discovery is enabled : {}", config.typecfg);
-            let consul = config.consul.clone();
-            let prev_upstreams = UpstreamsDashMap::new();
-            match consul {
-                Some(consul) => {
-                    let servers = consul.servers.unwrap();
-                    info!("Consul Servers => {:?}", servers);
-                    let end = servers.len();
-
-                    loop {
-                        let num = rand::rng().random_range(1..end);
-                        headers.clear();
-                        for (k, v) in config.headers.clone() {
-                            headers.insert(k.to_string(), v);
-                        }
-                        let consul_data = servers.get(num).unwrap().to_string();
-                        let upstreams = consul_request(consul_data, consul.services.clone(), consul.token.clone());
-                        match upstreams.await {
-                            Some(upstreams) => {
-                                if !compare_dashmaps(&upstreams, &prev_upstreams) {
-                                    let mut tosend: Configuration = Configuration {
-                                        upstreams: Default::default(),
-                                        headers: Default::default(),
-                                        consul: None,
-                                        typecfg: "".to_string(),
-                                        extraparams: config.extraparams.clone(),
-                                    };
-
-                                    clone_dashmap_into(&upstreams, &prev_upstreams);
-                                    clone_dashmap_into(&upstreams, &tosend.upstreams);
-                                    tosend.headers = headers.clone();
-                                    tosend.extraparams.authentication = config.extraparams.authentication.clone();
-                                    tosend.typecfg = config.typecfg.clone();
-                                    tosend.consul = config.consul.clone();
-                                    toreturn.send(tosend).await.unwrap();
-                                }
-                            }
-                            None => {}
-                        }
-                        sleep(Duration::from_secs(5)).await;
-                    }
+            loop {
+                let mut num = 0;
+                if end > 0 {
+                    num = rand::rng().random_range(0..end);
                 }
-                None => {}
+                headers.clear();
+                for (k, v) in config.headers.clone() {
+                    headers.insert(k.to_string(), v);
+                }
+                let consul_data = servers.get(num).unwrap().to_string();
+                let upstreams = consul_request(consul_data, consul.services.clone(), consul.token.clone());
+                match upstreams.await {
+                    Some(upstreams) => {
+                        if !compare_dashmaps(&upstreams, &prev_upstreams) {
+                            let mut tosend: Configuration = Configuration {
+                                upstreams: Default::default(),
+                                headers: Default::default(),
+                                consul: None,
+                                kubernetes: None,
+                                typecfg: "".to_string(),
+                                extraparams: config.extraparams.clone(),
+                            };
+
+                            clone_dashmap_into(&upstreams, &prev_upstreams);
+                            clone_dashmap_into(&upstreams, &tosend.upstreams);
+                            tosend.headers = headers.clone();
+                            tosend.extraparams.authentication = config.extraparams.authentication.clone();
+                            tosend.typecfg = config.typecfg.clone();
+                            tosend.consul = config.consul.clone();
+                            print_upstreams(&tosend.upstreams);
+                            toreturn.send(tosend).await.unwrap();
+                        }
+                    }
+                    None => {}
+                }
+                sleep(Duration::from_secs(5)).await;
             }
         }
         None => {}
```
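In the Consul `start` function above, the server index comes from `end = servers.len() - 1` and `random_range(0..end)`. Because that range is half-open, the last server is never selected when there are two or more. If the list is empty, `servers.len() - 1` also underflows. The old code had the opposite problem: `random_range(1..end)` with `end = servers.len()` never picked index 0, and panicked on a one-element list because the range was empty. The commented-out `start2` in `src/utils/dnsclient.rs` repeats the new pattern.

With the `rand` 0.9 API already in use there, an emptiness check followed by `rand::rng().random_range(0..servers.len())` covers every index. The std-only Rust sketch below isolates that selection logic. `pick_server` is a hypothetical helper that takes the random number as a parameter, so it can be tested without the `rand` crate.

```rust
/// Hypothetical helper: picks a server from `servers` using any random u64
/// (for example one drawn from an RNG). Returns None for an empty list.
pub fn pick_server(servers: &[String], random: u64) -> Option<&String> {
    if servers.is_empty() {
        return None;
    }
    // The modulo maps the random value onto every valid index 0..len;
    // its tiny bias is irrelevant for spreading requests across servers.
    let idx = (random % servers.len() as u64) as usize;
    servers.get(idx)
}
```

In the async loop this could be called as `pick_server(&servers, rand::rng().random())` with `rand::Rng` in scope. An empty list then surfaces as `None` rather than as a panic.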

```diff
@@ -1,13 +1,11 @@
-use crate::utils::consul;
 use crate::utils::filewatch;
 use crate::utils::structs::Configuration;
+use crate::utils::{consul, kuber};
 use crate::web::webserver;
 use async_trait::async_trait;
 use futures::channel::mpsc::Sender;
+use std::sync::Arc;
 
-pub struct FromFileProvider {
-    pub path: String,
-}
 pub struct APIUpstreamProvider {
     pub config_api_enabled: bool,
     pub address: String,
@@ -19,15 +17,6 @@ pub struct APIUpstreamProvider {
     pub file_server_folder: Option<String>,
 }
 
-pub struct ConsulProvider {
-    pub path: String,
-}
-
-#[async_trait]
-pub trait Discovery {
-    async fn start(&self, tx: Sender<Configuration>);
-}
-
 #[async_trait]
 impl Discovery for APIUpstreamProvider {
     async fn start(&self, toreturn: Sender<Configuration>) {
@@ -35,6 +24,23 @@ impl Discovery for APIUpstreamProvider {
     }
 }
 
+pub struct FromFileProvider {
+    pub path: String,
+}
+
+pub struct ConsulProvider {
+    pub config: Arc<Configuration>,
+}
+
+pub struct KubernetesProvider {
+    pub config: Arc<Configuration>,
+}
+
+#[async_trait]
+pub trait Discovery {
+    async fn start(&self, tx: Sender<Configuration>);
+}
+
 #[async_trait]
 impl Discovery for FromFileProvider {
     async fn start(&self, tx: Sender<Configuration>) {
@@ -45,6 +51,13 @@ impl Discovery for FromFileProvider {
 #[async_trait]
 impl Discovery for ConsulProvider {
     async fn start(&self, tx: Sender<Configuration>) {
-        tokio::spawn(consul::start(self.path.clone(), tx.clone()));
+        tokio::spawn(consul::start(tx.clone(), self.config.clone()));
+    }
+}
+
+#[async_trait]
+impl Discovery for KubernetesProvider {
+    async fn start(&self, tx: Sender<Configuration>) {
+        tokio::spawn(kuber::start(tx.clone(), self.config.clone()));
     }
 }
```
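After this change, `ConsulProvider` and the new `KubernetesProvider` hold the already-parsed configuration as an `Arc<Configuration>` instead of a file path. Each `start` spawns a task that sends `Configuration` updates through a channel.

The std-only Rust sketch below reproduces that shape. It uses threads and `std::sync::mpsc` in place of `tokio::spawn`, `async_trait`, and the `futures` channel. The trimmed `Configuration` struct and the `provider_for` dispatcher, keyed on the `provider` value from upstreams.yaml, are hypothetical. Each provider here sends a single snapshot instead of polling.

```rust
use std::sync::mpsc::Sender;
use std::sync::Arc;
use std::thread::{self, JoinHandle};

/// Hypothetical, trimmed-down stand-in for Aralez's `Configuration`.
#[derive(Debug, Clone, PartialEq)]
pub struct Configuration {
    pub typecfg: String,
}

/// Synchronous analogue of the `Discovery` trait in the diff above.
pub trait Discovery {
    fn start(&self, tx: Sender<Configuration>) -> JoinHandle<()>;
}

pub struct ConsulProvider {
    pub config: Arc<Configuration>,
}

pub struct KubernetesProvider {
    pub config: Arc<Configuration>,
}

/// Clones the Arc (a cheap pointer copy) and moves it into a worker thread.
fn spawn_sender(config: &Arc<Configuration>, tx: Sender<Configuration>) -> JoinHandle<()> {
    let config = Arc::clone(config);
    thread::spawn(move || {
        // A real provider would poll Consul or Kubernetes in a loop and send
        // only on change; this sketch sends one snapshot and exits.
        let _ = tx.send((*config).clone());
    })
}

impl Discovery for ConsulProvider {
    fn start(&self, tx: Sender<Configuration>) -> JoinHandle<()> {
        spawn_sender(&self.config, tx)
    }
}

impl Discovery for KubernetesProvider {
    fn start(&self, tx: Sender<Configuration>) -> JoinHandle<()> {
        spawn_sender(&self.config, tx)
    }
}

/// Hypothetical dispatcher on the `provider` value. "file" and unknown
/// values return None because the file provider is left out of this sketch.
pub fn provider_for(config: Arc<Configuration>) -> Option<Box<dyn Discovery>> {
    let kind = config.typecfg.clone();
    match kind.as_str() {
        "consul" => Some(Box::new(ConsulProvider { config })),
        "kubernetes" => Some(Box::new(KubernetesProvider { config })),
        _ => None,
    }
}
```

Sharing one `Arc<Configuration>` means the YAML is parsed once. Each provider then reads the same immutable snapshot, instead of re-loading the file as the old `consul::start(fp, ...)` did.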

**src/utils/dnsclient.rs** (new file), 158 changed lines

```diff
@@ -0,0 +1,158 @@
+/*
+use crate::utils::structs::InnerMap;
+use dashmap::DashMap;
+use hickory_client::client::{Client, ClientHandle};
+use hickory_client::proto::rr::{DNSClass, Name, RecordType};
+use hickory_client::proto::runtime::TokioRuntimeProvider;
+use hickory_client::proto::udp::UdpClientStream;
+use std::net::SocketAddr;
+use std::str::FromStr;
+use std::sync::atomic::AtomicUsize;
+use std::time::Duration;
+use tokio::sync::Mutex;
+
+type DnsError = Box<dyn std::error::Error + Send + Sync + 'static>;
+
+pub struct DnsClientPool {
+    clients: Vec<Mutex<DnsClient>>,
+}
+
+struct DnsClient {
+    client: Client,
+}
+
+pub async fn start2(mut toreturn: Sender<Configuration>, config: Arc<Configuration>) {
+    let k8s = config.kubernetes.clone();
+    match k8s {
+        Some(k8s) => {
+            let dnserver = k8s.servers.unwrap_or(vec!["127.0.0.1:53".to_string()]);
+            let headers = DashMap::new();
+            let end = dnserver.len() - 1;
+            let mut num = 0;
+            if end > 0 {
+                num = rand::rng().random_range(0..end);
+            }
+            let srv = dnserver.get(num).unwrap().to_string();
+            let pool = DnsClientPool::new(5, srv.clone()).await;
+            let u = UpstreamsDashMap::new();
+            if let Some(whitelist) = k8s.services {
+                loop {
+                    let upstreams = UpstreamsDashMap::new();
+                    for service in whitelist.iter() {
+                        let ret = pool.query_srv(service.real.as_str(), srv.clone()).await;
+                        match ret {
+                            Ok(r) => {
+                                upstreams.insert(service.proxy.clone(), r);
+                            }
+                            Err(e) => eprintln!("DNS query failed for {:?}: {:?}", service, e),
+                        }
+                    }
+                    if !compare_dashmaps(&u, &upstreams) {
+                        headers.clear();
+                        for (k, v) in config.headers.clone() {
+                            headers.insert(k.to_string(), v);
+                        }
+
+                        let mut tosend: Configuration = Configuration {
+                            upstreams: Default::default(),
+                            headers: Default::default(),
+                            consul: None,
+                            kubernetes: None,
+                            typecfg: "".to_string(),
+                            extraparams: config.extraparams.clone(),
+                        };
+
+                        clone_dashmap_into(&upstreams, &u);
+                        clone_dashmap_into(&upstreams, &tosend.upstreams);
+                        tosend.headers = headers.clone();
+                        tosend.extraparams.authentication = config.extraparams.authentication.clone();
+                        tosend.typecfg = config.typecfg.clone();
+                        tosend.consul = config.consul.clone();
+                        print_upstreams(&tosend.upstreams);
+                        toreturn.send(tosend).await.unwrap();
+                    }
+
+                    tokio::time::sleep(Duration::from_secs(1)).await;
+                }
+            }
+        }
+        None => {}
+    }
+}
```
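The Consul loop and the commented-out `start2` above follow the same pattern:

1. build a fresh upstream map on each pass;
2. compare it with the previous snapshot (`compare_dashmaps`);
3. only if it differs, copy it into the remembered state (`clone_dashmap_into`) and send a new `Configuration`.

The std-only Rust sketch below captures that change-detection step with a `HashMap` and a `std::sync::mpsc` channel. `Upstreams` and `publish_if_changed` are hypothetical names; `DashMap`, the async channel, and the other configuration fields are omitted. As an added assumption, the sketch also sorts each endpoint list before comparing. `Vec` equality is order-sensitive, so sorting keeps a response that returns the same endpoints in a different order from counting as a change.

```rust
use std::collections::HashMap;
use std::sync::mpsc::Sender;

/// Hypothetical simplified upstream map: proxy name -> (host, port) endpoints.
pub type Upstreams = HashMap<String, Vec<(String, u16)>>;

/// Sends `next` and stores it in `prev` only if it differs from `prev`.
/// `prev` is updated even if the receiver has hung up; the return value
/// reports whether a send was attempted and succeeded.
pub fn publish_if_changed(prev: &mut Upstreams, mut next: Upstreams, tx: &Sender<Upstreams>) -> bool {
    // Normalise endpoint order so a reordered response is not a "change".
    for endpoints in next.values_mut() {
        endpoints.sort();
    }
    if *prev == next {
        return false; // nothing changed: skip the update
    }
    *prev = next.clone();
    tx.send(next).is_ok()
}
```

Sending only on change keeps the consumer from rebuilding its routing tables on every polling tick (every 5 seconds for Consul and every second in `start2`).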

|

|

||||||

|

impl DnsClient {

|

||||||

|

pub async fn new(server: String) -> Result<Self, DnsError> {

|

||||||

|

let server_details = server;

|

||||||

|

let server: SocketAddr = server_details.parse().expect("Unable to parse socket address");

|

||||||

|

let conn = UdpClientStream::builder(server, TokioRuntimeProvider::default()).build();

|

||||||

|

let (client, bg) = Client::connect(conn).await.unwrap();

|

||||||

|

tokio::spawn(bg);

|

||||||

|

Ok(Self { client })

|

||||||

|

}

|

||||||

|

|

||||||

|

    pub async fn query_srv(&mut self, name: &str) -> Result<DashMap<String, (Vec<InnerMap>, AtomicUsize)>, DnsError> {
        let upstreams: DashMap<String, (Vec<InnerMap>, AtomicUsize)> = DashMap::new();
        let mut values = Vec::new();
        match tokio::time::timeout(Duration::from_secs(5), self.client.query(Name::from_str(name)?, DNSClass::IN, RecordType::SRV)).await {
            Ok(Ok(response)) => {
                for answer in response.answers() {
                    if let hickory_client::proto::rr::RData::SRV(srv) = answer.data() {
                        let to_add = InnerMap {
                            address: srv.target().to_string(),
                            port: srv.port(),
                            is_ssl: false,
                            is_http2: false,
                            to_https: false,
                            rate_limit: None,
                        };
                        values.push(to_add);
                    }
                }
                upstreams.insert("/".to_string(), (values, AtomicUsize::new(0)));
                Ok(upstreams)
            }
            Ok(Err(e)) => Err(Box::new(e)),
            Err(_) => Err("DNS query timed out".into()),
        }
    }
}

impl DnsClientPool {
    pub async fn new(pool_size: usize, server: String) -> Self {
        let mut clients = Vec::with_capacity(pool_size);
        for _ in 0..pool_size {
            if let Ok(client) = DnsClient::new(server.clone()).await {
                clients.push(Mutex::new(client));
            }
        }
        Self { clients }
    }

    pub async fn query_srv(&self, name: &str, server: String) -> Result<DashMap<String, (Vec<InnerMap>, AtomicUsize)>, DnsError> {
        // Try to get an available client
        for client_mutex in &self.clients {
            if let Ok(mut client) = client_mutex.try_lock() {
                let vay = client.query_srv(name).await;
                match vay {
                    Ok(_) => return vay,
                    Err(_) => {
                        // If query fails, drop this client and create a new one
                        *client = match DnsClient::new(server).await {
                            Ok(c) => c,
                            Err(e) => return Err(e),
                        };
                        // Retry with the new client
                        return client.query_srv(name).await;
                    }
                }
            }
        }

        // If all clients are busy, wait for the first one with a timeout
        match tokio::time::timeout(Duration::from_secs(2), self.clients[0].lock()).await {
            Ok(mut client) => client.query_srv(name).await,
            Err(_) => Err("All DNS clients are busy and timeout reached".into()),
        }
    }
}
*/
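The commented-out `DnsClientPool::query_srv` hands the query to the first client whose lock is free and only falls back to a bounded wait on `clients[0]` when every slot is busy. The std-only sketch below illustrates just that selection step. `Pool` and `try_acquire` are hypothetical names, and it uses `std::sync::Mutex` instead of the async mutex the original appears to rely on (its `lock()` is awaited under `tokio::time::timeout`), so it shows the scan-then-fallback idea rather than a drop-in replacement.

```rust
use std::sync::{Mutex, MutexGuard};

/// Hypothetical pool used only to illustrate "first free slot wins".
pub struct Pool<T> {
    slots: Vec<Mutex<T>>,
}

impl<T> Pool<T> {
    pub fn new(items: Vec<T>) -> Self {
        Self { slots: items.into_iter().map(Mutex::new).collect() }
    }

    /// Scans the slots in order and returns the first one whose lock is free,
    /// together with its index. `try_lock` never blocks, so a busy slot is
    /// simply skipped. `None` means every slot is held, which is the point
    /// where the original switches to a timed wait on slot 0.
    pub fn try_acquire(&self) -> Option<(usize, MutexGuard<'_, T>)> {
        self.slots
            .iter()
            .enumerate()
            .find_map(|(i, slot)| slot.try_lock().ok().map(|guard| (i, guard)))
    }
}
```

Scanning with a non-blocking `try_lock` keeps concurrent lookups spread across clients; only when all of them are in use does a caller have to wait.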

@@ -2,7 +2,7 @@ use crate::utils::parceyaml::load_configuration;
 use crate::utils::structs::Configuration;
 use futures::channel::mpsc::Sender;
 use futures::SinkExt;
-use log::{error, info, warn};
+use log::error;
 use notify::event::ModifyKind;
 use notify::{Config, Event, EventKind, RecommendedWatcher, RecursiveMode, Watcher};
 use pingora::prelude::sleep;
@@ -15,19 +15,7 @@ pub async fn start(fp: String, mut toreturn: Sender<Configuration>) {
     let file_path = fp.as_str();
     let parent_dir = Path::new(file_path).parent().unwrap();
     let (local_tx, mut local_rx) = tokio::sync::mpsc::channel::<notify::Result<Event>>(1);
-    let snd = load_configuration(file_path, "filepath");
 
-    match snd {
-        Some(snd) => {
-            if snd.typecfg != "file" {
-                warn!("Disabling file watcher, requested discovery type is: {}", snd.typecfg);
-                return;
-            }
-            info!("Watching for changes in {:?}", parent_dir);
-            toreturn.send(snd).await.unwrap();
-        }
-        None => {}
-    }
     let _watcher_handle = task::spawn_blocking({
         let parent_dir = parent_dir.to_path_buf(); // Move directory path into the closure
         move || {
@@ -53,7 +41,7 @@ pub async fn start(fp: String, mut toreturn: Sender<Configuration>) {
 if start.elapsed() > Duration::from_secs(2) {
     start = Instant::now();
     // info!("Config File changed :=> {:?}", e);
-    let snd = load_configuration(file_path, "filepath");
+    let snd = load_configuration(file_path, "filepath").await;
     match snd {
         Some(snd) => {
             toreturn.send(snd).await.unwrap();
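In the watcher, a reload only happens when more than two seconds have passed since the previous one (`start.elapsed() > Duration::from_secs(2)`), which collapses the burst of filesystem events a single save usually produces into one reload. The same time-based debounce can be sketched as a small std-only type. `Debouncer` and `should_fire` are hypothetical, the current time is passed in explicitly so the behaviour is easy to test, and how the original initialises `start` is not visible in this hunk.

```rust
use std::time::{Duration, Instant};

/// Hypothetical debouncer mirroring the `start.elapsed() > 2s` check.
pub struct Debouncer {
    last: Instant,
    window: Duration,
}

impl Debouncer {
    /// `last` is the reference point; events within `window` of it are ignored.
    pub fn new(last: Instant, window: Duration) -> Self {
        Self { last, window }
    }

    /// Returns true (and moves the reference point to `now`) only when
    /// strictly more than `window` has elapsed since the last accepted event.
    pub fn should_fire(&mut self, now: Instant) -> bool {
        if now.saturating_duration_since(self.last) > self.window {
            self.last = now;
            true
        } else {
            false
        }
    }
}
```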

@@ -1,7 +1,7 @@
 use crate::utils::structs::{InnerMap, UpstreamsDashMap, UpstreamsIdMap};
 use crate::utils::tools::*;
 use dashmap::DashMap;
-use log::{error, info, warn};
+use log::{error, warn};
 use reqwest::{Client, Version};
 use std::sync::atomic::AtomicUsize;
 use std::sync::Arc;
@@ -9,87 +9,78 @@ use std::time::Duration;
 use tokio::time::interval;
 use tonic::transport::Endpoint;
 
-#[allow(unused_assignments)]
 pub async fn hc2(upslist: Arc<UpstreamsDashMap>, fullist: Arc<UpstreamsDashMap>, idlist: Arc<UpstreamsIdMap>, params: (&str, u64)) {
     let mut period = interval(Duration::from_secs(params.1));
-    let mut first_run = 0;
-    let client = Client::builder().timeout(Duration::from_secs(2)).danger_accept_invalid_certs(true).build().unwrap();
+    let client = Client::builder().timeout(Duration::from_secs(params.1)).danger_accept_invalid_certs(true).build().unwrap();
     loop {
         tokio::select! {
             _ = period.tick() => {
-                let totest : UpstreamsDashMap = DashMap::new();
-                let fclone : UpstreamsDashMap = clone_dashmap(&fullist);
-                for val in fclone.iter() {
-                    let host = val.key();
-                    let inner = DashMap::new();
-                    let mut scheme = InnerMap::new();
-                    for path_entry in val.value().iter() {
-                        let path = path_entry.key();
-                        let mut innervec= Vec::new();
-                        for k in path_entry.value().0 .iter().enumerate() {
-                            let mut _link = String::new();
-                            let tls = detect_tls(k.1.address.as_str(), &k.1.port, &client).await;
-                            let mut is_h2 = false;
-
-                            if tls.1 == Some(Version::HTTP_2) {
-                                is_h2 = true;
-                            }
-                            match tls.0 {
-                                true => _link = format!("https://{}:{}{}", k.1.address, k.1.port, path),
-                                false => _link = format!("http://{}:{}{}", k.1.address, k.1.port, path),
-                            }
-                            scheme = InnerMap {
-                                address: k.1.address.clone(),
-                                port: k.1.port,
-                                is_ssl: tls.0,
-                                is_http2: is_h2,
-                                to_https: k.1.to_https,
-                                rate_limit: k.1.rate_limit,
-                            };
-                            let resp = http_request(_link.as_str(), params.0, "", &client).await;
-                            match resp.0 {
-                                true => {
-                                    if resp.1 {
-                                        scheme = InnerMap {
-                                            address: k.1.address.clone(),
-                                            port: k.1.port,
-                                            is_ssl: tls.0,
-                                            is_http2: is_h2,
-                                            to_https: k.1.to_https,
-                                            rate_limit: k.1.rate_limit,
-                                        };
-                                    }
-                                    innervec.push(scheme);
-                                }
-                                false => {
-                                    warn!("Dead Upstream : {}", _link);
-                                }
-                            }
-                        }
-                        inner.insert(path.clone().to_owned(), (innervec, AtomicUsize::new(0)));
-                    }
-                    totest.insert(host.clone(), inner);
-                }
-
-                if first_run == 1 {
-                    info!("Performing initial hatchecks and upstreams ssl detection");
-                    clone_idmap_into(&totest, &idlist);
-                    info!("Aralez is up and ready to serve requests, the upstreams list is:");
-                    print_upstreams(&totest)
-                }
-
-                first_run+=1;
-
-                if ! compare_dashmaps(&totest, &upslist){
-                    clone_dashmap_into(&totest, &upslist);
-                    clone_idmap_into(&totest, &idlist);
-                }
-
+                populate_upstreams(&upslist, &fullist, &idlist, params, &client).await;
             }
         }
     }
 }
 
+pub async fn populate_upstreams(upslist: &Arc<UpstreamsDashMap>, fullist: &Arc<UpstreamsDashMap>, idlist: &Arc<UpstreamsIdMap>, params: (&str, u64), client: &Client) {
+    let totest = build_upstreams(fullist, params.0, client).await;
+    if !compare_dashmaps(&totest, upslist) {
+        clone_dashmap_into(&totest, upslist);
+        clone_idmap_into(&totest, idlist);
+    }
+}
+
+pub async fn initiate_upstreams(fullist: UpstreamsDashMap) -> UpstreamsDashMap {
+    let client = Client::builder().timeout(Duration::from_secs(2)).danger_accept_invalid_certs(true).build().unwrap();
+    build_upstreams(&fullist, "HEAD", &client).await
+}
+
+async fn build_upstreams(fullist: &UpstreamsDashMap, method: &str, client: &Client) -> UpstreamsDashMap {
+    let totest: UpstreamsDashMap = DashMap::new();
+    let fclone = clone_dashmap(fullist);
+    for val in fclone.iter() {
+        let host = val.key();
+        let inner = DashMap::new();
+
+        for path_entry in val.value().iter() {
+            let path = path_entry.key();
+            let mut innervec = Vec::new();
+
+            for (_, upstream) in path_entry.value().0.iter().enumerate() {
+                let tls = detect_tls(upstream.address.as_str(), &upstream.port, &client).await;
+                let is_h2 = matches!(tls.1, Some(Version::HTTP_2));
+
+                let link = if tls.0 {
+                    format!("https://{}:{}{}", upstream.address, upstream.port, path)
+                } else {
+                    format!("http://{}:{}{}", upstream.address, upstream.port, path)
+                };
+
+                let mut scheme = InnerMap {
+                    address: upstream.address.clone(),
+                    port: upstream.port,
+                    is_ssl: tls.0,
+                    is_http2: is_h2,
+                    to_https: upstream.to_https,
+                    rate_limit: upstream.rate_limit,
+                };
+
+                let resp = http_request(&link, method, "", &client).await;
+                if resp.0 {
+                    if resp.1 {
+                        scheme.is_http2 = is_h2; // could be adjusted further
+                    }
+                    innervec.push(scheme);
+                } else {
+                    warn!("Dead Upstream : {}", link);
+                }
+            }
+            inner.insert(path.clone(), (innervec, AtomicUsize::new(0)));
+        }
+        totest.insert(host.clone(), inner);
+    }
+    totest
+}
+
 async fn http_request(url: &str, method: &str, payload: &str, client: &Client) -> (bool, bool) {
     if !["POST", "GET", "HEAD"].contains(&method) {
         error!("Method {} not supported. Only GET|POST|HEAD are supported ", method);
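The new `build_upstreams` probes every configured server, builds an `http://` or `https://` URL from the TLS detection result, and keeps only the servers whose probe succeeds, logging the rest as dead. A synchronous, std-only sketch of that filter follows. `Upstream`, `probe_url` and `live_upstreams` are hypothetical names, and the `probe` closure stands in for the real `detect_tls` and `http_request` calls, which are async and use `reqwest` in the source.

```rust
/// Hypothetical, trimmed-down stand-in for `InnerMap`.
#[derive(Clone, Debug, PartialEq)]
pub struct Upstream {
    pub address: String,
    pub port: u16,
    pub is_ssl: bool,
}

/// Builds the health-check URL the same way `build_upstreams` formats `link`.
pub fn probe_url(u: &Upstream, path: &str) -> String {
    let scheme = if u.is_ssl { "https" } else { "http" };
    format!("{}://{}:{}{}", scheme, u.address, u.port, path)
}

/// Keeps only upstreams whose probe succeeds. Failures are reported like the
/// original's `warn!("Dead Upstream : {}", link)`, here via `eprintln!`.
pub fn live_upstreams<F>(upstreams: &[Upstream], path: &str, probe: F) -> Vec<Upstream>
where
    F: Fn(&str) -> bool,
{
    upstreams
        .iter()
        .filter(|u| {
            let url = probe_url(u, path);
            let alive = probe(&url);
            if !alive {
                eprintln!("Dead Upstream : {}", url);
            }
            alive
        })
        .cloned()
        .collect()
}
```

Keeping the probe behind a closure separates "how a URL is built" from "how liveness is checked", which is roughly the split the refactor makes between `build_upstreams` and `http_request`.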

133
src/utils/kuber.rs
Normal file
@@ -0,0 +1,133 @@
// use crate::utils::dnsclient::DnsClientPool;
use crate::utils::structs::{Configuration, InnerMap, UpstreamsDashMap};
use crate::utils::tools::{clone_dashmap_into, compare_dashmaps, print_upstreams};
use dashmap::DashMap;
use futures::channel::mpsc::Sender;
use futures::SinkExt;
use pingora::prelude::sleep;
use rand::Rng;
use reqwest::Client;
use std::env;
use std::sync::atomic::AtomicUsize;
use std::sync::Arc;
use std::time::Duration;
use tokio::fs::File;
use tokio::io::AsyncReadExt;

// static KUBERNETES_SERVICE_HOST: &str = "140.238.122.18:6443";

// static TOKEN: &str = "eyJhbGciOiJSUzI1NiIsImtpZCI6InJXMzl2VFlHTDM4V0tBbWxNQnRhbnRVcDlvcS10MjRDVHNwc2p3d1ZXeDAifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNzg4MjY3OTgxLCJpYXQiOjE3NTY3MzE5ODEsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwianRpIjoiMDM4ZWVlODAtNGViZi00MjU5LWI0OTctYmYwNDgxMmYyNWJhIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJzdGFnaW5nIiwibm9kZSI6eyJuYW1lIjoiMTcyLjE2LjAuMTU1IiwidWlkIjoiOGY2ZTk2ZjYtNDdjNS00YjZhLTkwMmUtYmZhZTUwNzcxMWFjIn0sInBvZCI6eyJuYW1lIjoiYXJhbGV6LTY3Njg5OGY5ZDUtaHpienEiLCJ1aWQiOiI2NjAyZDFjNy00ZWM2LTRiZDEtYTc3NS00NzI5OGYyMTc0N2QifSwic2VydmljZWFjY291bnQiOnsibmFtZSI6ImFyYWxlei1zYSIsInVpZCI6IjVjYmYxZTU2LTJjY2YtNDFlMS05OGU2LTc3NmY1ZWY1NGRkOCJ9LCJ3YXJuYWZ0ZXIiOjE3NTY3MzU1ODh9LCJuYmYiOjE3NTY3MzE5ODEsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDpzdGFnaW5nOmFyYWxlei1zYSJ9.fnlQoOMEztWx6aE4JNPCMVDVc4s8ygs1tvuU_4FoNTWnTipFivzV3duHAg5sHFnLexYEbeBzWqr1betk__ATfy5RB5UaDdg0kk2AdVjYhvUfW4FcIqPNzXBUd74BOUG8vhN6bhZTQC8Fh0eCLCn9XXwVGIO94LKi9LmLbFiDAhpQDGwbXYnI8kV4nNGRE3kf0fsb6SyHs_8bOGc-t2U6OPdAFqBsk4JliaqiXhJKsoc8JCfUkcxYkT7GqIZxFYpvgisbOdZL7_iVyLU1BiSPMHb0jFa4O60aZ8EzCR7Mio0F5A8eZjSCf_f90ecUCGFuW3eCTCDd02RutXeSyPqxhQ";


#[derive(Debug, serde::Deserialize)]
struct Endpoints {
    subsets: Option<Vec<Subset>>,
}

#[derive(Debug, serde::Deserialize)]
struct Subset {
    addresses: Option<Vec<Address>>,
    ports: Option<Vec<Port>>,
}

#[derive(Debug, serde::Deserialize)]
struct Address {
    ip: String,
}

#[derive(Debug, serde::Deserialize)]
struct Port {
    // name: String,
    port: u16,
}

pub async fn start(mut toreturn: Sender<Configuration>, config: Arc<Configuration>) {
    let upstreams = UpstreamsDashMap::new();
    let prev_upstreams = UpstreamsDashMap::new();
    loop {
        if let Some(kuber) = config.kubernetes.clone() {
            let path = kuber.tokenpath.unwrap_or("/var/run/secrets/kubernetes.io/serviceaccount/token".to_string());
            let token = read_token(path.as_str()).await;
            let servers = kuber.servers.unwrap_or(vec![format!(
                "{}:{}",
                env::var("KUBERNETES_SERVICE_HOST").unwrap_or("0.0.0.0".to_string()),
                env::var("KUBERNETES_SERVICE_PORT_HTTPS").unwrap_or("0".to_string())
            )]);
            let end = servers.len() - 1;
            let mut num = 0;
            if end > 0 {
                num = rand::rng().random_range(0..end);
            }
            let server = servers.get(num).unwrap().to_string();

            if let Some(svc) = kuber.services {
                for i in svc {
                    let url = format!("https://{}/api/v1/namespaces/staging/endpoints/{}", server, i.real);
                    let list = get_by_http(&*url, &*token).await;
                    if let Some(list) = list {
                        upstreams.insert(i.proxy.clone(), list);
                    }
                }
            }
        }

        if !compare_dashmaps(&upstreams, &prev_upstreams) {
            let tosend: Configuration = Configuration {
                upstreams: Default::default(),
                headers: config.headers.clone(),
                consul: config.consul.clone(),
                kubernetes: config.kubernetes.clone(),
                typecfg: config.typecfg.clone(),
                extraparams: config.extraparams.clone(),
            };

            clone_dashmap_into(&upstreams, &prev_upstreams);
            clone_dashmap_into(&upstreams, &tosend.upstreams);
            print_upstreams(&tosend.upstreams);
            toreturn.send(tosend).await.unwrap();
        }
        sleep(Duration::from_secs(5)).await;
    }
}

pub async fn get_by_http(url: &str, token: &str) -> Option<DashMap<String, (Vec<InnerMap>, AtomicUsize)>> {
    let client = Client::builder().timeout(Duration::from_secs(2)).danger_accept_invalid_certs(true).build().ok()?;

    let resp = client.get(url).bearer_auth(token).send().await.ok()?;

    if !resp.status().is_success() {
        eprintln!("Kubernetes API returned status: {}", resp.status());
        return None;
    }

    let endpoints: Endpoints = resp.json().await.ok()?;
    let upstreams: DashMap<String, (Vec<InnerMap>, AtomicUsize)> = DashMap::new();

    if let Some(subsets) = endpoints.subsets {
        for subset in subsets {
            if let (Some(addresses), Some(ports)) = (subset.addresses, subset.ports) {
                for addr in addresses {
                    let mut inner_vec = Vec::new();
                    for port in &ports {
                        let to_add = InnerMap {
                            address: addr.ip.clone(),
                            port: port.port.clone(),
                            is_ssl: false,
                            is_http2: false,
                            to_https: false,
                            rate_limit: None,
                        };
                        inner_vec.push(to_add);
                    }
                    upstreams.insert("/".to_string(), (inner_vec, AtomicUsize::new(0)));
                }
            }
        }
    }
    Some(upstreams)
}

async fn read_token(path: &str) -> String {
    let mut file = File::open(path).await.unwrap();
    let mut contents = String::new();
    file.read_to_string(&mut contents).await.unwrap();
    contents.trim().to_string()
}
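Two details of the new `kuber.rs` look worth double-checking. First, `random_range(0..end)` with `end = servers.len() - 1` uses a half-open range, so the last configured server appears never to be picked, and an empty `servers` list would underflow `servers.len() - 1`. Second, in `get_by_http` the `upstreams.insert("/".to_string(), ...)` call sits inside the `for addr in addresses` loop, so each address seems to overwrite the previous one under the same `"/"` key. The std-only sketch below shows one way to handle both. `pick_index`, `flatten` and the simplified `Subset` struct are hypothetical, and `seed` stands in for the random number the original draws from `rand`.

```rust
/// Hypothetical, serde-free mirror of an Endpoints subset.
pub struct Subset {
    pub addresses: Option<Vec<String>>, // pod IPs
    pub ports: Option<Vec<u16>>,
}

/// Maps any number onto a valid index in `0..len`, so every server can be
/// chosen. Returns `None` for an empty list instead of underflowing.
pub fn pick_index(len: usize, seed: u64) -> Option<usize> {
    if len == 0 {
        None
    } else {
        Some((seed % len as u64) as usize)
    }
}

/// Collects every (ip, port) pair from all subsets into one list, so a single
/// `"/"` entry would hold all endpoints rather than only the last address.
/// Subsets missing either addresses or ports are skipped, as in the original.
pub fn flatten(subsets: &[Subset]) -> Vec<(String, u16)> {
    let mut out = Vec::new();
    for subset in subsets {
        if let (Some(addresses), Some(ports)) = (&subset.addresses, &subset.ports) {
            for ip in addresses {
                for port in ports {
                    out.push((ip.clone(), *port));
                }
            }
        }
    }
    out
}
```

In the real code the flattened list would be turned into `InnerMap` values and inserted once, after the loops, under the `"/"` key.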

@@ -61,27 +61,3 @@ pub fn calc_metrics(metric_types: &MetricTypes) {
     REQUESTS_BY_METHOD.with_label_values(&[&metric_types.method]).inc();
     RESPONSE_LATENCY.observe(metric_types.latency.as_secs_f64());
 }
-/*
-pub fn calc_metrics(method: String, code: u16, latency: Duration) {
-    REQUEST_COUNT.inc();
-    let timer = REQUEST_LATENCY.start_timer();
-    timer.observe_duration();
-    RESPONSE_CODES.with_label_values(&[&code.to_string()]).inc();
-    REQUESTS_BY_METHOD.with_label_values(&[&method]).inc();
-    RESPONSE_LATENCY.observe(latency.as_secs_f64());
-}
-
-tokio::spawn(async move {
-    let mut interval = tokio::time::interval(std::time::Duration::from_secs(5));
-    loop {
-        interval.tick().await;
-
-        // read Pingora stats
-        let stats = pingora.get_stats();
-
-        // update Prometheus metrics accordingly
-        REQUEST_COUNT.set(stats.requests_total);
-        // ... etc
-    }
-});
-*/

@@ -1,11 +1,15 @@
+use crate::utils::healthcheck;
 use crate::utils::structs::*;
+use crate::utils::tools::{clone_dashmap, clone_dashmap_into, print_upstreams};
 use dashmap::DashMap;
 use log::{error, info, warn};
 use std::collections::HashMap;
-use std::fs;
 use std::sync::atomic::AtomicUsize;
+// use std::sync::mpsc::{channel, Receiver, Sender};
+use std::{env, fs};
+// use tokio::sync::oneshot::{Receiver, Sender};
 
-pub fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {
+pub async fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {
     let yaml_data = match kind {
         "filepath" => match fs::read_to_string(d) {
             Ok(data) => {
@@ -38,23 +42,22 @@ pub fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {
 
     let mut toreturn = Configuration::default();
 
-    populate_headers_and_auth(&mut toreturn, &parsed);
+    populate_headers_and_auth(&mut toreturn, &parsed).await;
     toreturn.typecfg = parsed.provider.clone();
 
     match parsed.provider.as_str() {
         "file" => {
-            populate_file_upstreams(&mut toreturn, &parsed);
+            populate_file_upstreams(&mut toreturn, &parsed).await;
             Some(toreturn)
         }
         "consul" => {
             toreturn.consul = parsed.consul;
-            if toreturn.consul.is_some() {
-                Some(toreturn)
-            } else {
-                None
-            }
-        }
-        "kubernetes" => None,
+            toreturn.consul.is_some().then_some(toreturn)
+        }
+        "kubernetes" => {
+            toreturn.kubernetes = parsed.kubernetes;
+            toreturn.kubernetes.is_some().then_some(toreturn)
+        }
         _ => {
             warn!("Unknown provider {}", parsed.provider);
             None
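The new `consul` and `kubernetes` arms replace the `if ... { Some(toreturn) } else { None }` pattern with `bool::then_some`, which returns `Some(value)` when the boolean is true and `None` otherwise. Its argument is evaluated eagerly, which is harmless here because `toreturn` is simply moved in. The std-only sketch below reproduces that dispatch. `Settings` and `select_provider` are hypothetical simplifications of `Configuration` and `load_configuration`, not the project's types.

```rust
/// Hypothetical, trimmed-down configuration.
#[derive(Debug, Default, PartialEq)]
pub struct Settings {
    pub typecfg: String,
    pub consul: Option<String>,
    pub kubernetes: Option<String>,
}

/// Returns the settings only when the chosen provider has its section filled
/// in, mirroring `toreturn.consul.is_some().then_some(toreturn)` in the diff.
pub fn select_provider(provider: &str, consul: Option<String>, kubernetes: Option<String>) -> Option<Settings> {
    let mut cfg = Settings { typecfg: provider.to_string(), ..Default::default() };
    match provider {
        "file" => Some(cfg),
        "consul" => {
            cfg.consul = consul;
            // `is_some()` finishes borrowing `cfg` before `then_some` moves it.
            cfg.consul.is_some().then_some(cfg)
        }
        "kubernetes" => {
            cfg.kubernetes = kubernetes;
            cfg.kubernetes.is_some().then_some(cfg)
        }
        _ => None,
    }
}
```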

@@ -62,7 +65,7 @@ pub fn load_configuration(d: &str, kind: &str) -> Option<Configuration> {
     }
 }
 
-fn populate_headers_and_auth(config: &mut Configuration, parsed: &Config) {
+async fn populate_headers_and_auth(config: &mut Configuration, parsed: &Config) {
     if let Some(headers) = &parsed.headers {
         let mut hl = Vec::new();
         for header in headers {
@@ -93,7 +96,8 @@ fn populate_headers_and_auth(config: &mut Configuration, parsed: &Config) {
     }
 }
 
-fn populate_file_upstreams(config: &mut Configuration, parsed: &Config) {
+async fn populate_file_upstreams(config: &mut Configuration, parsed: &Config) {
+    let imtdashmap = UpstreamsDashMap::new();
     if let Some(upstreams) = &parsed.upstreams {
         for (hostname, host_config) in upstreams {
             let path_map = DashMap::new();
@@ -116,14 +120,6 @@ fn populate_file_upstreams(config: &mut Configuration, parsed: &Config) {
 header_list.insert(path.clone(), hl);
 
 for server in &path_config.servers {
-    // let mut rate: Option<isize> = None;
-    // let size: isize = path_config.servers.len() as isize;
-    // if let Some(limit) = &path_config.rate_limit {
-    // if size > 0 {
-    // rate = Some(limit / size);
-    // }
-    // }
-
     if let Some((ip, port_str)) = server.split_once(':') {
         if let Ok(port) = port_str.parse::<u16>() {
             server_list.push(InnerMap {
@@ -138,22 +134,24 @@ fn populate_file_upstreams(config: &mut Configuration, parsed: &Config) {